TL;DR

Consumer insight questions work when they align with your method and objective.

Surveys vs. qualitative interviews: Use surveys to measure customer behavior at scale. Use qualitative interviews when you need to understand why customers behave the way they do. Choosing the wrong method wastes both budget and time.

Question sequencing: Structure consumer insight questions by research objective first, not topic. Broad context before specific probing. Behavioral before attitudinal.

Stakeholder traceability: Actionable insights only drive decisions when they link back to the source. Build outputs around evidence, not summaries.

Most consumer insight questions are designed for the wrong constraint. Teams reach for surveys because they're fast, but surveys capture what customers say they think, not why they behave the way they do. Agency-moderated interviews get closer to that "why," but at a cost most teams cannot sustain: six to twelve weeks of fieldwork, five-figure budgets, and findings that arrive after the decision has already been made. Small insights teams, typically one to five researchers serving entire organisations with dozens of research requests per quarter, cannot run enough qualitative interviews to meet internal demand without agency support, and agency timelines are incompatible with the pace of business decisions.

The result is a familiar pattern. Shallow consumer insight questions produce shallow answers. Those answers arrive too late to change anything. And the research function gets blamed for being slow when the real problem is structural.

That structure is changing. Qualitative research is shedding the constraints that have defined it for decades: slow recruitment cycles, manual moderation, and unsearchable outputs buried in decks. The tradeoff between depth and speed is not a law of nature. It was a product of how research had to be done before the tools caught up with the demand. The ability to gather insights at scale, understand consumer behavior in real time, and deliver evidence-ready outputs to stakeholders is no longer limited to teams with agency budgets and twelve-week timelines.

This article covers question types by research objective, adaptive probing techniques, and analysis frameworks for making sense of consumer insights interview questions at scale.

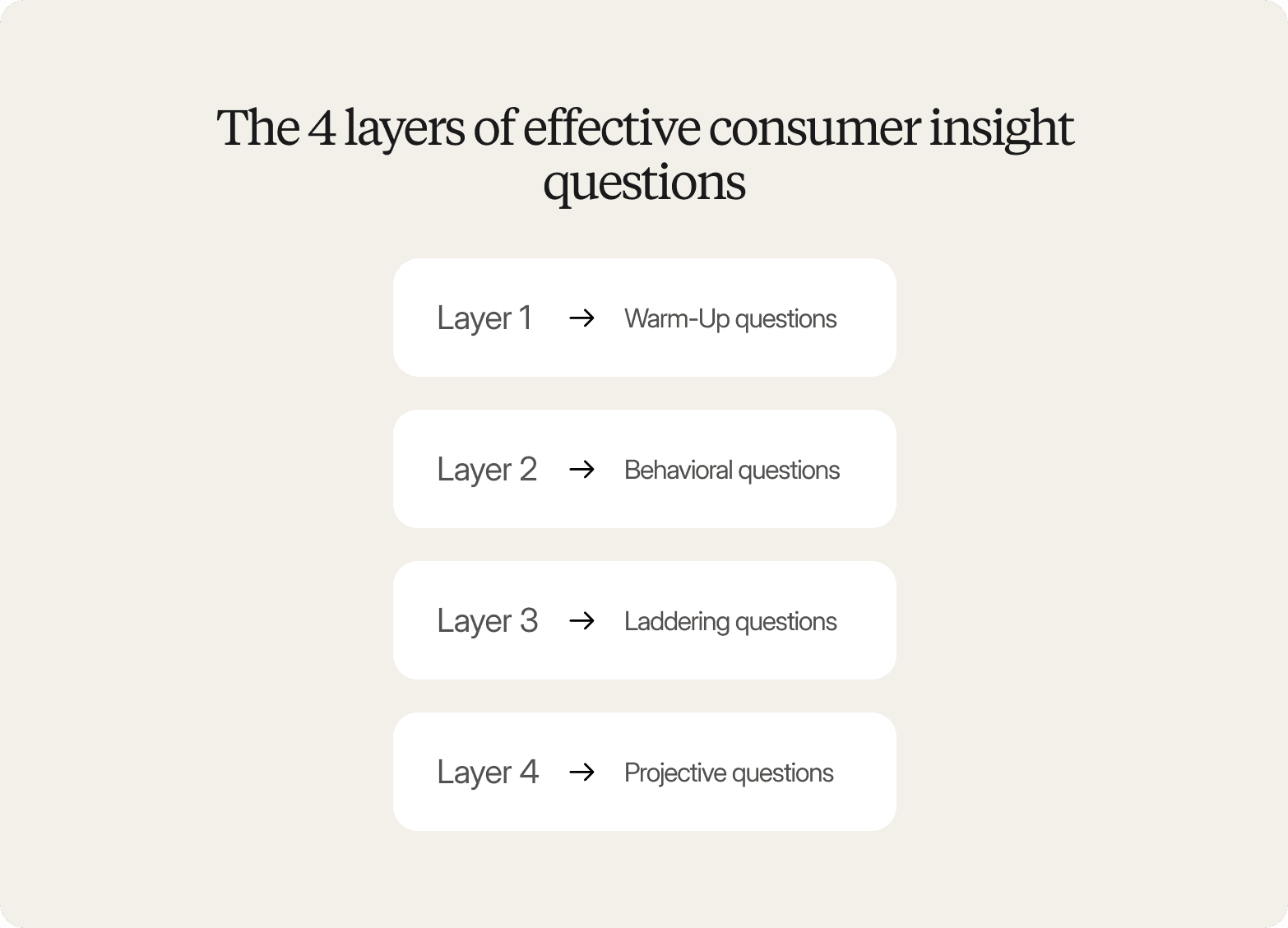

The 4 layers of effective consumer insight questions

A consumer insight question does more than collect a response. It creates the conditions for a participant to reveal something they might not have volunteered on their own: a habit they've never examined, a tension they haven't named, a belief that shapes their consumer behavior without their full awareness. The difference between a question that generates data and one that generates genuine consumer insights lies in what it's designed to surface: not stated preferences or customer satisfaction ratings, but motivations, behaviors, and emotions.

The most reliable way to reach that level of depth is through a structured approach. Consumer insights interview questions work best when they follow four distinct layers, each one earning access to the next.

Layer 1: Warm-Up questions

These establish rapport and lower the social pressure that makes participants default to polished, acceptable answers. Example: "Tell me about the last time you bought something in this category." The goal is to get the participant out of evaluation mode and into memory and experience.

Layer 2: Behavioral questions

These anchor the conversation in real actions rather than hypothetical preferences. Behavioral questions force specificity around actual purchase behavior and customer journey moments. Example: "What made you choose that brand over the others you considered?" They're harder to fabricate than opinion questions, and specificity is where the real signal lives.

Layer 3: Laddering questions

These follow the behavioral thread deeper, probing the motivations behind the action. Example: "What would it take for you to switch to something else?" Laddering moves the conversation from what someone did to why they did it, the layer most survey questions never reach. This is where you begin to identify trends in customer needs that wouldn't surface in quantitative data.

Layer 4: Projective questions

These access beliefs and associations that participants struggle to articulate directly. Example: "If this product were a person, how would you describe them?" Projective questions bypass the rational filter and surface the emotional logic that drives purchasing decisions. They're especially useful for probing brand perception and consumer sentiment.

Compare this to the market research questions most surveys ask: "How satisfied are you on a scale of one to five?" or "Would you recommend this product to a friend?" Those questions confirm that an attitude exists. They do not explain where it came from, what it's connected to, or what would change it.

The four-layer structure is necessary but not sufficient. What makes it work is the ability to respond to what a participant just said. If someone mentions price sensitivity, the next question should follow that thread. If pain points around onboarding surface, probe them. The moment a researcher ignores a signal to stay on script, the interview stops generating deeper insights and starts collecting compliance. That capacity for real-time responsiveness is what separates research that changes decisions from research that fills a slide.

"It picks up on the nuances a survey never could."

CMI Manager, Edgar & Cooper

Consumer insight questions by research objective

Concept testing

Question sequence (example category: snack foods)

Warm-up: "Walk me through the last time you picked up a snack on impulse. What were you doing, and what made you reach for it?"

Behavioral: "When you're looking at something new on the shelf, what do you actually do before you decide whether to try it?"

Motivational: "After reading through this concept, what would make you feel confident enough to put it in your basket, and what would give you pause?"

Projective: "If this product had been around for five years and had a loyal following, who do you picture buying it regularly? What does that person value?"

What this sequence uncovers:

Unmet needs, purchase hesitation triggers, and the gap between claimed appeal and actual adoption intent among target customers.

Avoid this:

"Do you like this concept?" This produces binary approval, not insight into the conditions under which someone would actually buy. The better framing: "Walk me through what you would use this for and when, and describe a situation where you'd reach for it over what you already buy." A well-designed concept testing sequence also surfaces how consumers describe a new product or service in their own words, language that often proves more useful for positioning than anything generated internally.

Survey vs. interview:

Use surveys to quantify purchase intent and rank concept appeal across 500 or more respondents. Use interviews when you need to understand what's driving hesitation, what specific language resonates, and what would need to change before a sceptical buyer commits. Adaptive probing turns a "probably not" into a traceable explanation your team can act on.

Brand perception

Question sequence (example category: financial services)

Warm-up: "When you think about the financial brands you actively use, which one feels most like it understands you? What gives you that impression?"

Behavioral: "Think about the last time you switched a financial product or seriously considered switching. What triggered that? What were you looking for that you weren't getting?"

Motivational: "If you were describing this brand to someone who'd never heard of it, what would you say it's really for, and who would you say it's for?"

Projective: "Imagine this brand as a person at a dinner party. How would they introduce themselves? What would they talk about? Who would they avoid?"

What this sequence uncovers:

Competitive vulnerabilities, the emotional and functional jobs the brand is hired to do, and where positioning language diverges from how consumers actually describe the brand compared to other brands in the category.

Avoid this:

"How would you describe our brand in three words?" This produces a list, not an insight. Participants reach for safe descriptors that flatten real perception. The better framing: "Tell me about a moment when this brand either delivered on what you expected or didn't. What happened, and how did it change how you think about them?"

Survey vs. interview:

Use surveys to measure brand awareness, track equity metrics across time, and compare brand perception across target market segments. Use interviews to understand why a positioning statement lands or falls flat with a specific audience, and to build the verbatim evidence base that makes positioning decisions traceable to real consumer language rather than internal assumptions.

Purchase journey mapping

Question sequence (example category: skincare)

Warm-up: "Tell me about your current skincare routine. How did it end up the way it is? Did you build it deliberately, or did it just kind of happen?"

Behavioral: "Walk me through the last time you added something new to your routine. Where did you start looking? What did you do before you bought?"

Motivational: "At what point did you feel confident enough to make a purchase? What would have made you stop and reconsider?"

Projective: "If you were advising a family member on how to approach this category for the first time, what would you tell them to watch out for?"

What this sequence uncovers:

The decision criteria customers actually use, the information sources that carry real weight, and the specific moments where purchase behavior can be influenced or lost.

Avoid this:

"How do you typically research products before buying?" This invites a generic, idealised account. The better framing: "Walk me through exactly what you did the last time you made this kind of purchase, from the moment you started thinking about it."

Survey vs. interview:

Use surveys to quantify which information sources your target audience uses at each stage of the customer journey. Use interviews to understand the emotional logic behind the journey, the pain points that create friction, and the moments where a competitor could intervene. Collecting feedback through interviews at this depth often surfaces consumer preferences that no survey could anticipate or measure.

Adaptive Probing: How to follow up when consumer insight interview questions need depth

The most valuable moment in a qualitative interview is rarely the answer to the question you planned. It's the unexpected phrase, the hesitation, the offhand comment that opens a door your discussion guide never anticipated. Fixed consumer insight interview questions close that door before the participant finishes speaking. Adaptive probing keeps it open.

Adaptive probing means adjusting your follow-up questions in real time based on what a participant just said, rather than advancing to the next scripted item. In practice, it separates interviews that produce meaningful insights from interviews that produce polished non-answers.

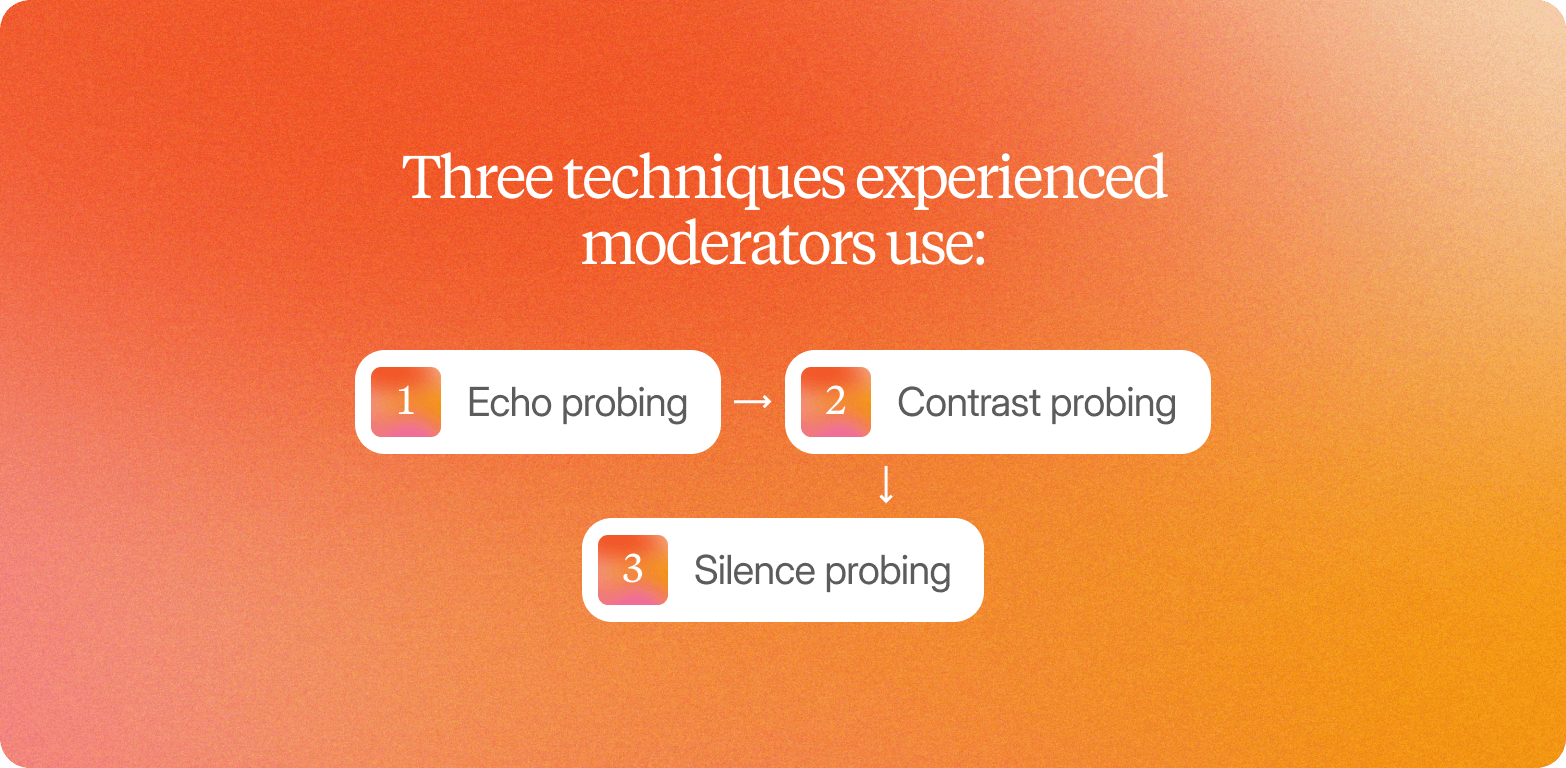

Three techniques experienced moderators use:

Echo probing

Reflect a word or phrase as a question. "You mentioned 'trust': what does trust mean to you in this context?" This surfaces the personal meaning behind language that would otherwise pass unexamined.

Contrast probing

Invite comparison to sharpen a claim. "How does that compare to your experience with other brands in this category?" Vague positive sentiment becomes a concrete expression of customer preferences when a participant has to explain what makes something different.

Silence probing

After a participant finishes answering, pause. Most people interpret silence as an invitation to continue, and what follows is often more candid than the initial reaction.
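As a rough illustration of how the three techniques differ as decisions, here is a toy sketch in Python. Everything in it is an assumption made for illustration: the word lists, the `min_words` threshold, and the `choose_probe` function are hypothetical, and simple keyword rules are far cruder than the judgment a skilled moderator applies. It is not a description of how any moderator or platform actually works.

```python
# Illustrative word lists (assumptions, not a validated lexicon).
ABSTRACT_TERMS = {"trust", "quality", "value", "premium"}
VAGUE_POSITIVES = {"good", "nice", "fine", "great"}


def choose_probe(answer: str, min_words: int = 8) -> str:
    """Pick a follow-up for a participant's answer using toy keyword rules."""
    words = [w.strip(".,!?'\"").lower() for w in answer.split()]

    # Echo probing: reflect an abstract word back as a question.
    for term in words:
        if term in ABSTRACT_TERMS:
            return f"You mentioned '{term}': what does {term} mean to you in this context?"

    # Contrast probing: sharpen vague positive sentiment with a comparison.
    if any(w in VAGUE_POSITIVES for w in words):
        return "How does that compare to your experience with other brands in this category?"

    # Silence probing: a short answer may simply need room to continue.
    if len(words) < min_words:
        return "(pause and wait for the participant to continue)"

    return "(move on to the next planned question)"


print(choose_probe("I just trust them more."))
print(choose_probe("It was good."))
print(choose_probe("Not sure."))
```

The ordering of the rules is itself a design choice: here an abstract term takes priority over everything else, on the assumption that unexamined language is the most valuable thread to follow.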

The operational problem is that adaptive probing requires real-time judgment. A skilled moderator has to listen, assess, and decide within seconds whether to probe deeper, shift direction, or move on. That cognitive load is why agencies charge premium rates, and why most insights teams cannot run enough interviews to keep pace with internal demand. When probing requires a human in the room, qualitative research scales slowly and runs periodically, limiting the team's ability to identify trends or act on shifts in consumer sentiment before decisions close.

That constraint is dissolving. AI-moderated interviews can now probe adaptively based on what participants actually say. The qual cycle that once required weeks of scheduling, moderation, and data analysis can now run in days, at a scale no agency team could match, without trading away the depth that makes qualitative findings credible.

Survey open-text fields capture verbatim responses, but they cannot ask a clarifying question when an answer is vague, probe the meaning behind a word, or follow a thread the researcher did not anticipate. The response is the endpoint. In a well-moderated interview, the response is the starting point.

From Answers to Evidence: Structuring consumer insights analysis questions for stakeholder trust

The consumer insight questions that produce the most defensible findings share one structural feature: they are designed from the start to generate quotable, traceable evidence, not just quantitative data or aggregate responses.

Stakeholder distrust in qualitative research rarely comes from disagreement with the findings. It comes from an inability to inspect them. When an executive asks, "How do you know that?" and the answer is, "That's what participants told us," the credibility of the entire study is at risk. Without a direct line back to what a specific person said, in their own words, no finding can be defended.

The synthesis workflow that produces traceable consumer insights analysis addresses this at every step. Interviews are transcribed and coded by theme, not summarised at the session level. Representative quotes are extracted for each theme, selected for typicality across the participant group. Findings are then packaged with evidence attached: quote cards tied to specific participants, video clips timestamped to the relevant exchange, and frequency counts that show how many customers expressed a given view without prompting.
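The workflow above can be sketched as a small data structure. This is a minimal Python sketch under stated assumptions: the `CodedQuote` fields, the participant IDs, and the sample quotes are all hypothetical, and the coding of each quote to a theme is assumed to have been done already by a researcher or tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedQuote:
    participant_id: str  # hypothetical identifier, e.g. "P03"
    theme: str           # code assigned during analysis
    quote: str           # verbatim participant wording
    timestamp_s: int     # offset into the recording, in seconds
    prompted: bool       # True if the moderator raised the topic first


def summarise_themes(quotes):
    """Group coded quotes by theme and count distinct unprompted participants."""
    by_theme = defaultdict(list)
    for q in quotes:
        by_theme[q.theme].append(q)

    summary = {}
    for theme, items in by_theme.items():
        unprompted = {q.participant_id for q in items if not q.prompted}
        summary[theme] = {
            "unprompted_participants": len(unprompted),
            # Each finding keeps its evidence trail: who said it, where, and what.
            "evidence": [(q.participant_id, q.timestamp_s, q.quote) for q in items],
        }
    return summary


# Made-up example data, for illustration only.
quotes = [
    CodedQuote("P01", "price", "I only buy it on offer.", 312, False),
    CodedQuote("P02", "price", "It costs more than I expected.", 95, True),
    CodedQuote("P03", "price", "Too expensive for a weekday snack.", 640, False),
    CodedQuote("P01", "trust", "I have never heard of the brand.", 410, False),
]

summary = summarise_themes(quotes)
for theme, info in summary.items():
    print(theme, info["unprompted_participants"], "unprompted")
```

Keeping the participant ID and timestamp on every quote is what lets a stakeholder go from a frequency count back to the exact exchange behind it.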

Survey analysis produces none of this. A 72% agreement rate tells you that a pattern exists. It cannot tell you what the pattern feels like, what language surrounds it, or what underlying concern drives it. Even well-structured open-ended survey questions cannot substitute for the contextual richness of a live exchange.

When research outputs live in static slide decks, the customer data supporting each finding becomes inaccessible the moment the deck is filed. Insights stop compounding. They become one-off deliverables rather than institutional memory, and the organisation starts each new study from the same baseline. The discipline of traceability, built into how consumer insight questions are framed and how findings are structured, is what separates research that builds organisational confidence from research that gets questioned every time it enters a room. Informed decisions require not just the finding, but the evidence trail behind it.

When to use surveys vs. qualitative interviews for consumer insight questions

The choice between surveys and qualitative interviews is not a question of which method is better. It is a question of what you are trying to learn and at what stage of your understanding.

When surveys fit the job:

Surveys work when you already know what to measure. Use consumer insight survey questions to quantify consumer preferences across large samples, validate hypotheses your qual work has already generated, track metric shifts over time, or segment audiences by stated behavior and attitude. Multiple-choice and multiple-answer formats capture structured consumer preferences at volume, but they should not be mistaken for a route to deeper insights. If you do not know what to ask, scaling the question only scales the ambiguity.

When interviews fit the job:

Use consumer insight interview questions when you need to understand the reasoning behind a behavior, not just its frequency. Interviews surface motivations that surveys cannot reach: why a current customer switched brands, what made a concept feel off, and what a net promoter score shift actually signals. When research objectives require understanding how factors influence purchasing decisions, interviews consistently produce more relevant insights than surveys alone. They are also the right method for diagnosing changes in brand perception before a campaign goes live.

A combined approach in practice:

Run 10 to 15 interviews to identify the top three reasons customers switch brands, then survey 500 customers to quantify how prevalent each reason is across segments. The interviews generate the hypotheses. The survey validates their scale. Each method does what the other cannot. This model is particularly effective for concept testing, where qualitative rounds surface product features that resonate or confuse respondents, and quantitative rounds measure how widely those reactions hold across the target market.
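A back-of-envelope margin-of-error calculation shows why the two methods are sized so differently. The Python sketch below assumes a simple random sample, a 95% confidence level (z = 1.96), and the normal approximation for a proportion, which is only rough at interview-scale sample sizes. The function name is illustrative.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion p observed in n responses."""
    return z * math.sqrt(p * (1 - p) / n)


# Worst case is p = 0.5, where the variance of a proportion is largest.
moe_500 = margin_of_error(0.5, 500)  # survey-scale sample
moe_15 = margin_of_error(0.5, 15)    # interview-scale sample

print(f"n=500: +/- {moe_500:.1%}")
print(f"n=15:  +/- {moe_15:.1%}")
```

Under these assumptions, 500 respondents pin a proportion down to within roughly four to five percentage points, while 15 interviews leave it uncertain by about twenty-five. That is why the interviews are used to generate the reasons and the survey to measure how common each one is.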

What has changed:

Running 50 interviews per quarter through traditional moderation is structurally impossible for a team of two or three researchers, so teams default to surveys even when the question calls for depth. Metric shifts go undiagnosed. Concept tests return preference scores with no explanation. AI-moderated video interviews now allow teams to run qualitative research at a pace and scale previously only achievable with surveys, removing the forced tradeoff between depth and timeline that caused teams to under-invest in qual in the first place.

How Conveo helps teams answer consumer insight questions with depth and speed

Answering consumer insight questions at the speed decisions actually require, without trading away the depth that makes findings defensible, is the outcome against which insights teams are increasingly measured. Conveo is the video-first AI qualitative research platform built around that shift: adaptive, AI-moderated interviews at scale, compressing qual timelines from weeks to days while producing outputs that hold up under stakeholder scrutiny.

The platform delivers this through three interconnected mechanisms. First, findings adapt to what participants actually say. Conveo's AI moderator probes based on real responses rather than a fixed script, surfacing the consumer behavior signals and contextual nuance that structured guides routinely miss. Second, research runs at scale: asynchronous video interviews allow 10 to 1,000 conversations in parallel, across more than 20 languages, without moderator scheduling or fieldwork coordination. Third, analysis time compresses. Automated transcription, translation, and thematic synthesis eliminate the manual data analysis bottleneck that typically adds weeks between fieldwork and a shareable deliverable.

Traceability is built into the output, not bolted on. Every finding links directly to the video clip and verbatim quote it came from, so stakeholders can audit conclusions rather than accept a summary on faith. Brand perception questions, purchase journey findings, and concept testing results all arrive with the evidence attached: quote cards, timestamped clips, and frequency counts that make every claim defensible.

Consumer insight questions accumulate value across an organisation over time. Conveo's searchable insight library captures that value: every study feeds a secure, cross-searchable repository, so findings from six months ago remain accessible when a related question surfaces today. Teams can cross-reference consumer sentiment across studies, track shifts in customer needs, and identify trends that wouldn't be visible in any single piece of customer data.

Compliance is a procurement requirement, not a footnote. Conveo holds SOC 2 certification, maintains GDPR compliance, and offers optional EU regional data hosting, clearing the governance requirements that quietly block enterprise deals for many research platforms.

One qualification worth stating plainly: Conveo is designed for teams running ongoing interview programmes. If a team needs a single survey fielded with no recurring research ambition, the platform is not the right fit.

Over 400 enterprise teams, including Google, FOX, and Bosch, use Conveo to run video-first interviews at scale.

Frequently Asked Questions

What are consumer insight questions and answers?

What are the 7 basic questions in market research?

What are good consumer insight interview questions for concept testing?

How do you structure consumer insight survey questions to avoid shallow answers?