TL;DR

A well-structured qualitative interview transcript includes speaker labels, timestamps, a session header that covers the date, participant code, and study context, and consistent notation for nonverbal cues. Formatting consistency across sessions is what makes synthesis tractable: it lets researchers spot themes, pull comparable quotes, and navigate directly to relevant moments without re-reading from the start. When AI-moderated interviews are involved, the transcript arrives pre-structured and linked to the source video, shifting the researcher's starting point from cleanup to active analysis, and key insights surface days earlier than the manual route allows.

Most research teams have solved the hard part: getting participants to open up. The qualitative interview itself, the probing, the listening, the follow-up that surfaces what a survey would miss, is where the methodology lives. What happens after the recording stops is where the time goes.

Transcript cleanup is not a minor inconvenience. When analysts arrive at synthesis with inconsistent speaker labels, missing timestamps, and formatting that varies by interviewer or transcription service, the first hours of analysis become data janitorial work. That is time that does not produce findings. For teams under sprint pressure or racing toward a campaign launch, a qualitative interview transcript that requires significant reformatting before it is readable is one that arrives too late to matter.

The fix is not faster transcription. It is structured transcription: consistent metadata headers, standardized speaker attribution, timestamped turns, and annotation fields that travel with the audio file from recording to synthesis. When teams work from one coherent interview transcript format, comparison across participants becomes faster, themes emerge more clearly, and findings carry more weight with stakeholders who can trace every claim back to a source conversation.

This article covers transcript format standards, real examples organized by research program type, and how structured documentation connects to faster synthesis of qualitative data.

Qualitative interview transcript template

Below is a sample transcription of a qualitative interview, formatted so your team can copy it directly into Word or Google Docs and apply it consistently across all studies. Whether you are transcribing semi-structured interviews or more exploratory conversations, this format creates an accurate, analysis-ready record from the moment the session ends.

Applying this format consistently across every interview in a study removes a problem that quietly compounds: when different analysts use different naming conventions, themes are coded inconsistently, quotes become hard to locate, and synthesis takes longer than it should. A shared template means the third analyst reviewing session 14 is working from the same structure as the first analyst who reviewed session one.

Timestamps every two to three minutes also make it practical to trace a finding back to its source. When a stakeholder asks where an insight came from, the answer is a speaker tag and a timestamp, not a vague reference to something a participant said.
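One way to keep the format identical across sessions is to generate the transcript skeleton programmatically rather than retyping it. The sketch below is only an illustration under stated assumptions: the field names, the `[HH:MM:SS]` timestamp style, the `Turn` class, and the `render_transcript` helper are all hypothetical, and the sample values are invented.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    seconds: int   # offset from the start of the recording
    speaker: str   # e.g. "INTERVIEWER" or "PARTICIPANT"
    text: str      # spoken content, bracketed annotations inline


def fmt_ts(seconds: int) -> str:
    """Format a second offset as HH:MM:SS."""
    hours, rem = divmod(seconds, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def render_transcript(header: dict[str, str], turns: list[Turn]) -> str:
    """Render a header block followed by one line per speaker turn."""
    lines = [f"{key}: {value}" for key, value in header.items()]
    lines.append("")  # blank line between header and body
    for turn in turns:
        lines.append(f"[{fmt_ts(turn.seconds)}] {turn.speaker}: {turn.text}")
    return "\n".join(lines)


# Hypothetical values, for illustration only.
header = {
    "Study": "Example concept test",
    "Participant ID": "P07",
    "Interview date": "2025-01-15",
    "Interviewer": "INT-02",
    "Consent confirmed at": "00:00:42",
}
turns = [
    Turn(65, "INTERVIEWER", "What was your first reaction to the price?"),
    Turn(72, "PARTICIPANT", "[long pause] I kind of stopped when I saw it."),
]
transcript_text = render_transcript(header, turns)
print(transcript_text)
```

Because every session passes through the same function, the analyst reading session 14 sees the same layout as the analyst who read session one.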

What makes a good qualitative research interview transcript

Not every transcript is created equal. A raw text dump from an audio recording captures words. A structured qualitative interview transcript captures evidence. The difference matters most when a stakeholder pushes back on a finding, or when you need to compare how 30 participants responded to the same prompt across different research studies. Without a consistent structure, that comparison becomes guesswork.

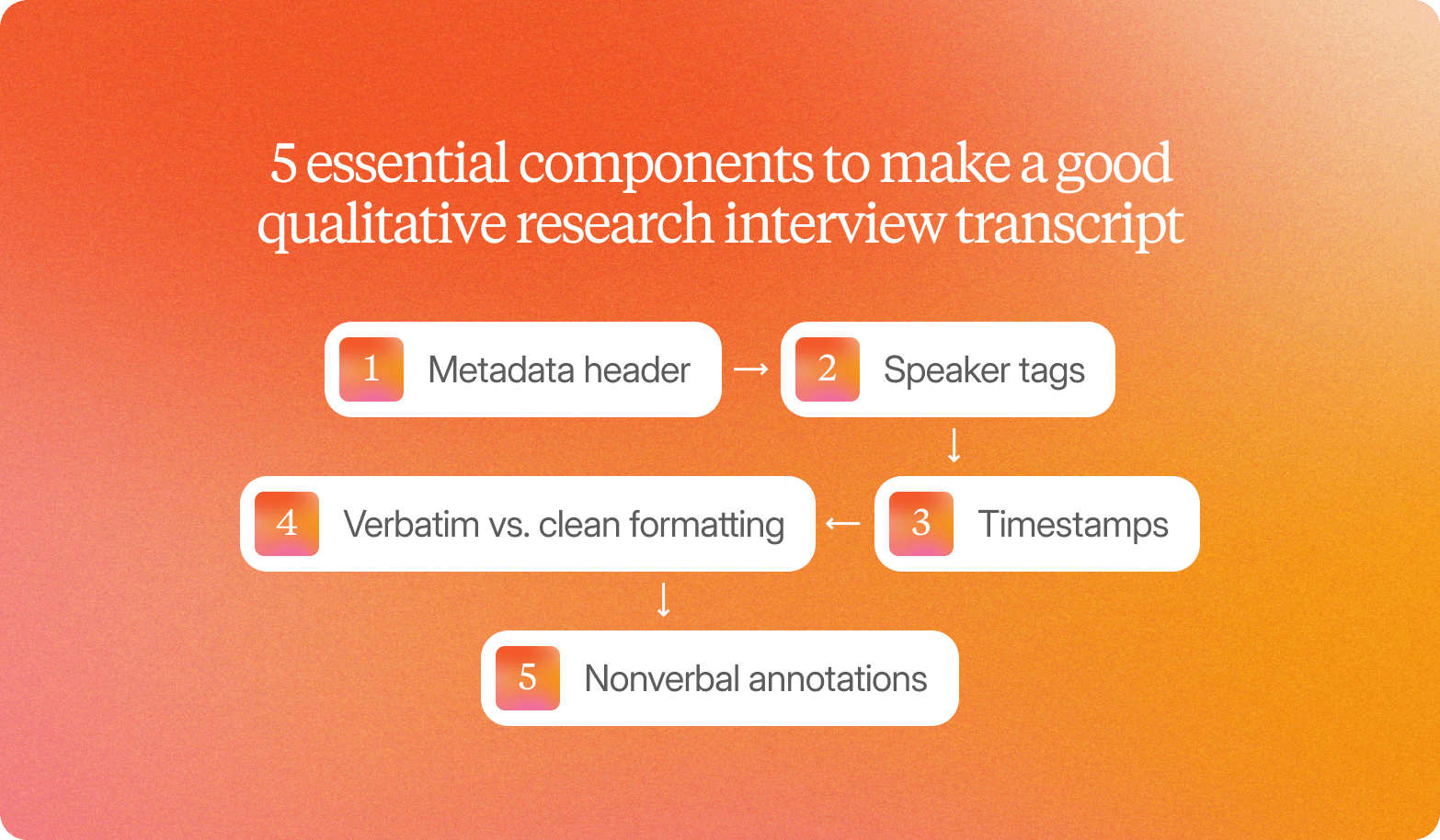

Any qualitative interview transcript worth using for downstream analysis includes five essential components. Together, they are the difference between a document you can search, cite, and defend, and one you can only read.

Metadata header

Every transcript should open with a standard header: study name, participant ID, interview date, interviewer name or ID, and a consent confirmation timestamp. This is the chain of custody for your qualitative data. If a finding gets challenged six months later, the metadata header is how you prove it came from a real session, not a reconstructed memory.
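A header check like this can be automated before a transcript enters analysis. The following is a minimal sketch, assuming the header has already been read into a dictionary; the key names in `REQUIRED_FIELDS` and the `missing_header_fields` helper are hypothetical and simply mirror the five fields listed above.

```python
# Hypothetical key names mirroring the five header fields named in the text.
REQUIRED_FIELDS = (
    "study_name",
    "participant_id",
    "interview_date",
    "interviewer_id",
    "consent_timestamp",
)


def missing_header_fields(header: dict[str, str]) -> list[str]:
    """Return required fields that are absent or blank, in a stable order."""
    return [name for name in REQUIRED_FIELDS if not header.get(name, "").strip()]


# Invented example: the consent timestamp was left blank.
incomplete = {
    "study_name": "Example pricing study",
    "participant_id": "P12",
    "interview_date": "2025-02-03",
    "interviewer_id": "INT-01",
    "consent_timestamp": "  ",
}
print(missing_header_fields(incomplete))
```

Rejecting a transcript with a non-empty result keeps incomplete records out of the evidence trail.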

Speaker tags

Label every turn clearly using a consistent naming convention: INTERVIEWER and PARTICIPANT for one-on-one sessions, or role-based labels (MOD, P1, P2) for focus groups. Consistent speaker tags are what make it possible to isolate participant responses across a full transcript set without manually reading every line.
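To show why consistent tags matter, here is a short sketch that isolates participant responses with a single pattern. It assumes each turn sits on one line in the form `[HH:MM:SS] SPEAKER: text` with an optional timestamp; that layout, the `TURN_RE` pattern, and the `parse_turns` helper are assumptions for illustration, not a standard.

```python
import re
from typing import Optional

# Assumed layout: optional "[HH:MM:SS]" then an upper-case speaker tag and a colon.
TURN_RE = re.compile(r"^(?:\[(\d{2}:\d{2}:\d{2})\]\s*)?([A-Z][A-Z0-9]*):\s*(.*)$")


def parse_turns(transcript: str) -> list[tuple[Optional[str], str, str]]:
    """Return (timestamp, speaker, text) for every line that looks like a turn."""
    turns = []
    for line in transcript.splitlines():
        match = TURN_RE.match(line.strip())
        if match:
            turns.append((match.group(1), match.group(2), match.group(3)))
    return turns


sample = """[00:01:05] INTERVIEWER: How do you save things today?
[00:01:12] PARTICIPANT: Honestly, I just screenshot things.
[00:01:30] INTERVIEWER: And then?
[00:01:34] PARTICIPANT: Half the time, I forget I even saved it."""

participant_only = [
    text for _, speaker, text in parse_turns(sample) if speaker == "PARTICIPANT"
]
print(participant_only)
```

If one interviewer writes `Participant:` and another writes `P:`, the same filter silently misses turns, which is the practical cost of inconsistent labels.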

Timestamps

At a minimum, add a timestamp every two to three minutes. Ideally, timestamp each speaker's turn. Timestamps let analysts jump directly to a moment in the recording to verify a quote and build video highlight reels from the transcript itself.
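The two-to-three-minute minimum can be checked mechanically. This sketch assumes timestamps are `HH:MM:SS` strings in recording order and uses a 180-second threshold; the `to_seconds` and `gaps_over` helpers are hypothetical names.

```python
def to_seconds(timestamp: str) -> int:
    """Convert an HH:MM:SS string to a second offset."""
    hours, minutes, seconds = (int(part) for part in timestamp.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def gaps_over(timestamps: list[str], max_gap: int = 180) -> list[tuple[str, str]]:
    """Return consecutive timestamp pairs that are more than max_gap seconds apart."""
    pairs = zip(timestamps, timestamps[1:])
    return [(a, b) for a, b in pairs if to_seconds(b) - to_seconds(a) > max_gap]


# Invented example: one stretch of the session runs too long without a timestamp.
stamps = ["00:00:00", "00:02:30", "00:07:10", "00:09:00"]
long_gaps = gaps_over(stamps)
print(long_gaps)
```

Each returned pair marks a stretch where an analyst would have to scrub through the recording to locate a quote.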

Verbatim vs. clean formatting

This decision should be made once per study and applied consistently. Verbatim captures filler words, false starts, and hesitations, which matter when how something is said carries analytical weight. Clean verbatim removes verbal clutter while preserving meaning, which is the right call when thematic content is the priority. The critical rule: decide before fieldwork begins, and apply the same standard to every session. Mixing styles across transcripts makes cross-participant comparison unreliable.
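To make the distinction concrete, the sketch below derives a rough clean-verbatim line from a verbatim one by stripping a small set of filler words. It is deliberately naive: the `FILLERS` list and the `to_clean_verbatim` helper are assumptions, ambiguous words such as "like" are left alone because they often carry meaning, and leftover punctuation still needs a human pass.

```python
import re

# Assumed filler list; extend or shrink it per study, then keep it fixed.
FILLERS = ["um", "uh", "er", "you know"]

_FILLER_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(word) for word in FILLERS) + r")\b,?\s*",
    flags=re.IGNORECASE,
)


def to_clean_verbatim(text: str) -> str:
    """Remove listed fillers (plus a trailing comma) and collapse extra spaces."""
    without_fillers = _FILLER_RE.sub("", text)
    return re.sub(r"\s{2,}", " ", without_fillers).strip()


verbatim = "Um, I think, uh, the price is, you know, a bit high."
print(to_clean_verbatim(verbatim))
```

Keeping the verbatim source and deriving the clean version from it with one fixed rule set applies the same standard to every session.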

Nonverbal annotations

Pauses, laughter, tone shifts, visible objects, and gestures belong in the transcript. A participant who laughs nervously when asked about a price point is communicating something that a clean text response will erase. Bracketed annotations such as [long pause], [laughs], or [holds up product] preserve that context without disrupting readability. Background noise and audio interference should also be flagged with [background noise] or [inaudible] so analysts can determine whether a passage is reliable before coding it.
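Bracketed annotations are also easy to read by machine. The sketch below pulls annotations out of a turn's text and flags passages marked `[inaudible]` or `[background noise]` before coding. The `UNRELIABLE` set and both helper names are assumptions, and the functions expect turn text only, since a bracketed timestamp would otherwise be picked up as an annotation.

```python
import re

ANNOTATION_RE = re.compile(r"\[([^\[\]]+)\]")

# Annotations assumed to signal that the audio should be re-checked before coding.
UNRELIABLE = {"inaudible", "background noise"}


def annotations(text: str) -> list[str]:
    """Return bracketed annotations in order of appearance, lower-cased."""
    return [found.strip().lower() for found in ANNOTATION_RE.findall(text)]


def is_reliable(text: str) -> bool:
    """True when the passage carries none of the unreliable-audio annotations."""
    return not UNRELIABLE.intersection(annotations(text))


print(annotations("[laughs] I mean, [long pause] it depends."))
print(is_reliable("It costs [inaudible] a month."))
```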

Interview transcript examples by research program type

Transcript structure is not one-size-fits-all. What you annotate in a concept testing session differs meaningfully from what you code in a continuous discovery interview or a pricing sensitivity study. Across qualitative projects of any size, getting the annotation right from the start means your synthesis reflects the actual purpose of the research study, not a generic pass at whatever stood out.

Concept testing

Excerpt:

"I like the idea, but when I saw the price, I kind of stopped. I wasn't sure what I was actually getting for that. Like, is this a one-time thing, or is there a subscription? I'd probably want to try it first before committing to anything."

Codes applied:

Price hesitation, value ambiguity, trial preference, commitment barrier

Theme:

Across participants, hesitation appeared at the price point rather than at the concept itself. The concept generated genuine interest, but unclear value framing at the moment of price exposure created a consistent pause.

Key insight:

Concept appeal is strong, but conversion risk sits at the pricing page. Participants need clearer value framing before they are willing to commit.

Continuous discovery

Excerpt:

"Honestly, I just screenshot things and dump them into a Slack channel. There's no real system. Half the time, I forget I even saved it. I've started keeping a Notes app on my phone, but it's a mess. I'd have to scroll forever to find something from two weeks ago."

Codes applied:

Workaround behavior, information fragmentation, retrieval friction, low-tech coping mechanisms

Theme:

This participant's behavior mirrors a pattern that appears across continuous discovery sessions: users have developed personal workarounds for problems the product was designed to solve. That points to an adoption failure, not a missing feature.

Key insight:

Users are actively working around the core workflow, which signals that the current in-app experience is not meeting the organizational need for fast, searchable retrieval.

For teams transcribing interviews conducted across 20 or 50 parallel sessions, the formatting step compounds quickly. This is where AI-moderated depth interviews change the upstream dynamic: transcripts arrive pre-structured with speaker tags, timestamps, and initial thematic tags already applied, so researchers are editing and refining rather than building from a blank document after 40 sessions have landed.

Pricing research

Excerpt:

"Fifty dollars a month feels okay if I'm using it every day. But I don't use it every day. Some months, I barely touch it. I'd feel better if there were some kind of pause option, or if it scaled down when I wasn't active. Paying full price for a quiet month doesn't sit right."

Codes applied:

Usage variability, pricing model friction, fairness perception, flexibility preference, churn risk signal

Theme:

Participants did not reject the price in absolute terms. Resistance was tied to the fixed-cost structure in the context of irregular usage. The fairness perception around paying full price during low-activity periods was a consistent driver of churn consideration, even among participants who expressed overall satisfaction with the product.

Key insight:

Pricing resistance is not about the number itself. It is about perceived fairness during low-usage periods, which points to a retention risk that a usage-based or pause option could address.
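The excerpt, codes, theme pattern used in the examples above maps onto a simple record structure. The sketch below counts how many distinct participants each code appears for, which is one rough way to check a claim such as "across participants." The `CodedExcerpt` class, the `participants_per_code` helper, and all sample records are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CodedExcerpt:
    participant_id: str
    timestamp: str                      # HH:MM:SS of the quoted turn
    quote: str
    codes: list[str] = field(default_factory=list)


def participants_per_code(excerpts: list[CodedExcerpt]) -> Counter:
    """Count distinct participants per code, not raw mentions."""
    seen = {(e.participant_id, code) for e in excerpts for code in e.codes}
    return Counter(code for _, code in seen)


# Invented records, for illustration only.
excerpts = [
    CodedExcerpt("P01", "00:04:10", "When I saw the price, I kind of stopped.",
                 ["price hesitation", "value ambiguity"]),
    CodedExcerpt("P02", "00:05:02", "I'd want to try it first.",
                 ["price hesitation", "trial preference"]),
    CodedExcerpt("P02", "00:09:40", "That number made me pause.",
                 ["price hesitation"]),
    CodedExcerpt("P03", "00:03:15", "Is there a trial?",
                 ["trial preference"]),
]
code_counts = participants_per_code(excerpts)
print(code_counts.most_common())
```

Counting participants rather than mentions keeps one talkative participant from inflating a theme, and the stored timestamp keeps each quote traceable to its source.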

Verbatim, clean, and annotated transcripts: When to use each format

The transcript format question sits at the center of every qualitative interview workflow, and getting it wrong costs more than time. Choose the wrong format and you either lose the analytical depth you need for rigorous coding, or you hand stakeholders a wall of verbal clutter they cannot act on.

A verbatim transcript preserves every word exactly as spoken: filler words, false starts, repetitions, grammatical errors, and non-verbal sounds. A clean transcript removes that verbal clutter, lightly corrects grammar for readability, and presents the speech content in a form closer to polished prose. The meaning is retained; the texture of speech is smoothed. The two formats serve different uses of the same qualitative data: verbatim protects the participant's voice, while clean makes the analysis accessible to people who weren't in the room.

Use this table to choose the right format for each context:

| Situation | Format |
| --- | --- |
| Discourse analysis, academic research | Verbatim |
| Legal or compliance documentation | Verbatim |
| Stakeholders who distrust edited outputs | Verbatim |
| Stakeholder-ready reports and presentations | Clean |
| Synthesizing themes across many interviews | Clean |
| Analysis and coding (internal use) | Verbatim |
| Stakeholder-facing excerpts and highlight reels | Clean |

The most common enterprise practice is a hybrid: verbatim for coding and thematic analysis, clean for any excerpt that leaves the research team.

On nonverbal signals: the annotation key in the template above covers the core cases. The principle is straightforward. Tone, pauses, facial expressions, and visible objects all carry participant intent that words alone cannot represent. When a participant says "yeah, I think that works" while visibly frowning, the verbal transcript records agreement. The video context records doubt. Conveo's product architecture keeps every session available for review alongside its transcript, so researchers can interrogate moments where verbal and nonverbal signals diverge rather than relying on text that has already resolved the ambiguity in the wrong direction.

From transcript to insight

A structured transcript is not the end of the workflow. It is the starting point for faster qualitative data analysis.

The manual route most teams still use looks like this: audio recordings go to a transcription service, and raw transcripts come back. Analysts then spend hours cleaning and standardizing them, read through every session to identify initial codes, apply those codes in Word or Excel, group codes into themes, extract supporting quotes, and produce a stakeholder-ready report. Depending on interview volume, that sequence takes days to weeks. And when two analysts code the same conversation differently, the findings become harder to defend.
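One common way to quantify how far two analysts diverge is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The article does not prescribe this statistic; it is offered here as one option, and the sketch assumes each analyst assigned exactly one code per excerpt. The `cohens_kappa` helper and the sample labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two coders who each gave one label per item."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(labels_a)
    # Observed agreement: share of items where both coders chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each coder's label proportions, summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented labels for six excerpts coded independently by two analysts.
analyst_a = ["price", "price", "value", "trial", "price", "value"]
analyst_b = ["price", "value", "value", "trial", "price", "price"]
kappa = cohens_kappa(analyst_a, analyst_b)
print(round(kappa, 3))
```

A low value is a prompt to tighten code definitions before synthesis continues, not a verdict on either analyst.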


The structural value of a well-formatted transcript is that it compresses this process from the moment the session ends. Consistent speaker tags mean coding can begin without orientation. Timestamps let researchers locate and verify any finding without scrubbing through the full recording. Nonverbal annotations mean the researcher does not have to remember what a participant's tone revealed when they read the text three weeks later. Themes identified in week one are traceable to the same evidence as themes identified in week three.

Conveo builds this structure automatically: study design, participant recruitment, AI-moderated video interviewing, structured transcription, and thematic synthesis run within one platform. Over 400 enterprise teams, including Google, Reddit, and Bosch, rely on Conveo for qualitative research at this level of integration. Teams report cutting research timelines from 6 weeks to 3 days, not by compressing the rigor of analysis, but by removing the manual steps between recording and insight.

"Within days, we had insights that would've taken a traditional agency a month."

Head of Customer Insights, JDE Peet’s

Frequently Asked Questions

What should a qualitative interview transcript include?

What is the difference between verbatim and clean interview transcripts?

How do I structure a qualitative interview transcript for thematic analysis?

When should I start coding a qualitative interview transcript?

What is an example of a coded qualitative interview transcript?