TL;DR

Messaging testing is a qualitative research methodology for evaluating how marketing language resonates with a target audience: whether a message is understood, believed, relevant, and distinct from what competitors say.

Messaging testing is not A/B testing. A/B testing measures which variant performs better; message testing research diagnoses why language lands or fails, so teams can fix it before committing to media spend.

Effective messaging testing covers four dimensions: clarity (does the audience understand it?), relevance (does it connect to a real need?), value (does it feel worth something?), and differentiation (does it say something competitors don't?).

Surveys alone are insufficient. Closed-ended responses confirm what consumers say but miss the reasoning behind it. Open-ended conversations surface the hesitations, reframings, and emotional signals that explain whether a message will hold up in the market.

AI-led qualitative interviews have changed the speed and cost profile of messaging testing. Teams report moving from a six-to-ten-week agency engagement to findings in under a week, without sacrificing the depth that makes the output credible.

The brief was locked by Thursday. The consumer research came back the following week.

That sequence plays out constantly across brand and marketing teams, and the consequences are rarely catastrophic enough to trace back to the decision.

The problem is structural, not a failure of effort. Messaging is developed by people who already believe in the product, in language shaped by internal priorities rather than the words customers use to describe their own pain points and needs.

This article covers what messaging testing actually requires, where common approaches fall short, and how qualitative interviewing closes the gap between speed and depth for teams who need a defensible answer before the brief locks.

Why Messaging Testing Matters: Protecting Your Marketing Campaigns Before Launch

Messaging that works for the people who built the product often doesn't work for the people who need to buy it.

The gap between internal conviction and customer reality is where marketing budgets go to waste. When untested messaging ships without structured consumer validation, the risks are concrete: copy that confuses rather than converts, value propositions that fail to differentiate from competitors in the eyes of the actual buyer, and product launches where the core claim lands flat because it was written in the language of the category, not the customer.

The further up the organization messaging decisions sit, the more distance there is from the lived experience of the target market. Marketing leaders bring pattern recognition and strategic instinct, but they also bring assumptions that are hard to challenge without structured external evidence. Internal reviews, no matter how rigorous, cannot replicate what happens when a potential customer encounters a cold message, with no context and no goodwill to extend.

Done well, consumer validation does two things:

It surfaces how customers actually describe their own problems and pain points, which is source material for sharper copy and more effective messaging.

It identifies where existing messaging variants create confusion, skepticism, or indifference before those reactions happen at scale, protecting future marketing campaigns from foreseeable failure.

"Every marketing decision we make now starts with what consumers actually told us on Conveo."

CMI Manager, Edgar & Cooper

Qualitative Market Research for Messaging

Qualitative messaging testing is the method designed to answer the question that neither A/B testing nor surveys can reliably answer: not which message performed better, but why it did or didn't land.

It works by putting real consumers in conversation with the message itself. Through discussion guides, in-depth interviews (IDIs), focus groups, or AI-led video interviews, moderators and researchers probe four dimensions that numeric outputs cannot capture:

Clarity: Does the audience actually understand what is being offered?

Relevance: Does the value proposition connect to something they genuinely care about?

Value: Does the message make the product benefits feel worth paying attention to?

Differentiation: Does it explain, credibly, why this offer is meaningfully different from alternatives?

Qualitative methods like depth interviews and concept testing are especially well-suited to messaging work because they capture how audiences interpret language, not just whether they respond to it. Research respondents can articulate hesitations, reframe claims in their own words, and reveal the emotional logic behind their reactions, none of which survey responses reliably surface.

Research suggests that 12 to 17 participants from a well-screened, homogeneous target audience are sufficient to surface the primary patterns. Large samples are not the point. The depth of audience feedback is.

Of the four dimensions, differentiation is the easiest to misjudge from the inside. The practical test is whether removing your brand name from the message leaves anything that specifically points back to you. If another company's name slots in cleanly, the message is doing category education rather than competitive positioning. That may have value at different stages of a buyer's journey, but it won't move someone who is already evaluating alternatives.

| Dimension | What It Tests | What Failure Looks Like |
| --- | --- | --- |
| Differentiation | Whether the message creates a clear competitive position | Buyers say it sounds like every other brand in the category |
| Clarity | Whether the core offer is understood on first read | Buyers can't explain back what the product does or who it's for |
| Relevance | Whether the message speaks to a problem the buyer actively has | Buyers find it interesting but don't connect it to their situation |
| Value | Whether the promised outcome is worth acting on | Buyers understand the message but feel no urgency to respond |

How to Run a Messaging Test: A 6-Step Framework

Step 1: Define the Research Objective and Decision It Supports

Start with the decision, not the message. Before writing a single discussion guide question, the team should name the specific business decision this messaging test is feeding: finalizing the headline before creative briefing begins, choosing between three positioning variants before the investor deck is locked, or validating a campaign concept before media spend is committed.

That decision determines everything downstream: which participant profile to recruit, how many different messages to test in a single session, and how deep the AI interviewer needs to probe before the data collected is conclusive. Teams that enter a messaging test with a broad objective like "understand how customers feel about our brand" routinely get findings that can't drive a room to an informed decision. Specific objectives produce specific, actionable outputs.

Step 2: Identify and Screen Your Participants

Message testing research produces reliable signals only when the people responding to it match the people you're actually trying to reach. Testing a value proposition with the wrong audience doesn't just waste fieldwork time: it produces directional data that can actively mislead the brief.

Define your screener in concrete terms: industry, role, seniority, and direct experience with the problem your product or service solves. Consider mapping participants to your buyer persona before recruitment; the closer the match to your ideal customer profile, the more directly the findings will apply to your real target customers. Where possible, exclude participants who already know your brand to keep familiarity bias out of the results.
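One way to keep a screener concrete is to write it down as explicit pass/fail criteria before recruitment starts. The sketch below is illustrative only: the `Candidate` and `Screener` classes, their field names, and the sample values are hypothetical, not part of any recruitment tool. It assumes each candidate has already answered the screening questions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    industry: str
    role: str
    seniority: str
    has_problem_experience: bool  # direct experience with the problem
    knows_brand: bool             # already familiar with the brand under test


@dataclass(frozen=True)
class Screener:
    industries: frozenset
    roles: frozenset
    seniorities: frozenset
    exclude_brand_aware: bool = True  # keeps familiarity bias out of results

    def qualifies(self, c: Candidate) -> bool:
        """Return True only if the candidate meets every criterion."""
        if c.industry not in self.industries:
            return False
        if c.role not in self.roles:
            return False
        if c.seniority not in self.seniorities:
            return False
        if not c.has_problem_experience:
            return False
        if self.exclude_brand_aware and c.knows_brand:
            return False
        return True


# Hypothetical criteria and candidate pool, for illustration only.
screener = Screener(
    industries=frozenset({"retail", "cpg"}),
    roles=frozenset({"brand manager", "insights lead"}),
    seniorities=frozenset({"manager", "director"}),
)

pool = [
    Candidate("retail", "brand manager", "director", True, False),
    Candidate("retail", "brand manager", "director", True, True),   # knows the brand
    Candidate("finance", "insights lead", "manager", True, False),  # wrong industry
    Candidate("cpg", "insights lead", "manager", False, False),     # no problem experience
]

qualified = [c for c in pool if screener.qualifies(c)]
print(len(qualified))  # 1
```

Writing the criteria as all-or-nothing checks makes it obvious when a screener is too loose (almost everyone passes) or too tight (no one does) before fieldwork begins.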

Step 3: Design the Discussion Guide

A strong discussion guide for messaging testing is not a script. It is a structure with probing latitude: enough shape to keep sessions consistent across participants, enough flexibility to follow the moments that matter.

Build your guide in this sequence:

Warm-up questions to establish the participant's context before any stimulus is introduced

Stimulus exposure to present the message, headline, or value proposition being tested, whether that is several versions of the same concept or entirely different messages

Open-ended comprehension questions to surface what participants actually understood

Relevance and differentiation probes to assess whether the message speaks to their situation and effectively communicates a distinct benefit

Overall reaction question to capture an unprimed, holistic response

Branching probes for moments of confusion or strong positive reaction

That last element is where most guides fall short. When a participant hesitates, misreads a claim, or lights up at a specific phrase, a rigid script moves on. A well-designed guide with branching probes follows them in. Those moments, when a participant says "I'm not sure what that means" or "that's exactly my problem", carry more signal than any rating scale.
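The guide structure described above, fixed stages plus optional branching probes, can be sketched as a small data structure. This is a simplified illustration, not how any particular interviewing tool works: the `Stage` class, the trigger phrases, and the keyword matching are all assumptions. A human or AI moderator would judge hesitation and enthusiasm far more flexibly than a substring check.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    name: str
    question: str
    # Maps a trigger phrase (hypothetical, matched case-insensitively)
    # to the follow-up probe asked when an answer contains that phrase.
    probes: dict = field(default_factory=dict)


GUIDE = [
    Stage("warm-up", "Walk me through the last time you chose a product in this category."),
    Stage(
        "comprehension",
        "What did you take that message to be about, in your own words?",
        probes={
            "not sure": "Which part were you unsure about?",
            "exactly my problem": "Tell me more about how that problem shows up for you.",
        },
    ),
    Stage("overall reaction", "Overall, how did the message land for you?"),
]


def follow_ups(stage: Stage, answer: str) -> list:
    """Return the probes whose trigger phrase appears in the answer."""
    text = answer.lower()
    return [probe for trigger, probe in stage.probes.items() if trigger in text]


print(follow_ups(GUIDE[1], "Honestly, I'm not sure what that means."))
# ['Which part were you unsure about?']
```

The point of the structure is that every session asks the same core questions in the same order, while the probes fire only when a participant gives the moderator a reason to dig.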

Step 4: Run the Interviews

Depth interviews are the gold standard for messaging research because they create space for real probing. A session running 30 to 45 minutes gives a skilled interviewer, or a well-configured AI interviewer, enough room to follow hesitation, test comprehension by paraphrasing responses back, and push on reactions that feel polished or rehearsed rather than genuine.

Focus groups can work at the exploratory stage of a messaging project, particularly when the goal is to understand how target customers talk about a category before any copy is written. But for evaluating how specific marketing messages perform against the four core dimensions, individual depth interviews produce cleaner signals. Group dynamics introduce social influence that suppresses the honest, individual reactions that predict how a message behaves in the wild.

Whether sessions are human-led or AI-led, the moderator's job is the same: listen for what participants do not say as much as what they do.

Step 5: Analyze and Synthesize

Thematic coding is the analytical engine of a messaging test. The goal is not to average impressions across participants but to identify structural patterns: where comprehension breaks down consistently, how participants rephrase the message in their own words, and which objections surface repeatedly against your differentiation claims.

Work through four analytical passes in sequence:

Comprehension gaps: Where did participants misread or reframe the core message?

Message mining: What language did participants use when restating the message positively? That phrasing is often stronger than what you wrote, and represents customer preferences expressed without prompting.

Objection mapping: Which differentiation claims triggered skepticism, and from which segments?

Resonance language: What words and phrases appeared consistently in strong positive reactions?
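Once excerpts have been coded by an analyst, the four passes above amount to asking which codes recur across participants within each pass. The sketch below shows that tallying step only. The excerpt data, pass labels, and the two-participant threshold are hypothetical, and the coding itself remains a judgment call that this code does not attempt.

```python
from collections import defaultdict

# Hypothetical coded excerpts: (participant_id, analytical_pass, code)
CODED = [
    ("p01", "comprehension_gap", "misread 'platform' as 'agency'"),
    ("p02", "comprehension_gap", "misread 'platform' as 'agency'"),
    ("p03", "objection", "doubts speed claim"),
    ("p01", "objection", "doubts speed claim"),
    ("p02", "resonance", "'in days, not weeks'"),
    ("p03", "resonance", "'in days, not weeks'"),
    ("p04", "resonance", "'in days, not weeks'"),
    ("p04", "message_mining", "'answers before the brief locks'"),
]


def recurring_codes(coded, min_participants=2):
    """Group codes by pass, keeping those raised by enough distinct participants."""
    participants = defaultdict(set)
    for pid, analytical_pass, code in coded:
        participants[(analytical_pass, code)].add(pid)

    result = defaultdict(list)
    for (analytical_pass, code), pids in participants.items():
        if len(pids) >= min_participants:
            result[analytical_pass].append((code, len(pids)))

    # Most widely shared codes first within each pass.
    for codes in result.values():
        codes.sort(key=lambda item: item[1], reverse=True)
    return dict(result)


patterns = recurring_codes(CODED)
for analytical_pass, codes in patterns.items():
    print(analytical_pass, codes)
```

Counting distinct participants rather than raw mentions keeps one talkative respondent from looking like a pattern. Codes below the threshold are not discarded in practice; they simply need a closer read before they are reported as a theme.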

For teams running messaging research alongside quantitative research methods, this qualitative layer provides the why behind the quantitative data. Where quantitative methods tell you how many people responded positively, qualitative analysis explains what drove that response, giving decision-makers a more complete picture before they commit to a direction.

Step 6: Deliver Stakeholder-Ready Findings

Structure findings around the four dimensions your messaging test was designed to evaluate: clarity, relevance, value, and differentiation. For each dimension, lead with a conclusion, then support it with direct consumer quotes, paraphrased language, and, where available, video or audio clips from the sessions themselves.

Marketing leaders and senior stakeholders who hear a participant's tone shift when they say "I'm not sure what that actually means" are more likely to act on a comprehension finding than stakeholders who read a bullet point saying "clarity was low." The evidence format matters as much as the finding itself. Conveo's highlight reels and clip-level traceability make this straightforward to produce without building a 40-slide deck from scratch.

Ready to run a messaging test? → See how Conveo teams move from brief to findings in days

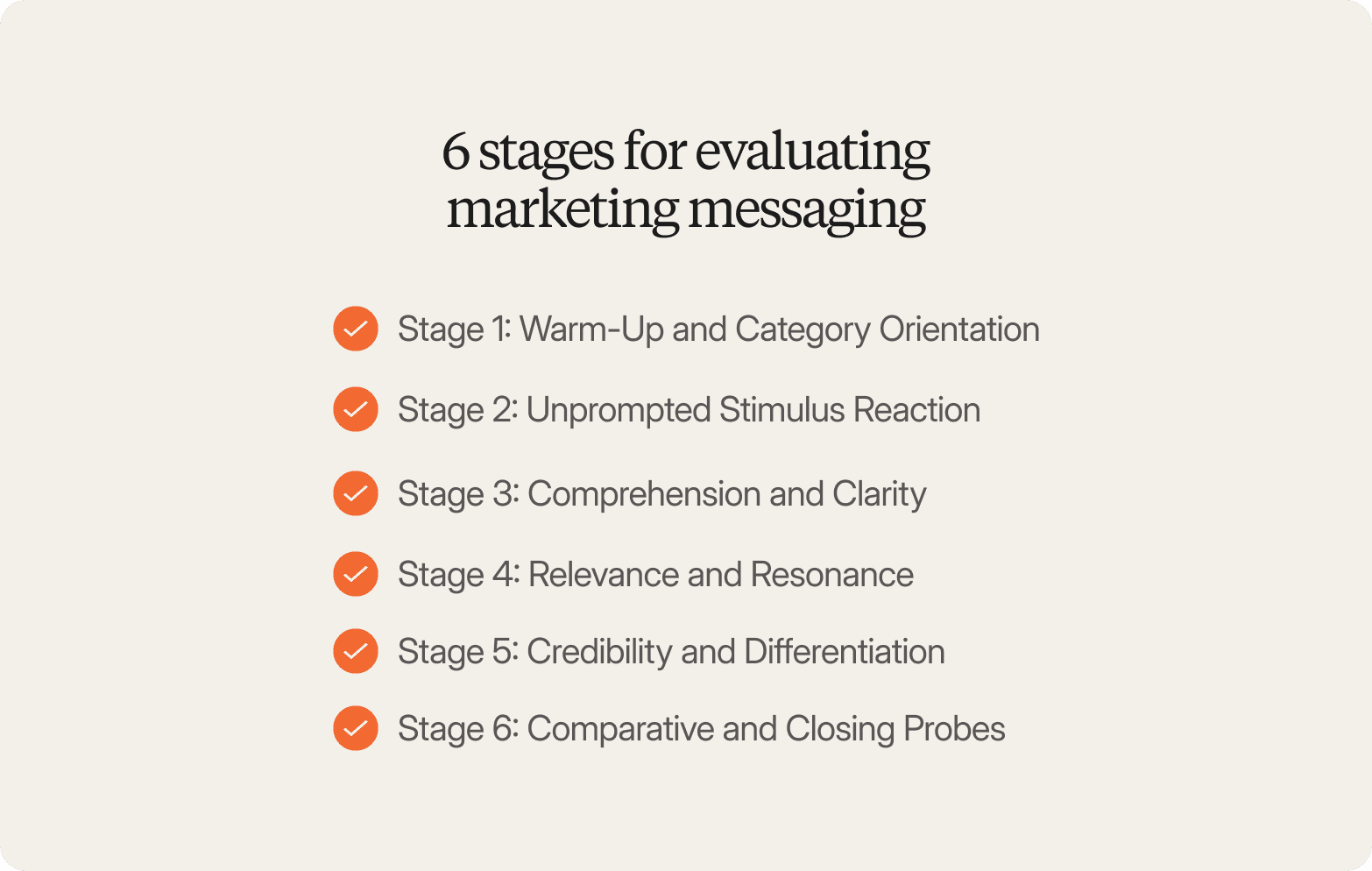

6 Stages for Evaluating Marketing Messaging

The question bank below is organized by discussion guide stage because sequencing matters. Strong messaging testing questions are open-ended, stage-appropriate, and designed to let participants lead rather than confirm what the team already believes.

Stage 1: Warm-Up and Category Orientation

These questions establish context and surface the language participants already use before any brand or messaging stimulus is introduced. Their answers here become the baseline against which you measure whether your message actually shifts perception and aligns with how customers naturally think about the category.

Walk me through the last time you had to choose between [category] options. What were you weighing up?

When you think about what makes a product or service worth paying more for in this space, what comes to mind?

What would have to be true about a brand in this space for you to trust it straight away?

How do you currently describe [category problem] to colleagues or family?

Stage 2: Unprompted Stimulus Reaction

Participants see or hear the message for the first time. Questions here should capture immediate, unfiltered audience feedback before any probing shapes their thinking.

What's the first thing that went through your mind when you read that?

What did you take that message to be about, in your own words?

What stood out to you, if anything?

Was there anything that felt unclear or that you had to read twice?

Stage 3: Comprehension and Clarity

Once the initial reaction is captured, probe whether the message was understood as intended. Misreads at this stage are diagnostic: they tell you where the language is working against you and where product messaging needs to be simplified.

If you had to explain that message to a colleague in one sentence, what would you say?

What do you think the brand is claiming to do for you?

Was there anything you weren't sure about?

What do you think they mean by [specific phrase]?

Stage 4: Relevance and Resonance

This stage tests whether the message connects to real needs and whether messaging resonates with the intended audience. Participants who understand a message clearly can still find it irrelevant. These questions separate comprehension from resonance.

Does this feel like it was written for someone like you? Why or why not?

How well does this match the way you actually think about [problem]?

Is there anything here that would make you want to find out more?

What would make this message feel more relevant to your situation?

Stage 5: Credibility and Differentiation

Enterprise buyers in particular scrutinize whether marketing communications are believable and distinct. These questions surface the skepticism your team needs to hear before the campaign launches, and identify which claims need supporting evidence to land with potential customers.

How believable is this claim, on first read?

What would you need to see to feel confident that this is true?

Does this sound like something you've heard before, or does it feel different?

Which part of this, if any, would you push back on in a meeting?

Stage 6: Comparative and Closing Probes

Used when testing different versions of a message or wrapping up. These questions help prioritize and capture anything the guide may have missed, and are especially useful when the goal is to evaluate messages side by side before selecting a direction for marketing strategies and campaigns.

If you had to choose between Message A and Message B for a brand you were considering, which would move you further along and why?

Is there anything important to you about [category] that none of these messages addressed?

What one thing would you change about the message that would make it land better for people like you?

Over the past three years, AI-led qualitative interviewing has materially changed what messaging research can deliver at enterprise scale. Participants receive a link, complete a video interview on their own schedule, and are probed adaptively based on what they actually say, not a rigid script. Sessions run in parallel rather than against a moderator's calendar, which means a team can move from screened recruitment to thematic analysis in days rather than the six to ten weeks a traditional agency engagement requires. For brand and insights teams who need to test messaging before a brief locks, not after, this changes what is operationally realistic, and what gets skipped because the timeline doesn't allow it.

Why Marketing Leaders at Enterprise Companies Use Conveo

Conveo is a video-first AI qualitative research platform built for the kind of messaging testing that brand and insights teams at enterprise companies actually need: real consumer conversations, analyzed and delivered in days, with outputs stakeholders can trace back to source. The four reasons enterprise teams across various industries choose Conveo for messaging work are specific, and each connects directly to where standard approaches break down.

Real Participant Conversations, Not Synthetic Responses

Conveo's AI interviewer conducts video interviews with vetted, screened participants, not synthetic personas or avatar-generated responses. For messaging testing, where the entire research objective is authentic emotional reaction, this is not a minor distinction. Participants can be heard and seen reacting to a headline or value proposition in real time. A CMI director can show a stakeholder the exact moment when a participant said, "I'd have to think about what that actually means," rather than just report it as a bullet point.

This level of traceability matters especially when message testing results need to influence executive-level decisions or shape positioning for a major product launch.

From Study Setup to Stakeholder-Ready Findings in Days, Not Weeks

Teams go from a business objective or research brief to a live study in approximately 30 minutes, run 30 to 100 screened interviews in parallel, and receive thematic analysis with traceable quotes within three to five days. Enterprise teams report compressing research timelines from six to ten weeks with traditional agency fieldwork to a matter of days, without sacrificing the qualitative depth that makes findings defensible to stakeholders.

That compression matters most when messaging work has to fit campaign timelines, product release schedules, or board-level reviews. In those situations, findings that arrive after the brief locks have no value, while findings that arrive before it can still change decisions.

| | Traditional Agency Qual | Conveo |
| --- | --- | --- |
| Timeline | 6–10 weeks end-to-end | 3–5 days to thematic analysis |

Multimodal Analysis That Captures What Transcripts Miss

Conveo's analysis layer blends speech, tone, facial cues, and on-screen context, a form of advanced analytics that goes beyond what text-based platforms can process. When a participant's tone shifts in response to a pricing claim or they show genuine confusion at a tagline, that signal is captured and coded. Survey platforms and text-only interview approaches miss this layer entirely. For messaging testing, where the gap between what someone says and how they actually react is often where the most important insight lives, this distinction is the difference between a finding and a guess.

The result is a more complete picture of how your marketing messages perform with real audiences, including the non-verbal signals that predict whether a message will drive conversion rates or fall flat.

An Insight Library That Compounds Across Messaging Studies

Every messaging test run on Conveo feeds into a searchable, secure insight library protected by SOC 2 certification and regional data hosting. Consumer language that resonated during a previous product launch will be discoverable when the next positioning review begins. Teams stop losing findings to decks and start building a compounding record of what their target customers actually respond to, across campaigns, markets, and time.

For teams with ongoing messaging research needs (product launches, campaign validation, annual brand tracking, and multi-market positioning), this means each study builds on the last. Customer preferences, objections, and resonant language from earlier work become inputs to new messages, rather than being buried in a folder somewhere.

Conveo is the right fit for teams running ongoing messaging research across different stages of the product and campaign lifecycle. It is not designed for one-off, ad hoc studies that have no internal research owner and no recurring need for insights.

See how teams run qualitative research with Conveo → Book a demo

Frequently Asked Questions

What Is Message Testing?

What Is the Purpose of Message Testing?

How Do You Conduct Message Testing?

What Is the Difference Between Message Testing and A/B Testing?

What Is the Difference Between Message Testing and Focus Groups?

What Are the Key Metrics Evaluated in Message Testing?