TL;DR

Qualitative research automation is no longer an emerging capability; it is an operational decision that research teams at enterprise organizations are being asked to make now.

This article covers:

Where manual qualitative workflows break down: the structural bottlenecks that limit study volume and prevent human insights from reaching decisions in time

Three automation models and how to choose between them: matched to team maturity, research methods, and organizational readiness

The governance framework Research Ops Managers need: SOC 2, GDPR, data residency, SSO, and what procurement actually requires before a platform clears approval

How to build the internal business case: the evidence, framing, and stakeholder language that moves a pilot into a signed contract

Written for teams that have moved past "should we automate qualitative research?" and are now working through how to do it responsibly.

Qualitative research automation is a workflow decision already in motion. Research operations managers are being asked to modernize the way qualitative work is done. The question has shifted from whether to automate to how to do it in a way that earns stakeholder trust and clears compliance reviews.

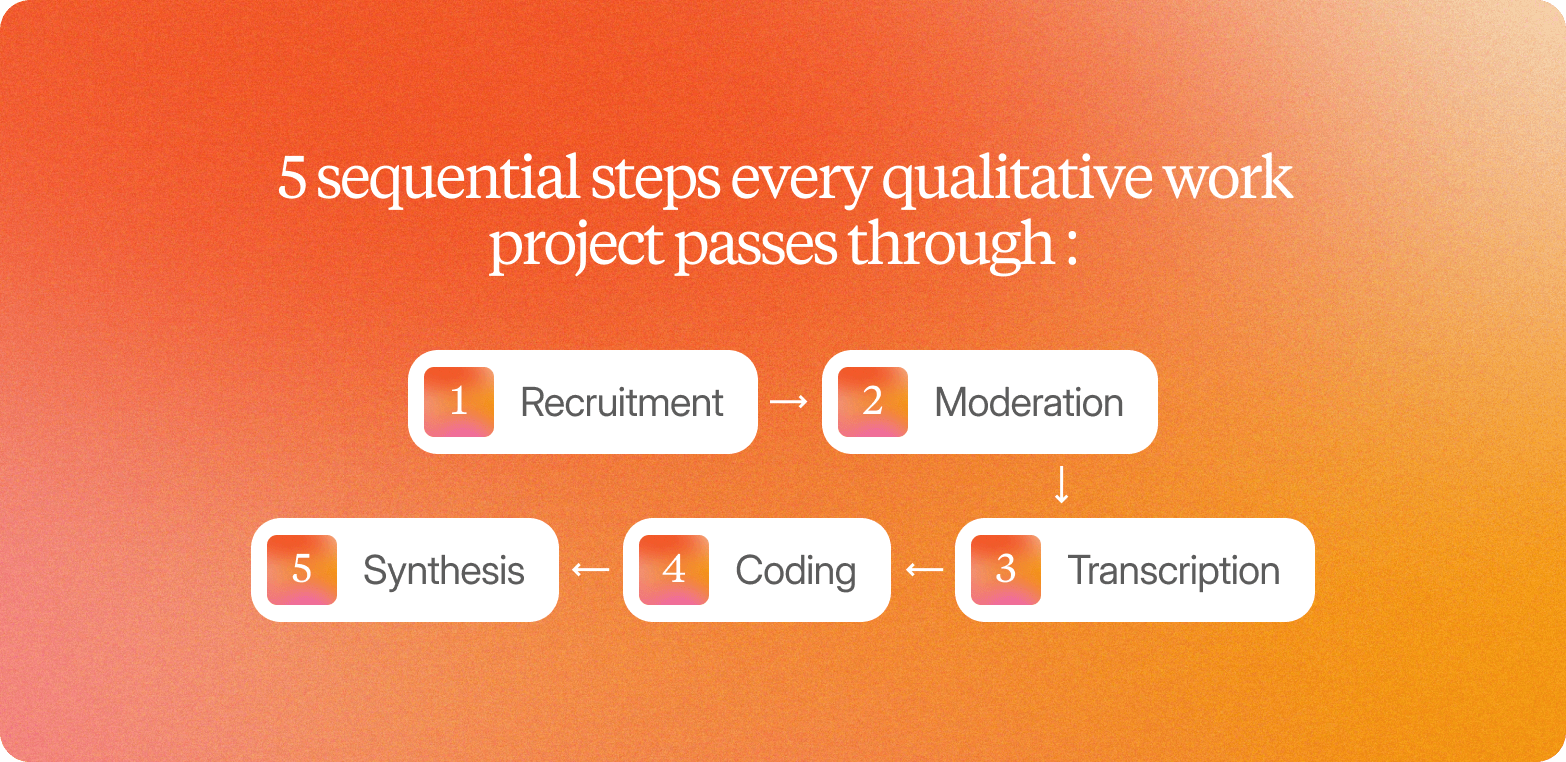

The timeline problem is structural. Traditional qualitative research requires manual coordination across at least five stages: recruitment, moderation, transcription, coding, and synthesis. Combined, these produce cycles of six to ten weeks from brief to findings. Against two-week product sprints and fixed campaign go-live dates, that pace is a genuine liability.

The consequence is a missed decision. By the time the research is back, the decision has usually been made. The concept gets approved based on internal consensus rather than customer feedback. The campaign launches on instinct. Research that arrives after the decision window closes confirms what the team already did; it doesn't tell them what to do next.

Automated qualitative research changes the economics of that cycle. When recruitment, interviewing, transcription, and analysis run in parallel rather than in sequence, the six-to-ten-week timeline compresses. Studies that once required agency coordination can return structured, traceable findings in days.

This article is not a platform comparison guide; good resources already exist for that (see Conveo's AI tools comparison). It is for teams that have moved past vendor selection and are now designing the actual automation workflow.

Where manual qualitative workflows break down

Every qualitative research project passes through five sequential steps: recruitment, moderation, transcription, coding, and synthesis. Each one adds time. Because they run in sequence rather than in parallel, the delays compound.

Recruitment

Consumes two to three weeks before a single interview takes place: screening participants, scheduling sessions, filtering fraudulent respondents, and managing incentives across time zones.

Moderation

Caps throughput. A skilled human moderator can manage three to five sessions per day, so with one moderator a 30-person study takes at least a week of fieldwork, before accounting for no-shows and rescheduling.
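The throughput ceiling is simple arithmetic. The Python sketch below makes it explicit under stated assumptions: the three-to-five sessions per day quoted above, one moderator by default, and no no-shows or rescheduling. `fieldwork_days` is a hypothetical helper for illustration, not a feature of any platform.

```python
import math


def fieldwork_days(participants: int, sessions_per_day: int, moderators: int = 1) -> int:
    """Minimum working days of live moderation, ignoring no-shows and rescheduling."""
    daily_capacity = sessions_per_day * moderators
    # Partial days still consume a day on the calendar, so round up.
    return math.ceil(participants / daily_capacity)


# One moderator at the low and high ends of the 3-5 sessions/day range.
best_case = fieldwork_days(30, sessions_per_day=5)
worst_case = fieldwork_days(30, sessions_per_day=3)
print(f"30 interviews: {best_case}-{worst_case} working days of fieldwork")
```

Six to ten working days is between one and two working weeks before any no-shows. Doubling the moderators halves the count, and that staffing dependency is what asynchronous interviewing removes.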

Transcription

Is slower than most teams anticipate. Manual transcription requires four to six hours of work per hour of recorded interview. Multi-language studies add further complexity: specialized vendors are required, and 30 sessions can take weeks to transcribe when files are submitted in batches.
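The four-to-six-hours rule turns into a sizeable workload quickly. A minimal sketch, assuming every session yields exactly one hour of recorded audio (a hypothetical figure; real session lengths vary), a single-language study, and one transcriber working 40-hour weeks:

```python
def transcription_hours(sessions: int, audio_hours_per_session: float,
                        effort_low: float = 4.0, effort_high: float = 6.0) -> tuple[float, float]:
    """Range of manual transcription effort in working hours (4-6 h per audio hour)."""
    audio_hours = sessions * audio_hours_per_session
    return audio_hours * effort_low, audio_hours * effort_high


# Assumed: 30 sessions of one hour each, transcribed by hand.
low, high = transcription_hours(30, audio_hours_per_session=1.0)
print(f"{low:.0f}-{high:.0f} hours of transcription work")
print(f"{low / 40:.1f}-{high / 40:.1f} weeks for one person at 40 hours per week")
```

Under these assumptions, 30 sessions mean 120 to 180 hours of work, or three to four and a half weeks for a single transcriber, before any multi-language handling.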

Coding

Is where traditional qualitative research stalls. Thematic analysis of 20 or more interview transcripts can take one to two weeks for a single analyst. The work requires reading every transcript, developing a coding frame, applying it consistently (often across Excel spreadsheets or qualitative data analysis software), and reconciling disagreements. It is a time-consuming, systematic process whose effort grows linearly with study volume.

Synthesis

Is consistently underestimated. Turning coded themes into stakeholder-ready outputs requires building a narrative and editing findings for an audience that wasn't in the room. Add another week.

The cumulative result: six to ten weeks for traditional qualitative research, often longer for multi-market programs. Business decisions don't wait. When research timelines and decision timelines diverge this sharply, insights teams either skip qualitative work and rely on survey results that miss the "why," or run fewer studies and accept the gaps.
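Why the delays compound rather than overlap can be shown in a few lines: when stages run back to back, the total cycle is the sum of every stage. In this sketch, recruitment, coding, and synthesis use the ranges given above; moderation and transcription are assumed at one to two weeks each, because the text only says "at least a week" and "can take weeks". That the sum lands on six to ten weeks is a consistency check, not a measurement.

```python
# Stage durations in weeks as (low, high).
STAGES = {
    "recruitment": (2, 3),    # from the recruitment estimate above
    "moderation": (1, 2),     # assumed: "at least a week" of fieldwork
    "transcription": (1, 2),  # assumed: the text only says "can take weeks"
    "coding": (1, 2),         # from the coding estimate above
    "synthesis": (1, 1),      # "add another week"
}


def sequential_cycle(stages: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Stages that run back to back add up: total = sum of each stage's duration."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


low, high = sequential_cycle(STAGES)
print(f"Sequential cycle: {low}-{high} weeks")
```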

Platforms that conduct AI-moderated video interviews asynchronously, with participants completing sessions on their own schedule and transcription handled in real time across 50+ languages, remove two of the five bottlenecks (moderation and transcription) in a single step. The work that should stay with people is the work requiring human expertise: study design, critical thinking about findings, and synthesis in a business context.

Comparison table: Manual vs. automated qualitative workflows

The operational differences between manual and automated qualitative research workflows are most visible at the stage level. This is a workflow comparison, not a platform comparison. Readers looking for platform comparisons should use the linked comparison above.

| Workflow stage | Manual | Automated (end-to-end platform) |
|---|---|---|
| Recruitment and screening | 2–3 weeks: panel sourcing, screening, scheduling, incentive management | Hours: built-in recruitment, fraud filtering, and incentive handling |
| Conducting interviews | 3–5 interviews per day per moderator; scheduling overhead across time zones | 10 to 1,000+ asynchronous conversations; no scheduling dependency |
| Transcription | 4–6 hours per hour of audio data; multi-language requires specialized vendors | Real-time transcription in 50+ languages |
| Coding and synthesis | 1–2 weeks for thematic analysis of 20+ interviews | Hours for AI-assisted automated coding, with human oversight retained |
| Total cycle | 6–10 weeks end-to-end | Days from launch to stakeholder-ready output |

Note: automated column figures reflect capabilities achievable with an integrated end-to-end platform. Point solutions that handle only one stage will not produce these outcomes on their own.

The compression comes from removing repetitive tasks that don't require human judgment: chasing panel partners, waiting for transcripts, and manually applying a coding frame across dozens of files. When those steps are handled by the platform, experienced researchers spend their time on work that only they can do.

The 3 automation models: Which one fits your team?

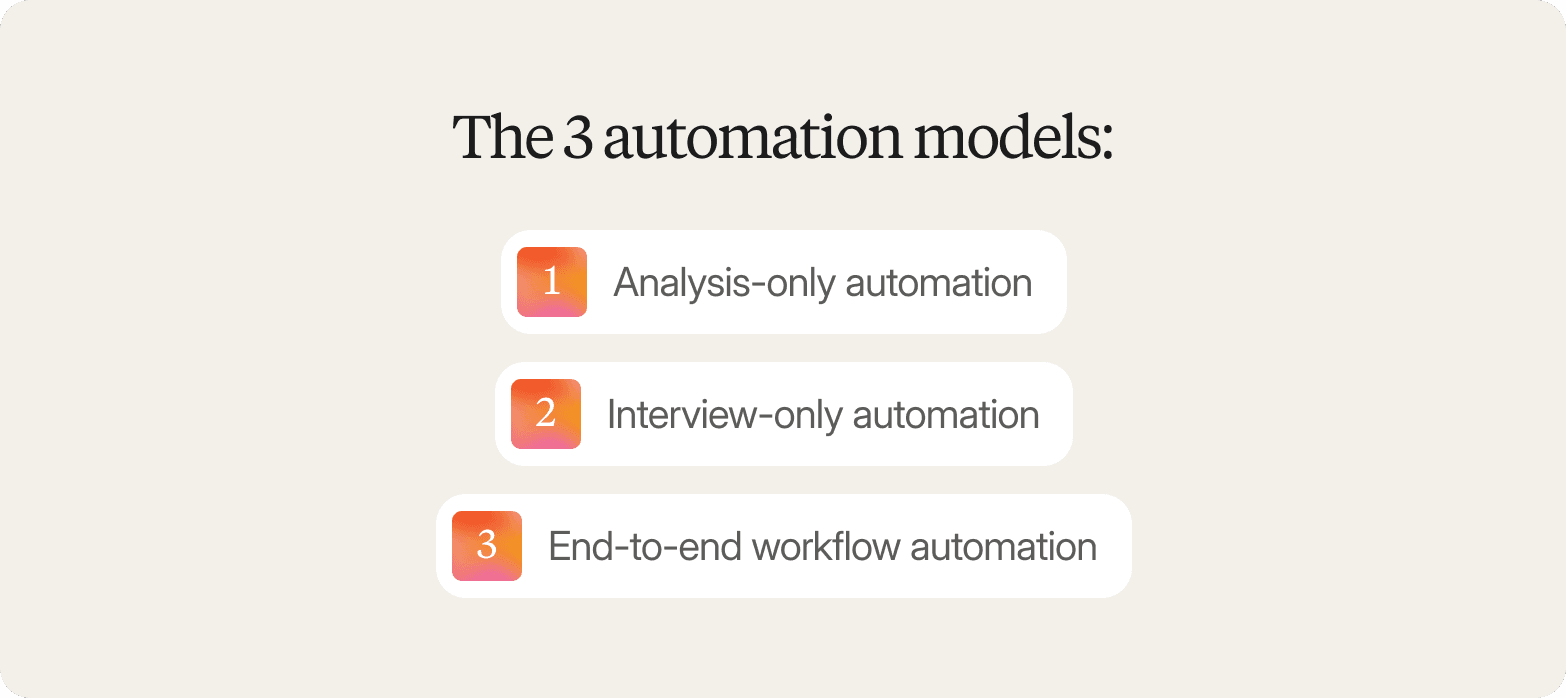

Qualitative research automation is not a single capability. There are three distinct operational models, and teams at different stages of maturity will benefit from different ones.

Analysis-only automation

The team continues to use existing methods for recruitment and interviewing, but replaces manual transcription, coding, and synthesis with automated tools. This is the best fit when the primary bottleneck is an analysis backlog, not data collection volume. Qualitative researchers with strong moderation capacity but an overflowing queue of interview transcripts will see real gains here. Teams whose core problem is data collection volume will not.

Interview-only automation

AI-moderated interviews replace live moderation, compressing data collection. Recruitment, qualitative data analysis, and reporting remain largely manual. This suits teams with established panel relationships, or organizations that need to prove AI moderation credibility before committing to broader workflow change. It doesn't resolve the full timeline: compressing collection from two weeks to three days and then spending another three weeks on manual analysis moves the bottleneck rather than removing it.

End-to-end workflow automation

Recruitment, AI-moderated interviewing, automated coding, and stakeholder-ready reporting are integrated in a single platform. The advantages of automated qualitative research are most fully realized here. Platforms built around end-to-end workflow integration, such as Conveo, connect recruitment, AI-moderated video interviews, and automated qualitative analysis in a single workflow, so teams can answer research questions and receive decision-ready findings in days rather than weeks.

The distinction matters: choosing end-to-end automation to solve an analysis-only problem is over-investment. Choosing analysis-only tooling when the real bottleneck is recruitment and moderation leaves the timeline problem intact.
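The fit guidance above reduces to a small decision rule. The sketch below is one simplified reading of it, not a vendor rule: the `Bottleneck` categories and `recommend_model` function are hypothetical, and "analysis" here bundles transcription, coding, and synthesis.

```python
from enum import Enum


class Bottleneck(Enum):
    RECRUITMENT = "recruitment"
    MODERATION = "moderation"
    ANALYSIS = "analysis"  # transcription, coding, and synthesis


def recommend_model(bottlenecks: set[Bottleneck]) -> str:
    """Map a team's binding constraints to one of the three automation models."""
    if not bottlenecks:
        return "none"
    if bottlenecks == {Bottleneck.ANALYSIS}:
        # Strong moderation capacity, overflowing transcript queue.
        return "analysis-only"
    if bottlenecks == {Bottleneck.MODERATION}:
        # Panels already established; live moderation is the constraint.
        return "interview-only"
    # Recruitment, or any mix spanning collection and analysis: automating
    # a single stage would only move the bottleneck, and only the
    # end-to-end model covers recruitment.
    return "end-to-end"


print(recommend_model({Bottleneck.MODERATION, Bottleneck.ANALYSIS}))
```

The last branch encodes the over- and under-investment warning: a single-stage tool is recommended only when that stage is the sole constraint.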

What no model replaces: study design, the human expertise needed to frame research questions, and the critical thinking required to interpret qualitative data in a business context. Automation is operational leverage, not a replacement for experienced researchers.

Automating qualitative interviews: AI-led data collection

The first thing enterprise stakeholders ask when AI-moderated interviews come up is not "how fast can we get results?" It is "Can I trust what comes back?" That question is the right one to start with.

The benefits of automating qualitative research interviews are clearest when you consider what the credibility argument actually requires. Conveo's AI interviewer conducts real video and voice conversations with real human participants: no avatars, no synthetic respondents. The video-first format means stakeholders can inspect the underlying data rather than accept an AI-generated summary on faith. When a CMI director presents findings to a skeptical C-suite, pulling up a 90-second clip of a real consumer explaining their hesitation is a different class of evidence than a bullet point in a deck.

"Conveo's video-first approach is a real differentiating methodological advantage. The ability to distill insights from reactions and not just hear answers adds context you simply can't get from transcript-only tools, or any other tool in the market for that matter."

Senior Marketing Research & Insights Manager, Google

Beyond credibility, there is a meaningful shift in capacity. Teams can run 10 to 1,000+ conversations simultaneously without adding moderators or coordination effort, processing large volumes of qualitative data that would take weeks to collect through traditional methods. Conveo supports this across 50+ languages with no separate translation workflow required.

What distinguishes AI-moderated interviews from glorified surveys is adaptive probing. Rather than a scripted sequence, the AI follows up based on what participants actually say, extracting deeper insights and contextual understanding that open-ended survey responses simply cannot deliver. The result is the conversational depth of qualitative work, delivered without the calendar overhead.

Teams report moving from six to ten weeks with traditional agency fieldwork to three to five days on comparable studies. AI-moderated interviews are not the right fit for every context; in-person immersion and physical product interaction still require human researchers. But they remove the operational ceiling that prevents insights teams from running qual continuously rather than periodically.

Automating data analysis: Transcription, coding, and synthesis

The analysis bottleneck in qualitative research is familiar to anyone who has run a study at scale. Manual transcription takes four to six hours for every hour of audio, and thematic analysis across 20 or more sessions can consume one to two weeks before synthesis even begins.

Automated qualitative analysis changes where that time goes, not how much rigor the output carries. Conveo transcribes every session in real time across 50+ languages using natural language processing and machine learning, eliminating per-interview lag as study volume grows. For multi-market studies, automated translation removes the dependency on specialist vendors; a study running simultaneously in German, Portuguese, and Korean no longer means three separate timelines.

On the coding side, AI-generated codes and AI-generated themes replace manual line-by-line qualitative content analysis. Rather than a researcher reading the entire dataset to identify recurring patterns, Conveo's analysis layer runs automated coding, clusters relevant data, and structures findings automatically. Content analysis that previously took weeks now takes hours. Human oversight is retained throughout: AI structures and accelerates the analysis process, but experienced researchers review, challenge, and apply human interpretation before findings are delivered. Subjective interpretations and business context remain the researcher's domain.

The traceability requirement is non-negotiable

Outputs must tie AI-generated themes to verbatim quotes, and verbatim quotes to source video clips. That chain, from finding to quote to video, is what makes automated qualitative research findings credible to procurement, legal, and executive stakeholders. Break it, and trust breaks with it.

This is the core failure mode of using general-purpose LLMs for qualitative data analysis. Pasting open-ended feedback or survey responses into ChatGPT produces plausible-looking summaries with no grounding in the underlying data, no audit trail, and no traceability. That is not a robust approach; it is synthesis without evidence, and it won't survive enterprise scrutiny.

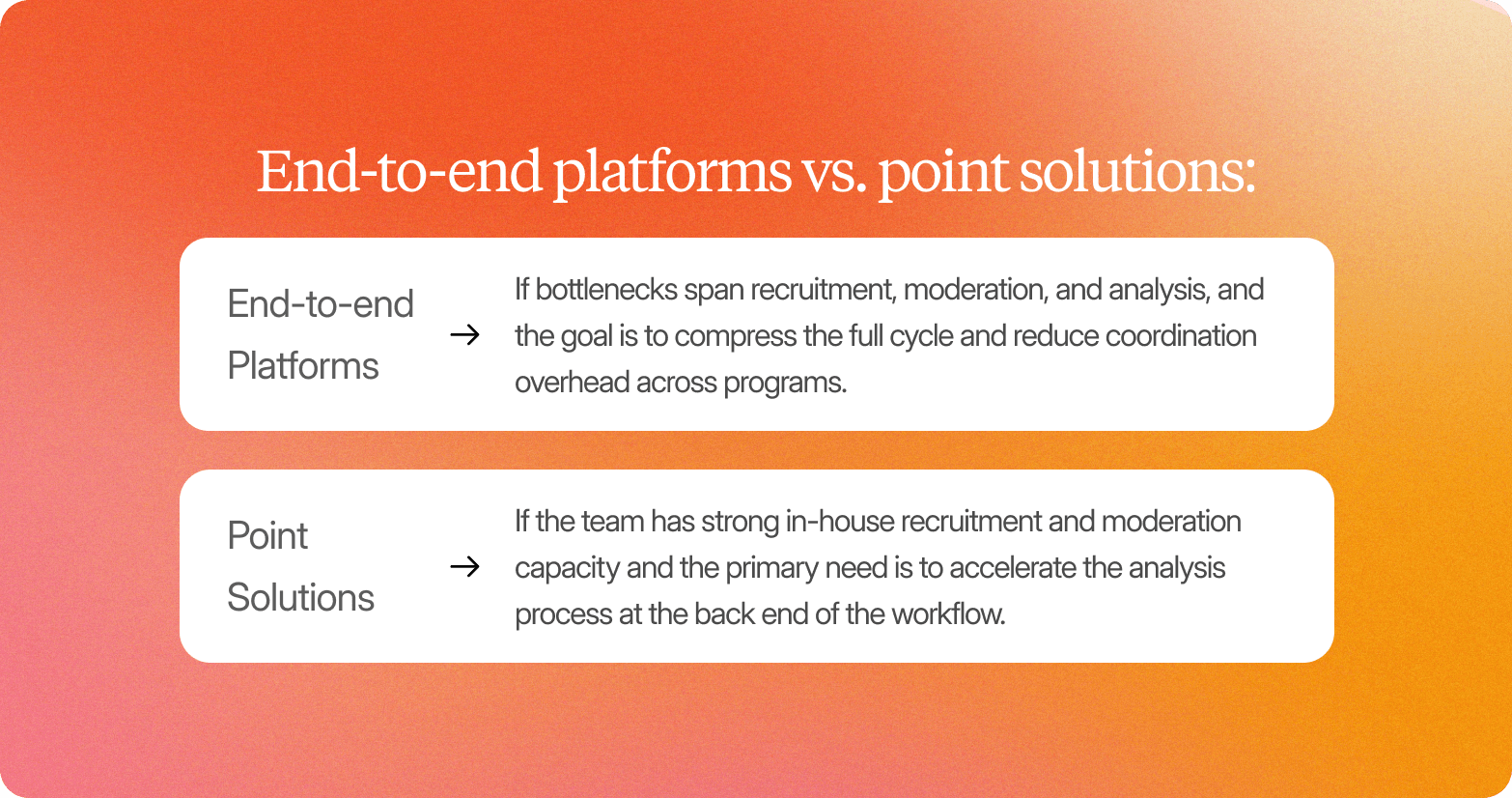

End-to-end platforms vs. point solutions: How to choose

Most enterprise research teams have accumulated their stack one problem at a time: an AI tool for transcription, a qualitative data analysis platform for coding, a survey tool for faster data collection. Each addition solved a real problem. The result is a workflow in which every handoff creates coordination overhead that falls on the research team.

Point solutions for enterprise qualitative research cover the full workflow only in fragments. Transcription platforms handle one stage, QDA platforms another, and survey tools a third. None of them connect by default; researchers spend time exporting files, reformatting data collected in different systems, and chasing status updates. The bottleneck moves rather than disappears. (For readers evaluating specific platforms, Conveo's AI tools comparison covers 12 options in detail.)

End-to-end platforms are designed around the workflow as a whole. Recruitment, AI-moderated video interviews, automated qualitative analysis, and stakeholder-ready reporting run as a single connected process. Conveo is built on this model. Research Ops Managers working across multiple studies can launch, field, and deliver findings without managing handoffs between systems or vendors. Every step runs inside a single governed environment with consistent data handling, audit trails, and access controls across business units.

Choose point solutions if the team has strong in-house recruitment and moderation capacity and the primary need is to accelerate back-end analysis.

Choose an end-to-end platform if bottlenecks span recruitment, moderation, and analysis, and the goal is to compress the full cycle and reduce coordination overhead across programs.

The governance framework: How to automate without losing credibility

Research Ops Managers introducing qualitative research automation face two distinct challenges before any platform can be deployed at scale: stakeholder trust and procurement clearance. Solving one without the other is not enough.

Stakeholder trust requires a complete evidence chain

Automated research outputs are only valuable if stakeholders trust them. Trust requires traceability: every finding links to a theme, every theme links to verbatim quotes, and every quote links back to the source interview, inspectable by video or transcript. The evidence chain is: finding → theme → verbatim → video clip. If any link is missing, the output cannot survive scrutiny.
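The evidence chain is a simple linked data structure, and "if any link is missing" is a check that can be automated. The sketch below is a hypothetical model for illustration (the class names, fields, and `broken_links` function are assumptions, not any platform's schema); the sample finding and quotes are invented.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class VideoClip:
    session_id: str  # hypothetical identifier of the source interview
    start_s: float
    end_s: float


@dataclass
class Verbatim:
    quote: str
    clip: Optional[VideoClip] = None  # None means the quote cannot be traced to video


@dataclass
class Theme:
    label: str
    verbatims: list[Verbatim] = field(default_factory=list)


@dataclass
class Finding:
    statement: str
    themes: list[Theme] = field(default_factory=list)


def broken_links(finding: Finding) -> list[str]:
    """Describe every missing link in finding -> theme -> verbatim -> video clip."""
    problems = []
    if not finding.themes:
        problems.append(f"finding '{finding.statement}' has no supporting theme")
    for theme in finding.themes:
        if not theme.verbatims:
            problems.append(f"theme '{theme.label}' has no verbatim quotes")
        for verbatim in theme.verbatims:
            if verbatim.clip is None:
                problems.append(f"quote '{verbatim.quote}' has no source clip")
    return problems


# Invented example: the second quote has lost its link to the source video.
clip = VideoClip(session_id="session-017", start_s=312.0, end_s=402.0)
finding = Finding(
    statement="Price is the main barrier to switching",
    themes=[
        Theme("Price sensitivity", [Verbatim("It's just too expensive for what it does.", clip)]),
        Theme("Switching effort", [Verbatim("Moving all my data over sounds painful.")]),
    ],
)
for problem in broken_links(finding):
    print(problem)
```

An empty result means every finding can be walked back to a participant on video; any entry is a finding that should not reach a stakeholder deck yet.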

Generic AI tools that generate summaries from qualitative data without source grounding present a specific credibility problem. When a stakeholder asks, "Where does this finding come from?" and the answer is a black-box model rather than a real participant conversation, trust breaks down. The question to ask of any qualitative research automation platform is direct: can my stakeholders trace this finding back to the participant who said it?

Conveo addresses this directly. Because every interview is conducted on video with real human participants (no avatars, no synthetic respondents), the underlying data is verifiable at every level. Findings link to clips. Clips link to full sessions. Sessions are inspectable by any stakeholder with appropriate access.

Procurement clearance is a gate, not a consideration

Before any platform reaches a research team's workflow, it must clear enterprise procurement. For Research Ops Managers, this means mapping specific requirements:

SOC 2 certification

Security and availability standards for enterprise vendor approval

GDPR compliance

Participant data handled in accordance with EU privacy regulations

EU data hosting

Allows European enterprises to meet data residency requirements

SSO and role-based access controls

Enterprise user management and audit trail documentation

Data retention and deletion policies

Alignment with internal data governance standards and ethical considerations around participant data

Many AI research platforms lack SOC 2 certification, GDPR compliance, or EU data hosting, disqualifying them from enterprise consideration regardless of feature set. Conveo is SOC 2 certified, GDPR-compliant, and offers EU regional data hosting. Research Ops Managers building the internal procurement case can use these credentials directly in security reviews.

Governance readiness checklist

Can stakeholders trace findings back to source interviews, verbatim quotes, and video clips?

Is the platform SOC 2 certified?

Does it offer GDPR compliance and EU data hosting?

Does it support SSO and role-based access controls?

Are participants real humans, verified through the platform's screening process?

Can human researchers review and refine AI-generated themes before delivery?
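Because procurement clearance is a gate, the checklist works best as pass/fail, with an unanswered item counted as a "no". A minimal sketch of that rule; `CHECKLIST`, `governance_gaps`, and `clears_gate` are hypothetical names, and the item wording is condensed from the list above.

```python
# Hypothetical encoding of the readiness checklist; item wording is condensed.
CHECKLIST = [
    "Findings trace to source interviews, verbatim quotes, and video clips",
    "SOC 2 certified",
    "GDPR compliance and EU data hosting",
    "SSO and role-based access controls",
    "Participants are real humans verified through screening",
    "Researchers can review and refine AI-generated themes before delivery",
]


def governance_gaps(answers: dict[str, bool]) -> list[str]:
    """Return every checklist item a vendor has not confirmed.

    Unanswered items count as gaps: procurement is a gate, so "unknown" is a "no".
    """
    return [item for item in CHECKLIST if not answers.get(item, False)]


def clears_gate(answers: dict[str, bool]) -> bool:
    """A vendor clears the gate only when no gaps remain."""
    return not governance_gaps(answers)


vendor = {item: True for item in CHECKLIST}
vendor["SOC 2 certified"] = False
print(governance_gaps(vendor))
print(clears_gate(vendor))
```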

Governance is not a box to tick after automation is selected. It is the framework that determines which automation is viable in the first place.

Who should automate qualitative research, and who shouldn't

Qualitative research automation is not the right fit for every team. Part of building a credible internal case is being honest about fit.

Where automation delivers the most value:

Research Ops teams supporting multiple simultaneous projects across markets or business units hit a structural ceiling with manual coordination. Scheduling, transcription, and thematic analysis across five concurrent studies is not a bandwidth problem that hiring solves quickly. Automation addresses the architecture, not just the workload.

Insights functions that have delivered findings after the decision window closed know this well: the qualitative work was rigorous, the research methods sound, and the stakeholders had already moved on. When the timing gap is structural rather than occasional, automation is the lever.

Teams shifting qualitative research in-house after years of agency-led fieldwork are also strong candidates. An end-to-end platform with confirmed compliance infrastructure can absorb work previously handled by agencies without adding a new category of vendor management. Research Ops Managers evaluating Conveo often start with a single-market pilot (20 to 50 interviews on a concept test or voice-of-customer study) to build stakeholder confidence before committing to full workflow adoption.

Where automation is not the right fit:

Single-use, one-off studies rarely justify the platform investment. Methodologies requiring in-person immersion or physical product interaction can't be replicated asynchronously. And teams where internal stakeholder trust in AI-driven outputs has not been established should sequence carefully: rushing to automate before the governance framework is in place creates credibility problems harder to recover from than the timeline problems automation was meant to solve.

For teams not yet ready for full workflow automation, starting with automated analysis is lower-risk: it builds familiarity with AI-generated codes and themes and generates internal credibility before moving to AI-moderated interviews.

Building the internal business case for qualitative research automation

The internal business case is not primarily about cost. The most compelling argument for procurement and leadership is not "we will save money on research"; it is "we will stop making decisions without real customer input."

Three audiences need different answers simultaneously:

Procurement and IT

Are asking whether the platform is enterprise-grade. The answer is documented: SOC 2 certification, GDPR compliance, EU data residency, and SSO support. The governance checklist above is the procurement appendix. Present it as infrastructure evidence, not a feature list.

Senior research and insights stakeholders

Are asking whether findings can be trusted and defended. The evidence chain answers this: every finding traces to a theme, every theme to a verbatim, every verbatim to a video clip. Human researchers remain in the loop. Participant authenticity is verifiable. This is the credibility architecture that makes AI-driven qualitative analysis defensible to a CMO or board.

Business sponsors and P&L owners

Are asking whether the investment delivers better outcomes. Teams report moving from 6–10 week cycle times to three to five days with end-to-end platforms. In programs that previously relied on agency qualitative delivery, teams report up to 70% lower research spend. Conveo's team works with Research Ops Managers through the business case process directly, including providing compliance documentation for enterprise procurement reviews.

A practical structure: document the current state (studies per year, average cycle time, studies missed due to timing), calculate the opportunity (how many additional studies become viable if the cycle compresses), list the governance credentials as a procurement appendix, and anchor the case on one pilot study with traceable outputs.
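The "calculate the opportunity" step can be made concrete by counting how many study requests would have delivered findings inside their decision windows at the current cycle time versus a compressed one. A minimal sketch: `viable_studies` is a hypothetical helper, and both the list of decision windows and the 8-week and 1-week cycle times are invented inputs to replace with your own request log.

```python
def viable_studies(decision_windows_weeks: list[float], cycle_weeks: float) -> int:
    """Count study requests whose findings would arrive before the decision is made."""
    return sum(1 for window in decision_windows_weeks if cycle_weeks <= window)


# Hypothetical: weeks between each study request and its decision, over one year.
windows = [2, 2, 3, 4, 4, 6, 8, 10, 12, 2, 3, 5]

before = viable_studies(windows, cycle_weeks=8)  # assumed current average cycle
after = viable_studies(windows, cycle_weeks=1)   # assumed compressed cycle
print(f"{before} of {len(windows)} studies viable today, {after} after compression (+{after - before})")
```

Framing the result as "studies that can now inform a decision" rather than "studies per year" keeps the case anchored on decision timing, which is the argument this section recommends leading with.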

How Conveo supports enterprise qualitative research automation

Research Ops Managers reading this article have worked through a real evaluation framework: what qualitative research automation actually covers, where governance requirements sit, and what it takes for AI-generated themes and findings to earn stakeholder trust. Conveo is built to meet all three standards in a single platform.

Workflow coverage

Conveo covers the full qualitative research cycle: participant recruitment from vetted global panels, AI-moderated video interviews across 50+ languages, automated qualitative content analysis and thematic analysis, and stakeholder-ready reporting. There is no handoff between a recruitment platform, a moderation tool, a transcription service, and a reporting layer. For consumer and market insights teams whose bottleneck spans the entire cycle, that consolidation removes the coordination overhead that point solutions leave in place.

Governance and compliance

Conveo is SOC 2 certified, GDPR-compliant, and supports EU regional data hosting. SSO and role-based access controls are standard. For Research Ops Managers building a procurement case, these credentials map directly to the enterprise procurement checklist: no compliance gaps to explain and no security questionnaires requiring custom documentation.

Evidence traceability

Every AI-generated theme Conveo surfaces ties back to verbatim quotes and source video clips. Stakeholders can move from a high-level finding to the specific participant response that supports it. Enterprise teams at Google, Reddit, FOX, and Bosch rely on this level of source-linked evidence as a baseline requirement for research outputs that inform real decisions.

Frequently Asked Questions

What is qualitative research automation?

What are the benefits of automating qualitative research interviews?

How does qualitative research automation maintain research rigor?

What should enterprise teams look for in a qualitative research automation platform?