TL;DR

Transcription in qualitative research converts recorded interviews into structured written text for coding and analysis. Without accurate research transcripts, qualitative data analysis loses its foundation.

Three transcription types serve different research purposes: verbatim transcription captures every word spoken and non-verbal cues; intelligent verbatim transcription removes noise while preserving participant intent; edited transcription restructures responses for pattern identification. Most enterprise work defaults to intelligent verbatim.

Consistency in the transcription process matters as much as accuracy. Standardizing qualitative data transcription across hundreds of qualitative interviews is where manual transcription and fragmented workflows break down.

Integrated platforms eliminate the export-clean-re-upload cycle that adds days to every project and introduces errors in transcribed data between steps.

Enterprise buyers must verify: SOC 2 certification, regional data hosting, GDPR-compliant consent language, and sensitive data handling protocols.

Transcription in qualitative research is where insight generation either accelerates or stalls. Raw research transcripts that arrive without speaker labels, consistent timestamps, or standardized formatting cannot be coded until someone cleans them first. That cleaning step compounds across qualitative studies: when each interview produces a different artifact, cross-participant comparison becomes unreliable, and findings arrive after the decision window has closed.

Analysis-ready transcription is the foundation that makes qualitative data comparable, traceable, and credible.

What is transcription in qualitative research, and why does quality determine analysis speed?

Transcription in qualitative research is the process of converting spoken data from qualitative interviews and focus group discussions into structured written text for qualitative data analysis. While quantitative research produces structured numerical data that is immediately analyzable, qualitative research generates spoken data (the words participants say in in-person interviews, remote sessions, and focus groups) that must be converted into a written format before analysis can begin.

The bottleneck is structural. Analysts cannot begin thematic coding until research transcripts are cleaned, reformatted, and prepared for comparison. Inconsistent speaker labels force manual identification before a single theme can be tagged. Missing timestamps break the link between coded written text and source audio or video recordings. Unstructured text requires manual segmentation before any coding platform can process it. The importance of transcription in qualitative research comes down to this: poor transcription of qualitative data delays findings beyond decision windows, and that delay compounds across every qualitative study the team runs.

The standard to evaluate against is the analysis-ready transcript: consistent speaker labels, timestamps tied to the source recording, and a structure that a coding platform can process without reformatting. Enterprise-grade platforms produce this by default. Individual researchers relying on manual transcription and separate tools do not.
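As a rough illustration, the three properties of an analysis-ready transcript can be expressed as a simple check. This is a minimal sketch, not any platform's actual format: the `Segment` fields, the `INTERVIEWER` / `P01` labeling convention, and the function name are all assumptions made for the example.

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str          # assumed convention: "INTERVIEWER" or "P" + digits, e.g. "P01"
    start_seconds: float  # offset into the source recording
    text: str


# Hypothetical labeling convention; a real study would define its own.
SPEAKER_PATTERN = re.compile(r"INTERVIEWER|P\d{2,}")


def analysis_ready_issues(segments: list[Segment]) -> list[str]:
    """Return human-readable problems; an empty list means the transcript passes."""
    issues = []
    previous_start = -1.0
    for index, segment in enumerate(segments):
        if not SPEAKER_PATTERN.fullmatch(segment.speaker):
            issues.append(f"segment {index}: unexpected speaker label {segment.speaker!r}")
        if segment.start_seconds < previous_start:
            issues.append(f"segment {index}: timestamp goes backwards")
        if not segment.text.strip():
            issues.append(f"segment {index}: empty text")
        previous_start = segment.start_seconds
    return issues
```

A transcript that returns an empty list from a check like this can go straight to coding; anything else needs cleaning first.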

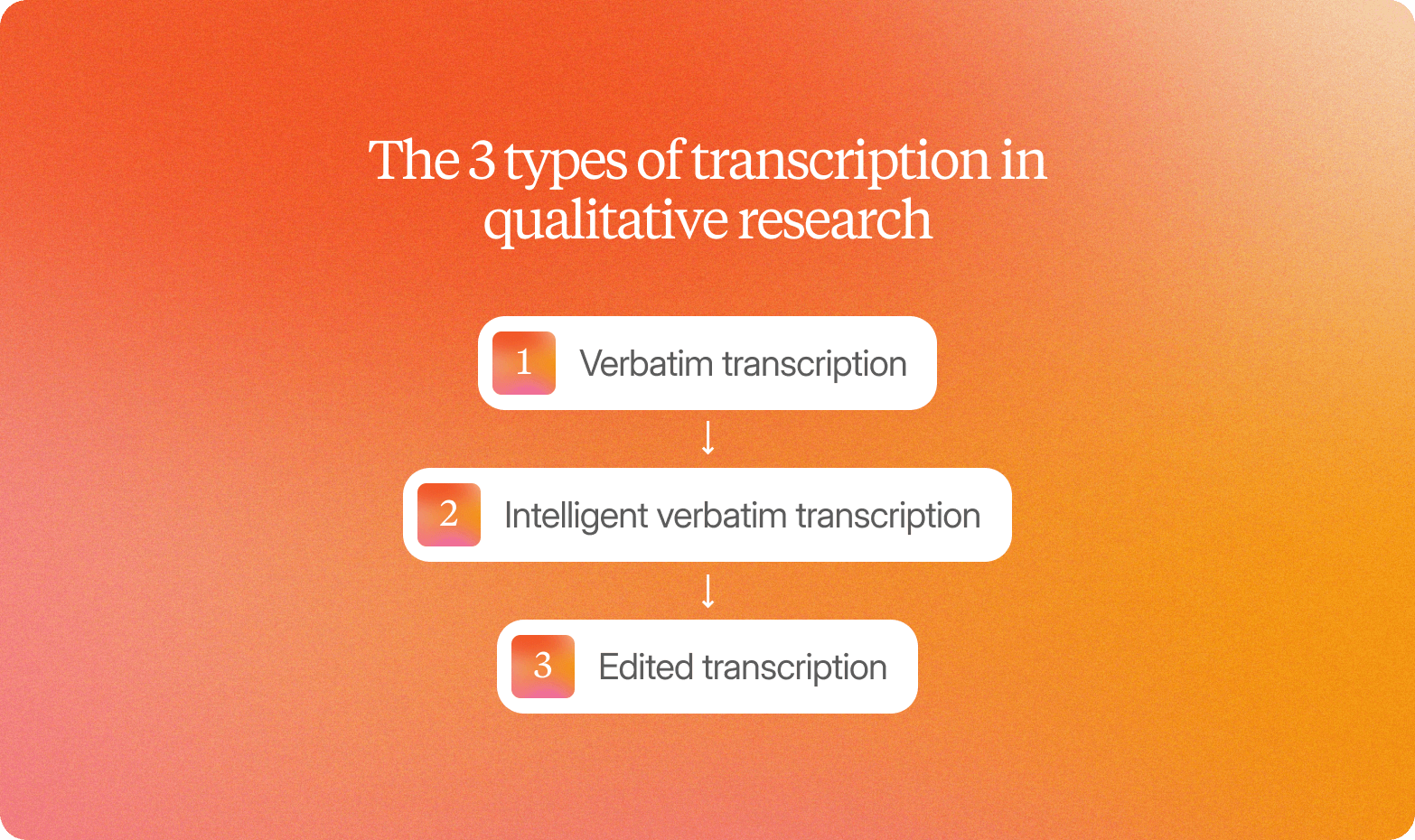

The 3 types of transcription in qualitative research

Three main approaches exist across qualitative research methods, each suited to a different analytical goal.

Verbatim transcription

Verbatim transcription in qualitative research captures every word spoken by participants, including false starts, filler words, pauses, and non-verbal cues such as laughter or audible sighs. Qualitative researchers use it when the question is about how something is said: discourse analysis, emotional response mapping, and focus group discussions where hesitation and tone carry meaning. This approach requires careful listening and is time-intensive to produce and code.

Intelligent verbatim transcription

Intelligent verbatim transcription captures everything a participant says, then removes the noise: filler words, false starts, repeated phrases. The result is clean, readable written text that preserves meaning and speaker intent. It is the default for thematic analysis in qualitative research and is well-suited to concept testing and most consumer qualitative interviews. The trade-off: when hesitation or self-correction carries analytical weight, intelligent verbatim transcription removes context that a skilled researcher might otherwise probe.
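To make the cleaning step concrete, here is a deliberately crude sketch of turning a verbatim utterance into intelligent verbatim. The filler list and the function name are assumptions for illustration; real tools rely on more than pattern matching, and a rule this simple will also strip phrases like "I mean" or "very very" when they are used on purpose.

```python
import re

# Illustrative filler list; a real study would define its own in the protocol.
FILLERS = ("um", "uh", "er", "you know", "i mean")

# A filler, plus the comma before and after it if present.
_FILLER_PATTERN = re.compile(
    r"(?:,\s*)?\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b,?",
    flags=re.IGNORECASE,
)
# Immediate word repetitions such as "I I think" or "the the price".
_REPEAT_PATTERN = re.compile(r"\b(\w+)(?:\s+\1\b)+", flags=re.IGNORECASE)


def to_intelligent_verbatim(utterance: str) -> str:
    """Remove listed fillers and immediate word repetitions, then tidy spacing."""
    cleaned = _FILLER_PATTERN.sub("", utterance)
    cleaned = _REPEAT_PATTERN.sub(r"\1", cleaned)
    return re.sub(r"\s{2,}", " ", cleaned).strip()


print(to_intelligent_verbatim("Um, I I think the, uh, price is, you know, too high."))
# prints: I think the price is too high.
```

The same example shows what is lost: the hesitation around "price" disappears, which is exactly the signal a discourse analyst would want to keep.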

Edited transcription

Edited transcription, sometimes called summary transcription, condenses participant responses into key points rather than preserving exact language. Market researchers and qualitative researchers conducting large-scale studies use it to efficiently identify patterns in qualitative data. The cost is traceability: without verbatim language, the evidence trail that lets stakeholders trace a finding back to a real participant's words is lost.

The decision rule: discourse analysis requires verbatim transcription; concept testing calls for intelligent verbatim; large-scale pattern detection is where edited transcription earns its place. Enterprise research platforms like Conveo apply the appropriate type consistently across every session, eliminating the variation that occurs when individual researchers or professional transcribers make ad hoc choices.

How to standardize transcription across research teams

Without shared conventions, each interview becomes its own artifact. One member of the research team labels speakers as "I" and "P." Another uses full names. When those research transcripts reach qualitative researchers for analysis, cross-participant comparison breaks down, and automated theme detection produces noise instead of signal.

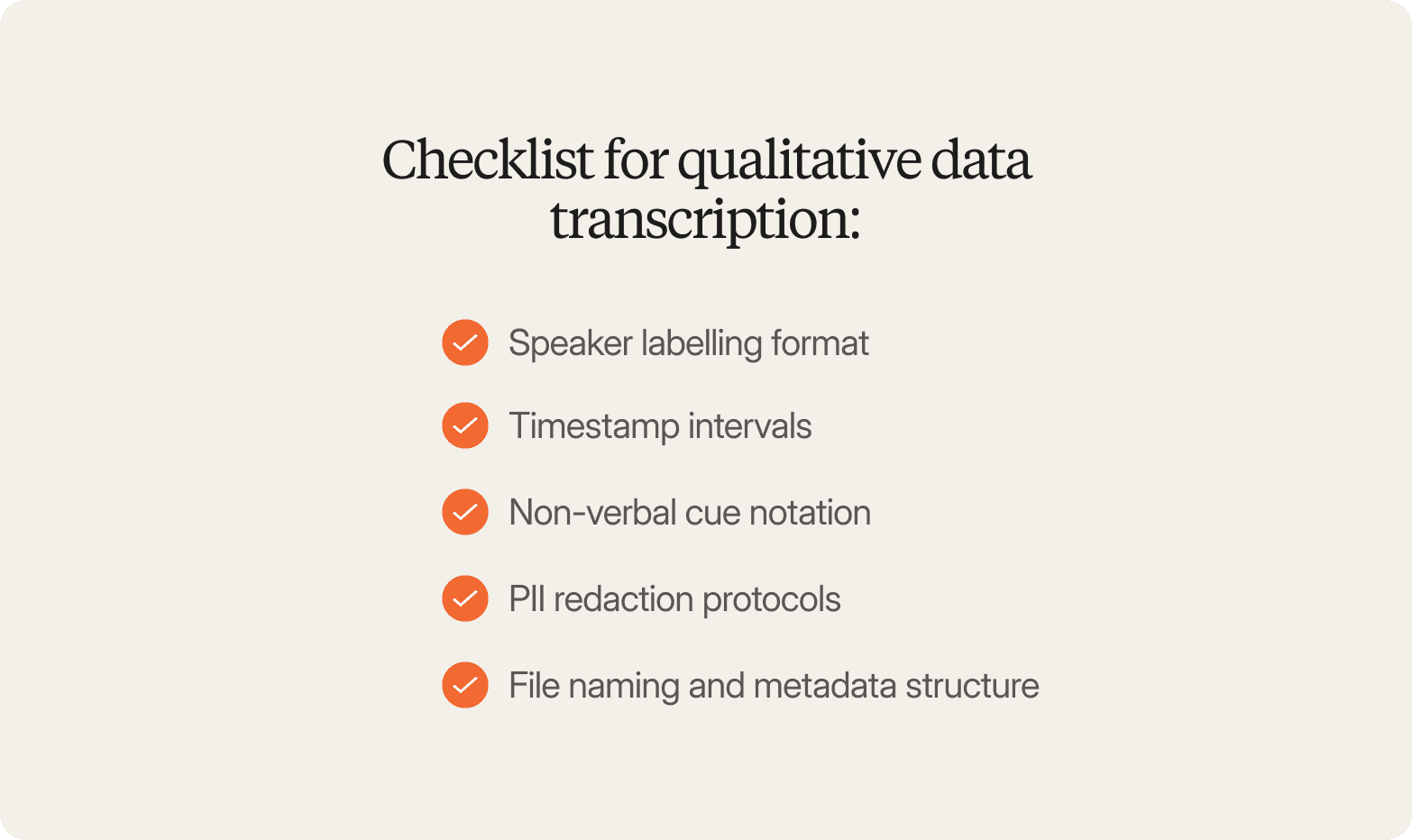

A standardization checklist for qualitative data transcription:

Speaker labeling format: one convention across all qualitative studies, applied without exception

Timestamp intervals: every 30 seconds, at each question transition, or on demand, documented in the study protocol

Non-verbal cue notation: consistent markers for laughter, pauses, and tone shifts

PII redaction protocols: names, locations, and employer references removed from research transcripts at transcription, not after analysis

File naming and metadata structure: study ID, participant ID, date, and language, consistent across projects
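Several items on this checklist can be enforced in code rather than by convention alone. The sketch below assumes a two-role labeling scheme, an `[HH:MM:SS]` timestamp format, and an underscore-separated file name; all of these, along with the function names, are hypothetical choices for the example, not a prescribed standard.

```python
from datetime import date

# Hypothetical mapping from ad hoc labels to one study-wide convention.
SPEAKER_ALIASES = {
    "i": "INTERVIEWER", "interviewer": "INTERVIEWER", "moderator": "INTERVIEWER",
    "p": "PARTICIPANT", "participant": "PARTICIPANT", "respondent": "PARTICIPANT",
}


def normalize_speaker(raw_label: str) -> str:
    """Map an ad hoc label such as 'I:' or 'Respondent' to the shared convention."""
    key = raw_label.strip().rstrip(":").strip().lower()
    if key not in SPEAKER_ALIASES:
        # Fail loudly (e.g. on full names) instead of letting a stray label through.
        raise ValueError(f"Unmapped speaker label: {raw_label!r}")
    return SPEAKER_ALIASES[key]


def format_timestamp(seconds: float) -> str:
    """Render an offset into the recording as [HH:MM:SS]."""
    hours, remainder = divmod(int(seconds), 3600)
    minutes, secs = divmod(remainder, 60)
    return f"[{hours:02d}:{minutes:02d}:{secs:02d}]"


def transcript_filename(study_id: str, participant_id: str,
                        session_date: date, language: str) -> str:
    """Build a file name from study ID, participant ID, date, and language."""
    return f"{study_id}_{participant_id}_{session_date.isoformat()}_{language.lower()}.txt"


print(normalize_speaker("I:"))   # prints: INTERVIEWER
print(format_timestamp(3725.4))  # prints: [01:02:05]
print(transcript_filename("ST042", "P07", date(2024, 3, 5), "EN"))
# prints: ST042_P07_2024-03-05_en.txt
```

Raising an error on an unmapped label is a design choice: it surfaces the inconsistency at transcription time rather than during cross-participant comparison.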

Conveo applies these rules automatically across every session, regardless of who ran the interview or which market the participant was in. That structural consistency is what makes standardization achievable at enterprise scale, not a guideline that lives in a shared folder.

Transcription workflow integration: From interview to analysis

Most teams that treat transcription as a standalone step in the research process live with the same delay cycle. The qualitative interview finishes; someone exports the audio file, uploads it to a transcription service, waits, downloads the output, cleans the speaker labels, reformats the transcript, and then uploads it to a separate analysis environment. Each handoff costs time and context. Tone, hesitation, the moment a participant's voice shifted: none of that survives a plain-text export from a point transcription tool.

Conveo eliminates that gap. When an interview is completed on the platform, the transcript is auto-generated with speaker labels and timestamps, themes are detected, and coding can begin the same day. The research team spends time on interpretation rather than file management.

How to analyze interview transcripts in qualitative research

Analyzing interview transcripts in qualitative research is where most analysis timelines collapse. Twenty qualitative interviews produce hundreds of pages of written text. Manual coding takes days: reading each transcript, tagging key themes, grouping those themes into categories, synthesizing patterns across data segments, and linking every finding back to verbatim evidence.

Automated theme detection addresses the scale problem. AI can identify patterns across large sets of interview transcripts and surface key insights without days of manual coding. But the output is only defensible if it remains traceable. Analyzing interview transcripts with a generic large language model produces summaries, not evidence. When a stakeholder asks which participants said what, a summary without source links cannot answer that question.
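What "traceable" means in practice can be shown with a small data structure: every theme keeps the participant, timestamp, and exact quote behind it. This is a minimal sketch under assumed names (`Evidence`, `ThemeIndex`), not a description of how any particular platform stores its data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    participant_id: str
    start_seconds: float  # where in the recording the quote begins
    quote: str            # verbatim text from the transcript


class ThemeIndex:
    """Maps each theme to the verbatim evidence that supports it."""

    def __init__(self) -> None:
        self._evidence: dict[str, list[Evidence]] = defaultdict(list)

    def tag(self, theme: str, evidence: Evidence) -> None:
        self._evidence[theme].append(evidence)

    def participants_for(self, theme: str) -> list[str]:
        """Answer 'which participants said this?' for a given theme."""
        return sorted({e.participant_id for e in self._evidence.get(theme, [])})

    def quotes_for(self, theme: str) -> list[Evidence]:
        return list(self._evidence.get(theme, []))


index = ThemeIndex()
index.tag("pricing", Evidence("P03", 412.0, "It costs more than I expected."))
index.tag("pricing", Evidence("P01", 95.5, "The price felt steep for what you get."))
print(index.participants_for("pricing"))  # prints: ['P01', 'P03']
```

A summary produced without this kind of link can state that pricing was a concern; only the index can say who raised it and where in the recording.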

Conveo is built on research-grade qualitative analysis: every identified theme ties back to the verbatim quote and video recording that generated it, providing valuable insights that stakeholders can verify rather than simply accept.

Enterprise requirements: Multilingual research and data governance

Multilingual research

Enterprise teams running qualitative research across markets face a problem that arises before analysis can begin. Transcription and translation in qualitative research introduce failure points that compound at every stage. Interviews conducted in local languages must be transcribed accurately in the original language before translation. When that chain breaks, brand terms are rendered inconsistently, regional dialect variations are flattened, and complex terminology loses meaning, making cross-market comparisons of qualitative data unreliable.

Conveo's multilingual infrastructure covers more than 50 languages, with automated transcription in the original language and machine translation.

Data governance

Procurement and legal teams ask a short set of questions before approving any transcription platform: where is research data stored, how is sensitive data handled, and what happens to recorded audio and video files after a qualitative study closes?

Participants must consent to recording, transcription, and storage. Strict confidentiality agreements must govern how any third-party transcription service handles transcribed data. Secure file transfer protocols are required for audio or video files. PII redaction must be applied at the transcription stage, removing identifiable details from written records before they reach the analysis layer.
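Redaction at the transcription stage can be sketched as a text pass that runs before a transcript is stored or shared. The example below is illustrative only: the regular expressions catch common email and phone formats, not all of them, and the `known_terms` list (participant names, employers) is assumed to be supplied per study. Pattern matching alone will miss identifiers it has not been told about, so it is not a substitute for review.

```python
import re

# Simple patterns for illustration; they will not catch every format.
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str, known_terms: list[str]) -> str:
    """Replace emails, phone-like numbers, and study-specific terms with placeholders."""
    redacted = EMAIL_PATTERN.sub("[EMAIL]", text)
    redacted = PHONE_PATTERN.sub("[PHONE]", redacted)
    # Longest terms first so "Anna Smith" is handled before "Anna".
    for term in sorted(known_terms, key=len, reverse=True):
        redacted = re.sub(rf"\b{re.escape(term)}\b", "[REDACTED]", redacted,
                          flags=re.IGNORECASE)
    return redacted


sample = "Email anna.k@example.com or call +32 470 12 34 56. Anna works at Acme."
print(redact_pii(sample, ["Anna", "Acme"]))
# prints: Email [EMAIL] or call [PHONE]. [REDACTED] works at [REDACTED].
```

Running this before the analysis layer, rather than after, means coders and stakeholders never see the identifiable version.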

Conveo is SOC 2 certified and GDPR-compliant, with optional EU regional data hosting, built-in PII redaction, and configurable role-based access controls.

What to look for in a transcription solution

The first question is not which tool transcribes most accurately. It is whether the transcription process sits inside your research workflow or outside it.

Standalone transcription software and generic transcription services produce written documents that need to be exported, reformatted, imported into a separate platform, and manually coded. Poor audio quality and background noise from focus group discussions introduce errors that human transcriptionists must correct. And a written transcript alone loses what a video recording preserves. A transcript linked to a video lets stakeholders watch the exact moment in which a participant said the thing that gave rise to a theme. Text-only transcription software cannot provide that.

"Conveo's video-first approach is a real differentiating methodological advantage. The ability to distill insights from reactions and not just hear answers adds context you simply can't get from transcript-only tools, or any other tool in the market for that matter."

Senior Marketing Research & Insights Manager, Google

Before committing to any approach to transcribing interviews, qualitative researchers should pressure-test against five questions:

Does the transcription process flow directly into analysis, or do research transcripts require export and re-upload?

Are speaker labels, timestamps, and structure applied automatically and consistently to every written transcript?

Can key themes be traced back to verbatim quotes and original video recordings?

Does the platform support multilingual transcription and translation for multi-market qualitative studies?

Is the vendor SOC 2 certified and GDPR-compliant for handling sensitive research data?

Conveo is built to answer all five.

How Conveo approaches transcription for enterprise research teams

Conveo treats transcription as an integrated part of the research process, not a preprocessing step that happens before the real work begins. Every session is transcribed, translated, and structured automatically as recorded audio and video files arrive. Speaker labels, timestamps, and consistent formatting are applied across every interview, market, and language, without a researcher manually cleaning transcripts before analysis begins.

Every identified theme links back to the verbatim quote and video recording that generated it. There is no export-clean-re-upload cycle, and findings can be traced and audited rather than taken on faith. Governance is built in: SOC 2-certified, GDPR-compliant, optional EU data hosting, built-in PII redaction, and support for 50+ languages.

Frequently Asked Questions

What is transcription in qualitative research?

What are the types of transcription in qualitative research?

Why is transcription important in qualitative research?

How do you analyze interview transcripts in qualitative research?

What is a verbatim transcription in qualitative research?

What is an example of transcription in qualitative research?