TL;DR

Voice of the customer interview questions are most effective when sequenced by objective: context-setting first, then experience reconstruction, then evaluation, then implication.

Most VoC question sets are designed for surveys, not conversations. Research-grade questions use a three-layer probing structure that turns vague answers into traceable, quotable findings.

Synthesis speed determines whether findings arrive in time to influence decisions. End-to-end platforms compress the full workflow from weeks to days and build institutional memory across studies.

The teams consistently generating decision-useful VoC insight build question guides around specific business decisions, not generic topics.

Most VoC interview guides treat question selection as the primary challenge. It isn't. The harder problem is structural: most teams ask reasonable voice of the customer interview questions in the wrong order, without a probing logic, and then hand the customer feedback to someone who wasn't in the room and expect a coherent synthesis. What comes out the other end is a deck full of quotable moments that don't connect to a decision.

VoC research takes many forms, from voice of customer surveys to in-depth customer interviews, but all of them share the same constraint: they are only as good as the questions behind them. That problem is now solvable in ways it wasn't before. Qualitative research at scale, with adaptive probing built into the interview structure, structured synthesis that delivers stakeholder-ready findings in days rather than weeks, and insight libraries that compound across studies, is no longer the preserve of agencies with long timelines and large budgets. The old constraints of slow, expensive, hard-to-defend qual are dissolving.

The gap most businesses still haven't closed is question architecture. Voice of the customer interview questions designed for surveys ask for ratings instead of stories. They lack the probing structure that turns a vague answer into quotable, traceable evidence. They also tend to ask for opinions before they ask for experiences, which is the wrong sequence: customers who have narrated a specific episode are far better equipped to explain why they decided or felt something. Asking the right questions in the right order is what separates satisfaction tracking from decision intelligence.

This guide provides research-grade question templates with adaptive probing structures that deliver stakeholder-ready findings in days, not weeks.

Why Most VoC Interview Questions Fail

Most VoC interview programs fail before the first participant says a word. The failure is structural, and it shows up in three places: questions designed for customer surveys, no mechanism for adaptive follow-up, and fragmented synthesis that takes weeks because nothing was built to be coded at scale.

The first failure is the most common. Teams repurpose a customer survey template for interviews, asking things like "How satisfied are you with your onboarding experience?" or "Would you recommend this product to a colleague?" These are rating prompts dressed as conversation starters. They produce scores, not stories. When reviewing VoC survey questions and voice of the customer sample interview questions across programs, the pattern is consistent: questions built around a sliding scale or numerical anchors generate numerical responses. The decision context, the competing priorities, the moment a customer almost churned but didn't, none of that surfaces from a scale question, a social media platform comment, or a star rating. Survey fatigue compounds this: customers who have completed dozens of customer survey questions in their careers have learned to give socially acceptable answers quickly. Positive feedback and specific feedback alike rarely survive a rating-based format.

The second failure is the absence of adaptive follow-up. A static discussion guide treats every participant identically. When a respondent hesitates, contradicts themselves, or mentions something unexpected, a scripted guide cannot pursue it. That hesitation is often where the real finding lives.

The third failure is the synthesis bottleneck. When every study uses a different question architecture, VoC data cannot be coded consistently or connected across programs. Research platforms that maintain a consistent question taxonomy across studies can surface patterns that a single study would miss entirely. Without that structure, a team spending two weeks manually coding transcripts is the only path to a deliverable. By the time it lands, the campaign has shipped, and the sprint has closed.

Small insights teams serving entire organizations cannot sustain that pace. The difference between satisfaction tracking and decision intelligence starts with how the voice of the customer interview questions are structured.

How to Structure Voice of the Customer Interview Questions for Deeper Insights

Research-grade voice of the customer interview questions share three qualities: they are open-ended, story-eliciting, and built for adaptive follow-up questions rather than scripted response collection. The goal is to surface what participants actually experienced, in their own language, with enough specificity to be traceable and credible.

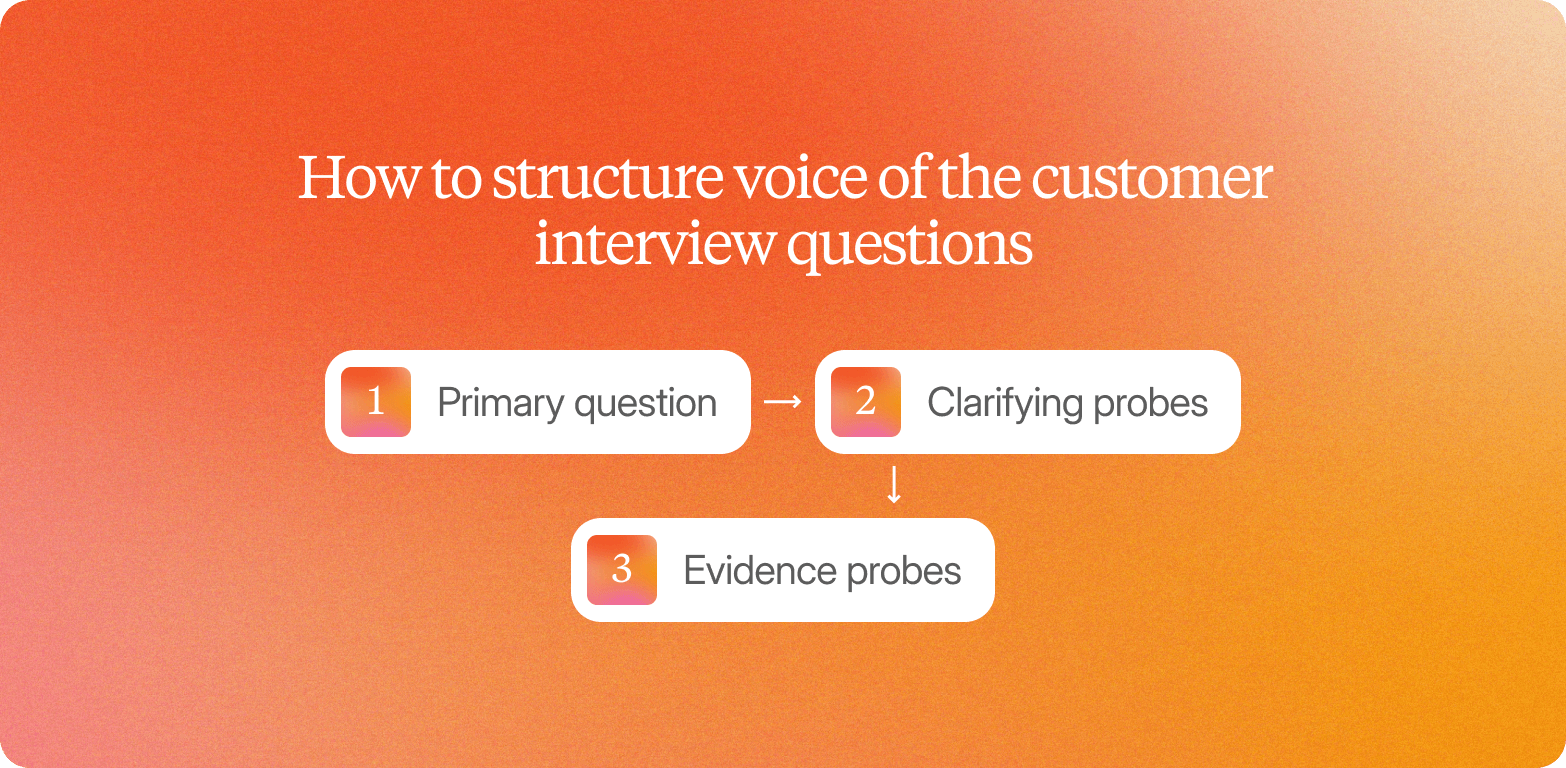

A reliable architecture for voice of the customer interview questions runs three layers deep.

Primary question

Broad and open, designed to invite narrative. "Walk me through the last time you tried to solve this problem. What happened?"

Clarifying probes

Deepen specificity without leading. "What made that moment particularly frustrating?" or "What were you hoping would happen instead?"

Evidence probes

Elicit concrete examples and quotable verbatim. "Can you give me a specific example of when that happened?" or "What did you actually do next?"

Contrast the three-layer approach with the survey equivalent: "On a scale of 1-10, how satisfied are you with the experience?" That question produces a number. It tells you nothing about what drove the rating, what the participant tried before, or how they would describe the experience to a colleague. The three-layer approach produces a finding you can stand behind in a stakeholder meeting.
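One way to keep the three layers intact across a discussion guide is to store each question as a small structured record rather than a line in a document. The sketch below is illustrative only: the class name, field names, and the rating-prompt heuristic are assumptions, not part of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class LayeredQuestion:
    """One primary question with its clarifying and evidence probes."""

    primary: str
    clarifying_probes: list[str] = field(default_factory=list)
    evidence_probes: list[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        # Treat a question as usable only when all three layers are filled in.
        return (
            bool(self.primary.strip())
            and bool(self.clarifying_probes)
            and bool(self.evidence_probes)
        )

    def looks_like_rating_prompt(self) -> bool:
        # Crude heuristic (an assumption): flag survey-style scale wording.
        text = self.primary.lower()
        markers = ("on a scale", "how satisfied", "would you recommend")
        return any(marker in text for marker in markers)


workflow_question = LayeredQuestion(
    primary="Walk me through the last time you tried to solve this problem. What happened?",
    clarifying_probes=[
        "What made that moment particularly frustrating?",
        "What were you hoping would happen instead?",
    ],
    evidence_probes=[
        "Can you give me a specific example of when that happened?",
        "What did you actually do next?",
    ],
)

survey_question = LayeredQuestion(
    primary="On a scale of 1-10, how satisfied are you with the experience?"
)

for question in (workflow_question, survey_question):
    print(question.is_complete(), question.looks_like_rating_prompt())
```

A check like this can run over a whole guide before fieldwork to catch primary questions that have no probes behind them or that have drifted back into survey wording.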

Video-first interviews add a layer that text transcripts miss entirely. A participant's tone shift when describing a competitor, a pause before answering a pricing question, or visible hesitation when evaluating a concept are all signals that a transcript flattens into silence. Platforms conducting real voice and video conversations, not text-only or avatar-based alternatives, capture these cues by design.

Consistent question wording across studies also compounds in value over time. When the same primary questions appear in a packaging study, a concept test, and a brand tracking wave, the insight library begins connecting patterns that no single study could surface alone. A well-structured set of voice of the customer interview questions does not expire at the end of a project.

The templates in the next section translate this architecture into ready-to-use VoC questions by use case.

4 Research-Grade VoC Interview Question Templates by Use Case

The four template sets below cover the most common VoC use cases: product development, customer satisfaction, churn prevention, and competitive intelligence. Each follows the same structure, extending the three layers above with a fourth probe that ties the answer to a consequence: Primary Question, Clarifying Probe, Evidence Probe, Outcome Probe. The language is concrete and copy-paste-ready; adapt it to the context of your products or services.

Product Development VoC Questions

Three templates covering unmet needs, usage contexts, and feature prioritization. Platforms with adaptive probing built into the question flow produce more codeable data, reducing the time from fieldwork to finding before the sprint closes.

Template 1: Unmet Needs

Primary question: When you think about the biggest friction point in your current workflow, what is the one thing you consistently wish you could do that you currently cannot?

Clarifying probe: You mentioned it feels difficult. What specifically makes it difficult? Is it a missing step, a missing capability, or something that exists but does not work the way you need it to?

Evidence probe: Walk me through the last time you ran into that limitation. What were you trying to accomplish, and what did you do instead?

Outcome probe: If that friction were removed, what would you be able to do differently in your role?

Template 2: Usage Contexts

Primary question: Describe the last time you used this product to complete a task that really mattered. What were you trying to achieve, and what did the process look like from start to finish?

Clarifying probe: You mentioned you had to work around something. What was the workaround, and how often do you rely on it?

Evidence probe: Who else was involved in that process, and at what point did the product stop being the primary way you were working?

Outcome probe: What would a version of that workflow look like if it worked exactly the way you needed it to?

Template 3: Feature Prioritization

Primary question: If you could change one thing about this product before your next major project, what would it be, and why does that one thing matter more than everything else?

Clarifying probe: You said it would make things easier. What specifically would change about how you work? What step goes away, or what step becomes possible that is not today?

Evidence probe: Has a missing or broken version of that capability ever caused a delay or a decision to be made without the information you needed?

Outcome probe: If that change were in place today, how would it affect what you can deliver to your stakeholders in the next 30 days?

Customer Satisfaction and Experience Questions

Net Promoter Score, Customer Effort Score, and CSAT tell you where overall satisfaction lands. They do not tell you what tipped a customer toward frustration, what nearly made them switch, or what they would change first if they could. These interview templates surface the specific moments, pain points, and friction across the customer journey that scores obscure.

Q1: Think about the last time you had a notably positive or negative experience with us. Walk me through what happened.

Clarifying Probe: What were you trying to accomplish when that happened?

Evidence Probe: Can you describe the specific moment things felt right or went wrong?

Outcome Probe: How did that experience affect what you did next?

Q2: If you could change one thing about how we support you as a customer, what would it be?

Clarifying Probe: How long has that been a friction point for you?

Evidence Probe: Has that gap ever caused you to look at alternatives or delay a decision?

Outcome Probe: What would change for you if that friction went away?

Q3: When you think about the value you get from us relative to what you pay, how does that feel?

Clarifying Probe: What does value actually mean to you in this context: time saved, outcomes delivered, or confidence in decisions?

Evidence Probe: Can you give me a recent example where you felt that value clearly, or where it felt less clear?

Outcome Probe: What would need to be true for you to describe us as genuinely indispensable?

Churn Prevention and Retention Questions

Designed to surface the specific moments, not general sentiment, that precede a customer's decision to leave or stay. Brand loyalty is rarely lost in a single event. These questions expose the sequence of friction before it becomes churn.

Question 1: The Switching Trigger

Primary: "Walk me through the last time you seriously considered switching away from [product/service]. What was happening at that point?"

Clarifying probe: "What specifically happened that day or that week that made it feel urgent?"

Evidence probe: "Can you describe a concrete example of the problem you were experiencing?"

Outcome probe: "What would have had to change for that moment to never have happened?"

Question 2: The Alternative Evaluation

Primary: "When you were considering alternatives, which options did you look at, and what were you hoping they would do differently?"

Clarifying probe: "What made you start that search in the first place?"

Evidence probe: "What did those alternatives offer that felt meaningfully different from what you had?"

Outcome probe: "What ultimately made you stay, or what made the alternative feel like a real option?"

Question 3: The Loyalty Driver

Primary: "Think about a moment when [product/service] genuinely delivered for you. What happened, and what made it feel different from your baseline expectation?"

Clarifying probe: "What was at stake for you personally in that situation?"

Evidence probe: "How did that experience affect how you thought about the product going forward?"

Outcome probe: "If that experience happened consistently, how would it change your likelihood of recommending this to a colleague?"

Competitive Intelligence Questions

Questions written for a single team's use produce findings that don't travel. The templates below are designed to surface intelligence relevant to product, marketing, sales, and customer service teams from a single conversation, so findings reach the teams that need them without requiring separate studies.

Primary Question 1

When you were evaluating your options before making a purchase decision, what other products or approaches did you seriously consider?

Clarifying probe: What made those alternatives feel worth evaluating at the time? What were you hoping they could do?

Evidence probe: Can you walk me through how you actually compared them? What did you look at, and in what order?

Outcome probe: Looking back, what would have had to be true about one of those alternatives for you to have chosen it instead?

Primary Question 2

Were there any moments during your evaluation where a competitor's brand recognition or product capability seemed like the stronger choice? What was happening at that point?

Clarifying probe: What specifically made it feel like the stronger option? Was it a feature, a conversation, something you read, or something a colleague said?

Evidence probe: How did you resolve that uncertainty? What tipped the decision back?

Outcome probe: Is that consideration still relevant to how you think about your current solution today?

Primary Question 3

If your current solution disappeared tomorrow and you had to choose again, what tools would make your shortlist? What would you prioritize differently this time?

Clarifying probe: What's changed in what you need since you first made this decision?

Evidence probe: Which of those priorities would be non-negotiable versus nice-to-have?

Outcome probe: What would make you confident you'd made the right call faster than last time?

Adaptive Probing: How to Deepen Vague Answers in Real Time

Adaptive probing means the follow-up questions respond to what a participant actually said, not to the next item on a scripted list. It is the mechanism that converts a surface-level answer into valuable feedback worth acting on.

The problem is that participants frequently give vague or socially acceptable answers on the first pass. Those answers are not dishonest. They are incomplete. Consider three common examples:

"It's fine."

Probe: "What would need to change for it to feel more than fine?" / "What does 'fine' look like compared to something you genuinely love using?"

"I like it overall."

Probe: "Which part stood out most, and why did that land differently?" / "Was there anything you glossed over on first look but noticed on second?"

"It's a bit confusing."

Probe: "Where exactly did you pause?" / "What were you expecting to happen at that moment?"

Each probe moves the participant from evaluation to description, which is where traceable findings live. Negative feedback that arrives as "it's confusing" is not actionable. Negative feedback that arrives as "I expected the dashboard to update in real time, but it didn't, and I couldn't tell whether my settings had saved" is. The same applies to positive feedback: "I like it" is not useful feedback until a probe surfaces what specifically delivered that positive experience.
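The mapping from a vague first answer to a follow-up probe can be sketched as a simple lookup. This is a deliberately minimal illustration, not how any real interviewing platform works: the patterns, the word-count threshold, and the function name are all assumptions, and the probes are the ones listed above.

```python
import re

# Hypothetical lookup: pattern for a vague answer -> probes from the examples above.
VAGUE_ANSWER_PROBES = [
    (
        re.compile(r"\bfine\b", re.IGNORECASE),
        [
            "What would need to change for it to feel more than fine?",
            "What does 'fine' look like compared to something you genuinely love using?",
        ],
    ),
    (
        re.compile(r"\blike it\b", re.IGNORECASE),
        [
            "Which part stood out most, and why did that land differently?",
            "Was there anything you glossed over on first look but noticed on second?",
        ],
    ),
    (
        re.compile(r"\bconfus", re.IGNORECASE),
        [
            "Where exactly did you pause?",
            "What were you expecting to happen at that moment?",
        ],
    ),
]

# Assumption: only short answers are treated as candidates for these probes.
MAX_VAGUE_WORDS = 8


def suggest_probes(answer: str) -> list[str]:
    """Return follow-up probes for a short, evaluative answer, else an empty list."""
    if len(answer.split()) > MAX_VAGUE_WORDS:
        # A longer answer is more likely to be descriptive already.
        return []
    for pattern, probes in VAGUE_ANSWER_PROBES:
        if pattern.search(answer):
            return probes
    return []


print(suggest_probes("It's a bit confusing."))
```

A human moderator or an adaptive system does far more than keyword matching, but the shape is the same: detect an evaluation without a description, then ask for the description.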

In traditional moderation, this requires a live researcher in the room or on the call. That is not a technology limitation. It is a structural constraint: one moderator, one conversation at a time. Scaling to 50 or 500 customer interviews means hiring more moderators, coordinating schedules across markets, and accepting that depth comes at the cost of volume.

Conveo's AI-led interviewing builds adaptive probing into the question flow, running asynchronously across 10 to 1,000 real conversations in parallel. The researcher's judgment shapes the guide; the platform carries that judgment into every session without requiring live presence.

"The video clips make it tangible, it's not just data anymore, it's real people with real emotions."

CMI Manager, Edgar & Cooper

Adaptive probing produces richer data. But only if synthesis is fast enough to matter.

From Interview Transcripts to Stakeholder-Ready Findings

The synthesis bottleneck in VoC research isn't fieldwork. It's everything that happens after fieldwork closes. Manual coding and thematic analysis across a multi-study program can consume 40 to 60 hours per project, which means findings routinely land weeks after the business decision has already moved on without them.

The path from raw interviews to a stakeholder-ready output runs through three stages: transcription, thematic coding, and structured reporting. In a traditional agency workflow, each stage is handled sequentially and manually. That chain takes weeks. By the time the report lands, the campaign has launched, or the product brief has been locked.

Integrated platforms compress that chain. When voice of the customer interview questions are structured consistently across studies, automated coding becomes faster and more accurate: the platform recognizes recurring themes, maps them to prior studies, flags contradictions against earlier findings, and connects outputs to the key performance indicators that matter to each team. Transcription, coding, and synthesis happen in parallel rather than in sequence.
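To make the idea of recurring themes concrete, here is a minimal sketch of how consistently coded excerpts can be grouped across studies. The study names, theme codes, and quotes are invented for illustration, and the function is an assumption about one simple way to do this, not a description of any platform's coding engine.

```python
from collections import defaultdict

# Hypothetical coded excerpts: (study, participant_id, theme_code, quote).
coded_excerpts = [
    ("packaging_study", "p01", "unclear_pricing", "I could not tell what the larger pack cost per unit."),
    ("concept_test", "p07", "unclear_pricing", "The price only showed up on the last screen."),
    ("concept_test", "p09", "slow_onboarding", "Setup took me most of an afternoon."),
    ("brand_tracking", "p03", "unclear_pricing", "I still do not know which plan I am on."),
]


def themes_across_studies(excerpts, min_studies=2):
    """Return themes seen in at least `min_studies` studies, with traceable quotes."""
    studies_by_theme = defaultdict(set)
    quotes_by_theme = defaultdict(list)
    for study, participant, theme, quote in excerpts:
        studies_by_theme[theme].add(study)
        # Keep study and participant with each quote so the finding stays traceable.
        quotes_by_theme[theme].append((study, participant, quote))
    return {
        theme: quotes_by_theme[theme]
        for theme, studies in studies_by_theme.items()
        if len(studies) >= min_studies
    }


recurring = themes_across_studies(coded_excerpts)
for theme, quotes in recurring.items():
    print(theme, len(quotes))
```

The grouping only works because every study uses the same theme codes, which is the practical payoff of a consistent question architecture.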

What "stakeholder-ready" actually means matters here. It isn't a polished deck. It's structured themes with supporting quotes embedded alongside participant video clips, so the person reading the findings can click through to the real conversations behind them. Credibility comes from traceability. Stakeholders who can see the moment a participant's tone shifted at a price point are far more likely to act on the finding than those handed a summary they cannot verify.

Teams using Conveo report compressing multi-study synthesis from six-plus weeks to three to five days, without reducing the rigor that experienced researchers and their stakeholders require. Every AI-generated theme links back to its source video, and findings flow into the compounding insight library, where they remain searchable and connectable across future studies. That institutional memory only holds its value if the data behind it is governed correctly.

Global and Governance Considerations for Enterprise VoC Programs

Running VoC interviews across multiple markets creates two friction points that slow programs down before a single question gets asked: translation quality and compliance requirements. Both are solvable, but only if they're built into the study design from the start rather than treated as afterthoughts.

Translation and Cultural Nuance

Three translation pitfalls break multi-market VoC studies: literal translation that strips idiomatic meaning, probes that carry cultural assumptions that don't transfer, and inconsistent question wording across markets that makes cross-study comparison impossible.

Consider the difference between two customer questions. "How do your company's products fit into your daily routine?" translates cleanly across most markets because it uses plain, direct language and makes no cultural assumptions. "What's your gut feeling about this brand?" is harder. "Gut feeling" is an idiom that translates awkwardly into German, Japanese, or Arabic, and the resulting question often means something slightly different in each language, making aggregate analysis unreliable.

The fix is to write voice of the customer interview questions that translate cleanly: simple sentence structures, no idioms, no colloquial shorthand. This matters not just for fieldwork quality but for the compounding insight library to function across markets with consistent question wording.
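A lightweight pre-translation check can catch some of these problems before a guide goes to native-speaker review. The sketch below is a rough aid under stated assumptions: the idiom list is a small hand-maintained sample, and the sentence-length threshold is arbitrary. It does not replace testing with native speakers.

```python
import re

# Assumption: a small, hand-maintained sample of English idioms to flag.
IDIOMS_TO_AVOID = ["gut feeling", "at the end of the day", "ballpark", "move the needle"]

# Assumption: sentences longer than this are flagged as hard to translate cleanly.
MAX_WORDS_PER_SENTENCE = 20


def translation_warnings(question: str) -> list[str]:
    """Return human-readable warnings for wording that may not translate cleanly."""
    warnings = []
    lowered = question.lower()
    for idiom in IDIOMS_TO_AVOID:
        if idiom in lowered:
            warnings.append(f"idiom: {idiom}")
    # Split on sentence-ending punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.?!])\s+", question.strip()):
        if len(sentence.split()) > MAX_WORDS_PER_SENTENCE:
            warnings.append(f"long sentence: {sentence[:40]}...")
    return warnings


print(translation_warnings("How do your company's products fit into your daily routine?"))
print(translation_warnings("What's your gut feeling about this brand?"))
```

Running the same check on every market's source guide also helps keep the wording consistent, since any flagged question gets rewritten once rather than patched per language.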

Conveo's global panel reaches participants across 50+ languages, with vetted participants and automatic transcription and translation built into the platform.

Consent, Privacy, and Compliance

Three governance layers apply to every recorded VoC interview program. First, participant consent: recorded voice and video interviews require explicit, informed opt-in before any session begins. Under GDPR, consent must be freely given, specific, informed, and unambiguous, with participants able to withdraw and request deletion at any time. A compliant consent statement makes three things explicit: that the session will be recorded, how the recording will be stored and who can access it, and what participants' rights are. Second, data residency: many EU buyers require that participant data be hosted within the EU, which rules out platforms running on US-only infrastructure. Third, audit trails: enterprise teams need traceable records of consent and data handling to satisfy internal legal review and respond to data subject requests.

A fourth requirement is increasingly non-negotiable: participant authenticity. Enterprise procurement teams are now asking whether research outputs are grounded in real human conversations or generated by synthetic participants. A compliance checklist that confirms real, verified participants is no longer optional for teams presenting findings to executive stakeholders.

Conveo is SOC 2 certified and GDPR-compliant, with EU regional data hosting. Every session links consent records, participant verification, and source video in a single traceable audit trail.

Enterprise VoC Program Checklist: Translation and Compliance

Translation Checklist

Plain language, no idioms

Tested with native speakers before fieldwork

Consistent question wording across all markets

Wording reviewed for cultural assumptions, not just linguistic accuracy

Compliance Checklist

Explicit participant consent obtained before recording begins

Data residency confirmed (EU hosting for EU participants)

Audit trail in place for consent records and data handling

Real participants verified: no synthetic or avatar-based responses
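The compliance checklist above can also be expressed as a per-session check that runs before findings are shared. This is a minimal sketch: the record fields, region labels, and function name are hypothetical, and a real program would follow its own legal team's requirements rather than this simplified logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionRecord:
    """Hypothetical per-session governance record."""

    participant_id: str
    consent_given_before_recording: bool
    participant_region: str          # e.g. "EU" or "US" (illustrative labels)
    hosting_region: str              # where the recording is stored
    consent_log_ref: Optional[str]   # pointer to the stored consent record
    participant_verified: bool       # confirmed as a real person


def compliance_gaps(record: SessionRecord) -> list[str]:
    """Return the checklist items this session fails, in checklist order."""
    gaps = []
    if not record.consent_given_before_recording:
        gaps.append("consent")
    if record.participant_region == "EU" and record.hosting_region != "EU":
        gaps.append("data residency")
    if not record.consent_log_ref:
        gaps.append("audit trail")
    if not record.participant_verified:
        gaps.append("participant verification")
    return gaps


clean_session = SessionRecord("p01", True, "EU", "EU", "consent/p01", True)
risky_session = SessionRecord("p02", True, "EU", "US", None, True)

print(compliance_gaps(clean_session))
print(compliance_gaps(risky_session))
```

Returning the list of failed items, rather than a single pass or fail flag, makes it easier to route each gap to whoever owns it.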

How Conveo Supports Research-Grade VoC Interviews at Enterprise Scale

The shift in qualitative research is not about replacing human judgment with AI. It is about removing the structural constraints that have kept qual periodic, siloed, and slow. Adaptive probing that follows what a participant actually says. Synthesis that compresses weeks of manual analysis into days. An insight library that compounds across every study rather than dying in a deck. An enterprise compliance infrastructure, including SOC 2 certification, GDPR alignment, EU data hosting, and real participants verified by video, that clears procurement without compromise.

That combination describes what qualitative research can now deliver at scale. Successful businesses use it to drive revenue from VoC programs that connect customer insight directly to sales strategy, product decisions, and future growth. Conveo is built around exactly that model. Over 400 enterprise teams, including Google, FOX, and Bosch, use it to run continuous voice-of-customer programs that generate traceable, stakeholder-ready findings without agency timelines or agency costs.

One qualification matters: Conveo is built for enterprise-scale qualitative programs. Most businesses running ongoing VoC work at volume will get compounding value from the platform. Teams looking for one-off surveys or small-scale pilots will not.

Frequently Asked Questions

What Are Effective Voice of the Customer Interview Questions and Answers?

What Are the Best Voice of the Customer Questions for New Product Development?

What Are Internal Voice of the Customer Survey Questions?

How Many Questions Should a VoC Interview Include?

What Is the Difference Between VoC Survey Questions and VoC Interview Questions?