TL;DR

Campaign validation AI is the practice of gathering real consumer feedback on campaign messages, concepts, and target audiences before a campaign ships. It is not startup idea scoring, content quality checking, or post-launch verification.

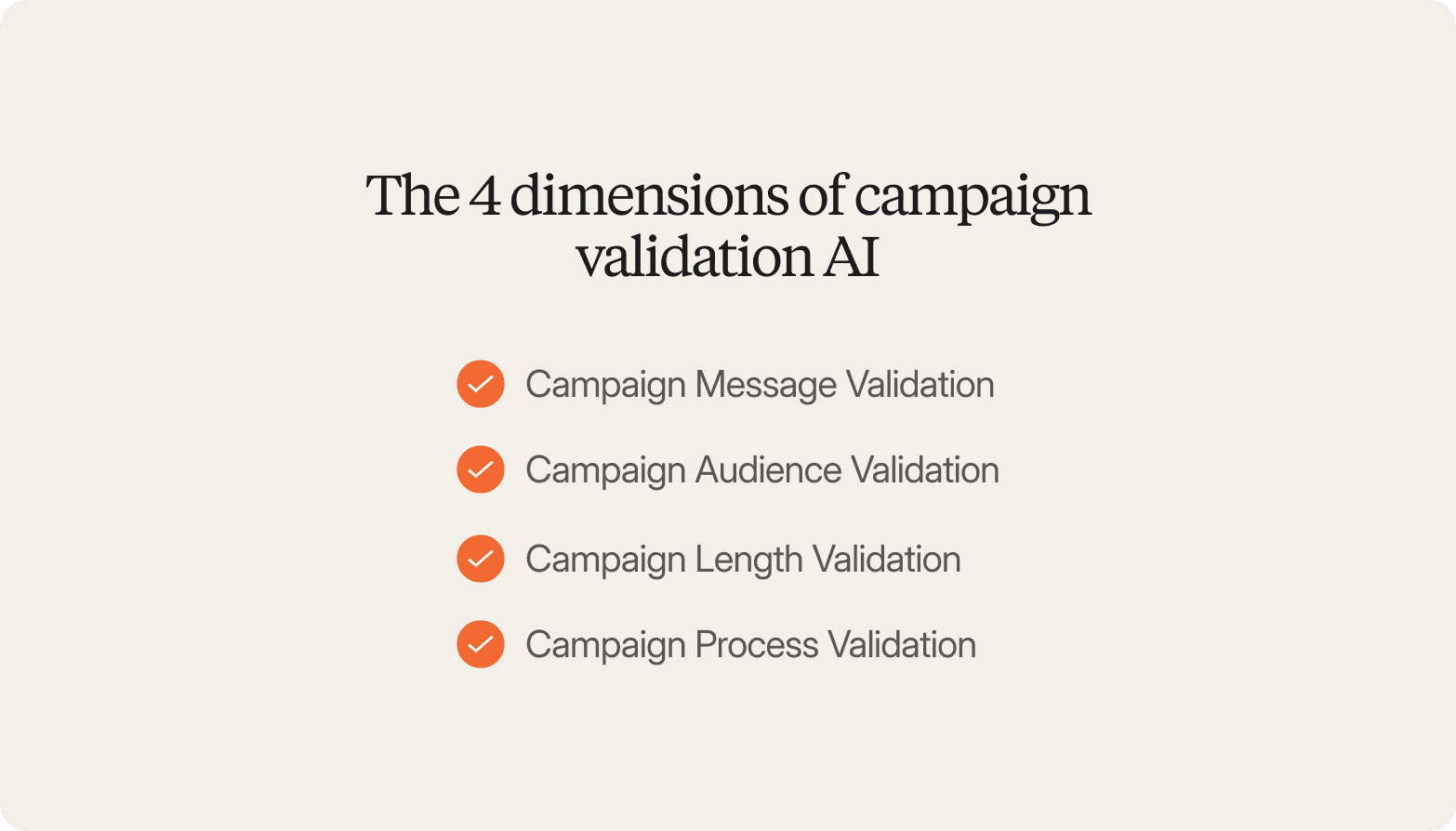

This guide covers four dimensions: message validation, audience validation, length validation, and process validation.

The method that produces real signals is AI-led video interviews with real target consumers, capturing hesitation, tone shifts, and emotional response alongside what people actually say.

Surveys tell you what consumers think. They do not explain why a message landed or fell flat. Algorithmic scoring simulates a response rather than capturing it.

Conveo runs AI-moderated consumer interviews from discussion guide to stakeholder-ready findings in days, not weeks.

Qualitative research has always been the method used to explain why a campaign lands or fails. For most of its history, it has also been the method that arrives too late to change anything.

Many campaigns fail not because the idea was wrong, but because no one asked a real consumer before spending money.

Many organizations skip campaign validation entirely, and the cost surfaces later, when a campaign ships without real consumer input and nothing can be walked back. Teams either proceed on instinct, run a fast survey that answers the wrong question, or wait and miss the window. Campaign validation AI is built to fix that timing mismatch.

But only if teams understand what the term actually means.

Right now, the term describes at least three different things: startup founders use it to score business concepts, data teams use it to check spreadsheets for errors, and compliance teams use it to verify SMS messages. None of these is the problem a Marketing Research Manager or Innovation Director is trying to solve. Even teams that do run validation often make a subtler mistake: they test the execution without first confirming that the underlying consumer tension is real. The Conveo team sees this pattern consistently, and it is where campaigns go wrong before a single asset is briefed.

For marketing, brand, and innovation teams, campaign validation AI means getting real consumer signals on messages, concepts, and audiences before the launch decision is made. That means not a simulated score, but an actual conversation with a real person from the target segment about whether the creative lands, and why.

This guide covers the four dimensions of a complete validation and how AI-led consumer interviews produce findings that leadership will actually act on.

The 4 Dimensions of Campaign Validation AI

Campaign validation is not a single question. It is four distinct questions that together determine whether a campaign is ready to launch and whether it has a real chance of success across every channel, from digital and email to traditional media. Answering all four gives organizations the confidence to commit the budget, backed by evidence that each element works.

Campaign Message Validation

Campaign message validation asks: Does this headline, value proposition, or creative concept land with the real target audience?

It goes beyond internal creative review, which reflects only the team's own judgment, and A/B testing, which happens only after a campaign is live. Concept testing can evaluate any campaign material at any stage, from early positioning territories to finished executions.

The most common finding across Conveo's enterprise customer base is not outright failure. It is that a campaign generates awareness and a positive emotional response, but fails to convert that sentiment into purchase intent. It feels good. It does not drive the behavior the brand needs.

AI-led interviews give marketing teams the evidence they need to surface that gap before production spend is committed, and before the window for a successful campaign closes.

Campaign Audience Validation

Campaign audience validation asks: Are we targeting the right audience?

Marketing teams frequently discover that the segment in the brief describes their pain differently than assumed, or that a secondary segment shows stronger resonance with the core message. Understanding potential reach across the desired audience before media spend is committed is what separates a marketing strategy built on assumptions from one built on evidence. AI-moderated interviews with participants screened for the intended target profile surface these mismatches before the budget is locked.

Campaign Length Validation

Campaign length validation asks: At what point does the target audience disengage?

For video ads, digital content, and multi-step sequences, the timing of attention drop-off is critical. AI-moderated interviews surface where participants lose the thread, what they recall at the end of a longer format, and whether the pacing matches how the audience actually processes the content. The question is not whether the campaign is good. It is whether it sustains interest long enough to deliver its core message before the audience moves on.

Campaign Process Validation

Campaign process validation asks: Does the full campaign sequence make logical sense to the target consumer?

It tests the journey, not just individual assets. Teams need to verify that the narrative arc holds, the call to action feels earned, and there are no friction points that internal teams, too close to the brief, cannot see. AI-moderated interviews that walk participants through a complete campaign sequence surface those structural gaps before the campaign ships.

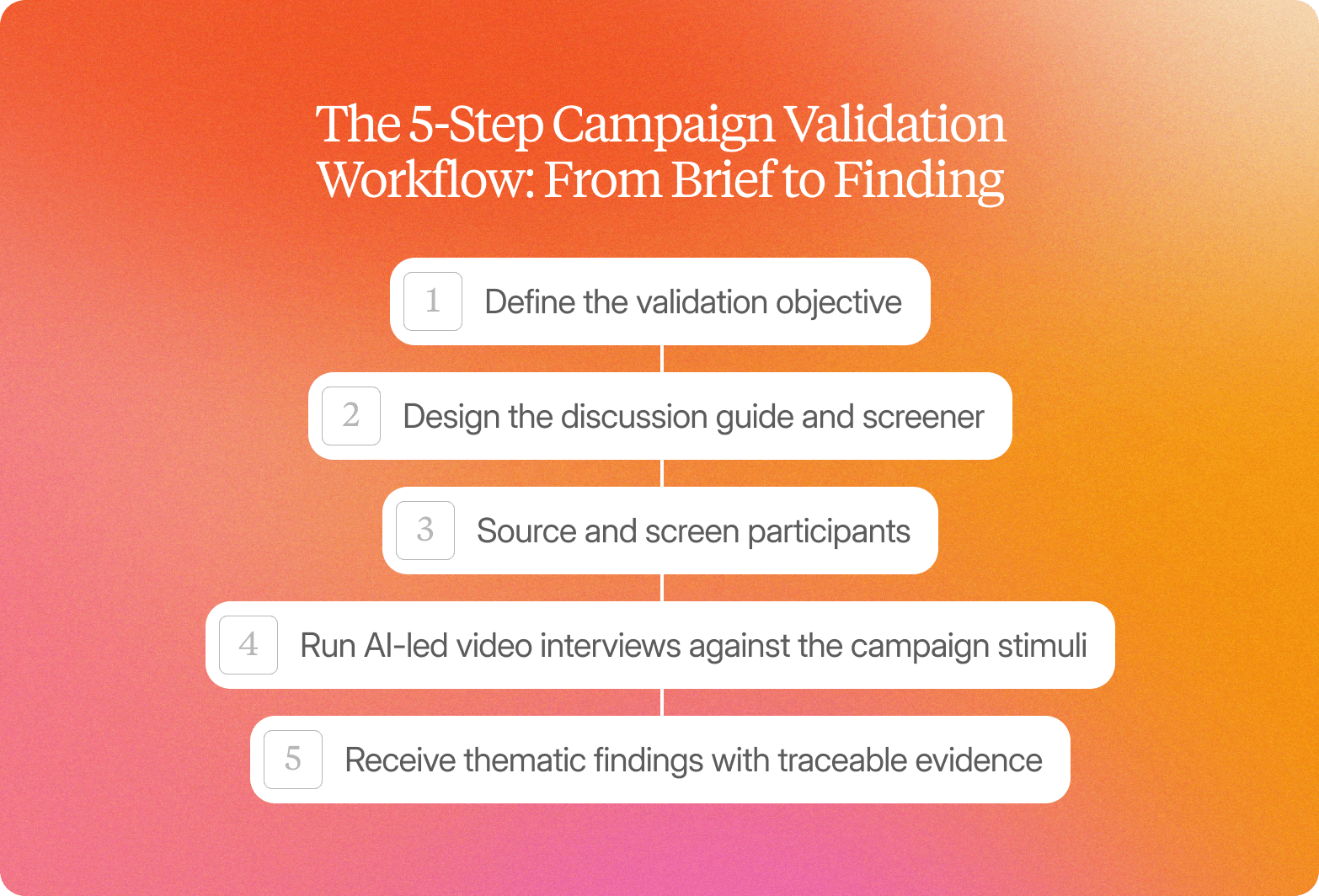

The 5-Step Campaign Validation Workflow: From Brief to Finding

The workflow for a campaign validation study on Conveo runs in five steps. Each step maps to a decision that a marketing or research team controls.

Define the validation objective.

What specific decision does this study need to inform? Message selection before the brief goes to the creative agency? Audience prioritization before media planning? Concept go/no-go before production spend is committed? Define the marketing goals this campaign is designed to achieve, and use those goals to shape both the discussion guide and the participant screener. The clearer the objective, the more actionable the findings.

Design the discussion guide and screener.

The discussion guide frames the campaign stimuli and the probing questions. The screener filters for the right participant profile: the actual target audience segment, not a general consumer panel. Teams can build from a prior study or start from scratch. For a closer look at discussion guide design, see Conveo's in-depth interviews and focus groups pages.

Source and screen participants.

Participant sourcing has historically been the step that adds the most time to a validation cycle. Conveo's built-in panel access, fraud filtering, and incentive management remove this dependency. Teams get direct access to the right participants without scheduling coordination, geographic constraints, or waiting for a moderator to become available. Participants receive a link and complete the interview on their own schedule.

Run AI-led video interviews against the campaign stimuli.

Participants meet Conveo's AI interviewer, which follows the discussion guide, probes based on what each participant actually says, and adapts in real time. Sessions are video-first, with multimodal analysis that blends speech, tone, facial cues, and behavioral responses alongside the verbal answer. Research shows that 83% of participants report being more honest with AI interviewers than with human moderators. Video also captures what structured moderation misses or rationalizes away: the hesitation before an answer, the tone shift when a phrase lands wrong, and the moment a participant contradicts what they said ten minutes earlier. Those signals are what make campaign validation findings credible enough to act on.

Receive thematic findings with traceable evidence.

Findings are delivered as thematic clusters tied to the campaign dimensions under evaluation, supported by verbatim quotes, sentiment arcs, and video clips ready to share with leadership. Because every finding is traceable, teams can review what affected each campaign performance metric, which is key to building better-performing campaigns in the future. Stakeholders can interrogate the data directly in plain language: "Which segment showed the strongest skepticism toward this claim?" "How did responses differ across markets?" The question "how do we know?" has a direct, sourced answer.


AI-moderated consumer interviews conduct the fieldwork. The researcher interprets the findings, connects them to the organizational context, and takes them to the decision-maker. Organizations running ongoing validation programs benefit from this division: the AI surfaces the evidence at scale, and the researcher applies the judgment that no algorithm can replicate. A team of two or three can now run three concurrent validation studies where previously it could manage only one.

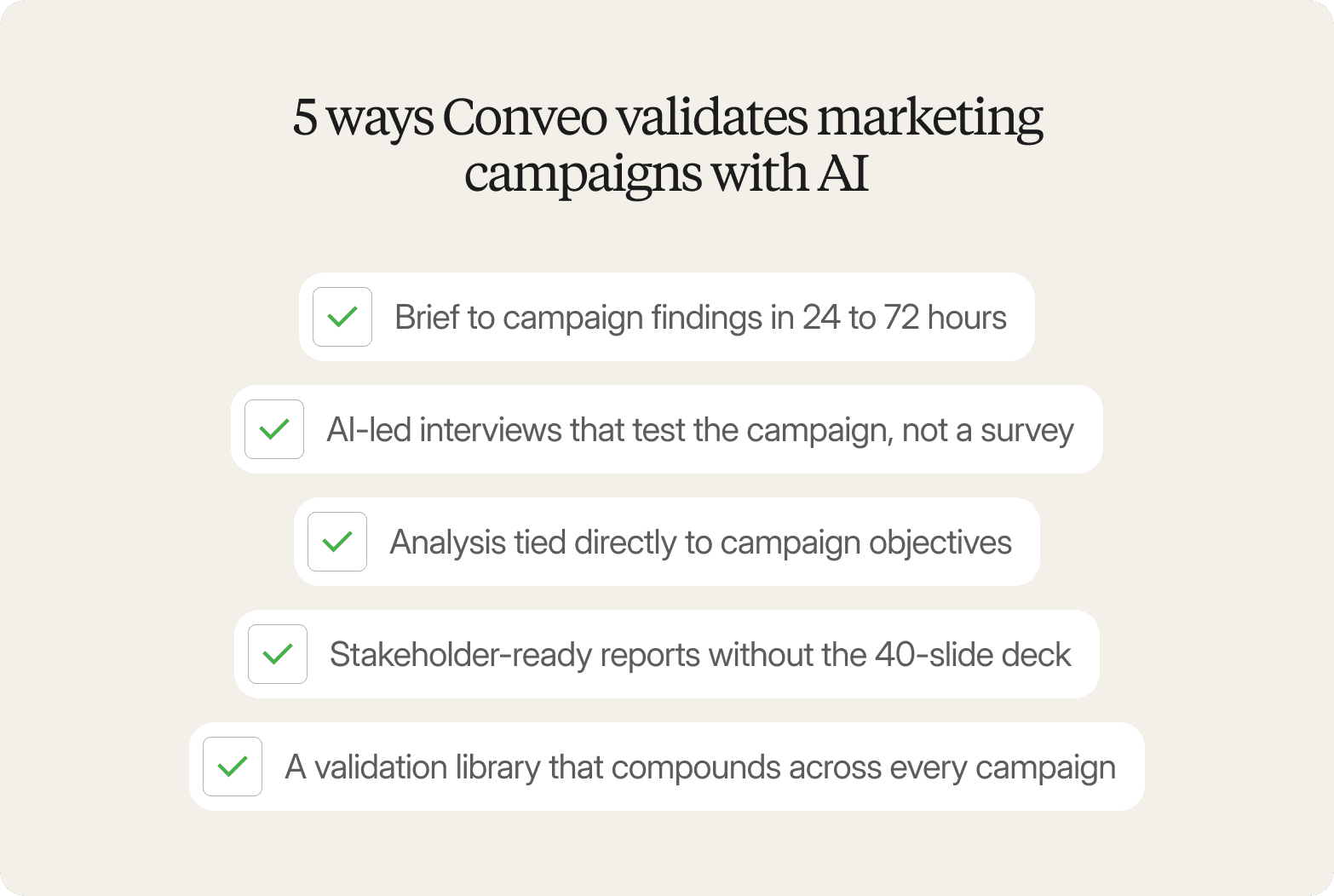

5 Ways Conveo Validates Marketing Campaigns with AI

Conveo is a video-first AI qualitative research platform built for enterprise and mid-market marketing teams that need real consumer signals before a campaign ships. Unlike platforms that model consumer responses algorithmically, Conveo speaks with real participants from the target segment, capturing voice, video, tone, and facial expressions simultaneously.

Brief to campaign findings in 24 to 72 hours. Teams define their participant screener to match the exact target audience: no agency, no scheduling bottleneck, and no waiting until the brief is already locked. Conveo's AI interviewer drafts the discussion guide from the campaign objective.

AI-led interviews, not surveys, to test the campaign. Conveo's AI interviewer holds real conversations with real participants, probing reactions to headlines, value propositions, and creative concepts. It asks follow-up questions, surfaces hesitation, and captures both what participants say and how they say it.

Analysis tied directly to campaign objectives. Every session is transcribed, translated (50+ languages supported), and analyzed against the specific goals the study was designed to measure: message resonance, audience fit, creative recall, or sequence logic, all backed by verbatim quotes and video evidence.

Stakeholder-ready reports without the 40-slide deck. Conveo delivers exportable video clips, emotion charts, and traceable findings structured around the campaign decisions leadership needs to make. Data is protected with encryption at rest, SSO, and regional hosting, and the platform is SOC 2 certified.

A validation library that compounds across every campaign. Every study feeds a secure insight repository, so each campaign builds institutional knowledge about how your target audience responds, and the next brief starts from evidence, not assumptions.

Conveo is built for teams with internal research ownership and ongoing validation programs. If you need a one-time study without an internal champion to interpret and act on findings, a traditional agency engagement may be the better fit.

Ready to get real consumer signals on your next campaign before the brief is locked?

Conveo runs AI-moderated consumer interviews from discussion guide to stakeholder-ready insights in days, not weeks, so your team has the findings that matter before the decision window closes. You can then measure campaign performance against real consumer signals, not assumptions. Trusted by 400+ enterprise teams, including Google, Unilever, and Bosch.

Frequently Asked Questions

What is campaign validation AI?

How does AI validate a marketing campaign?

How is campaign validation AI different from using a survey?

How long does campaign validation AI take compared to agency qual?

Can AI campaign validation produce findings that are credible enough for a CMO or senior stakeholder?