TL;DR

Brand message testing is the practice of exposing real consumers to brand messaging before it goes to market and evaluating two things: whether it communicates what the brand intends, and why it does or doesn't land. Survey scores alone cannot reveal the second.

The core problem it solves: messaging developed internally reflects what the team believes about the brand, not what target customers actually understand or feel when they encounter it for the first time.

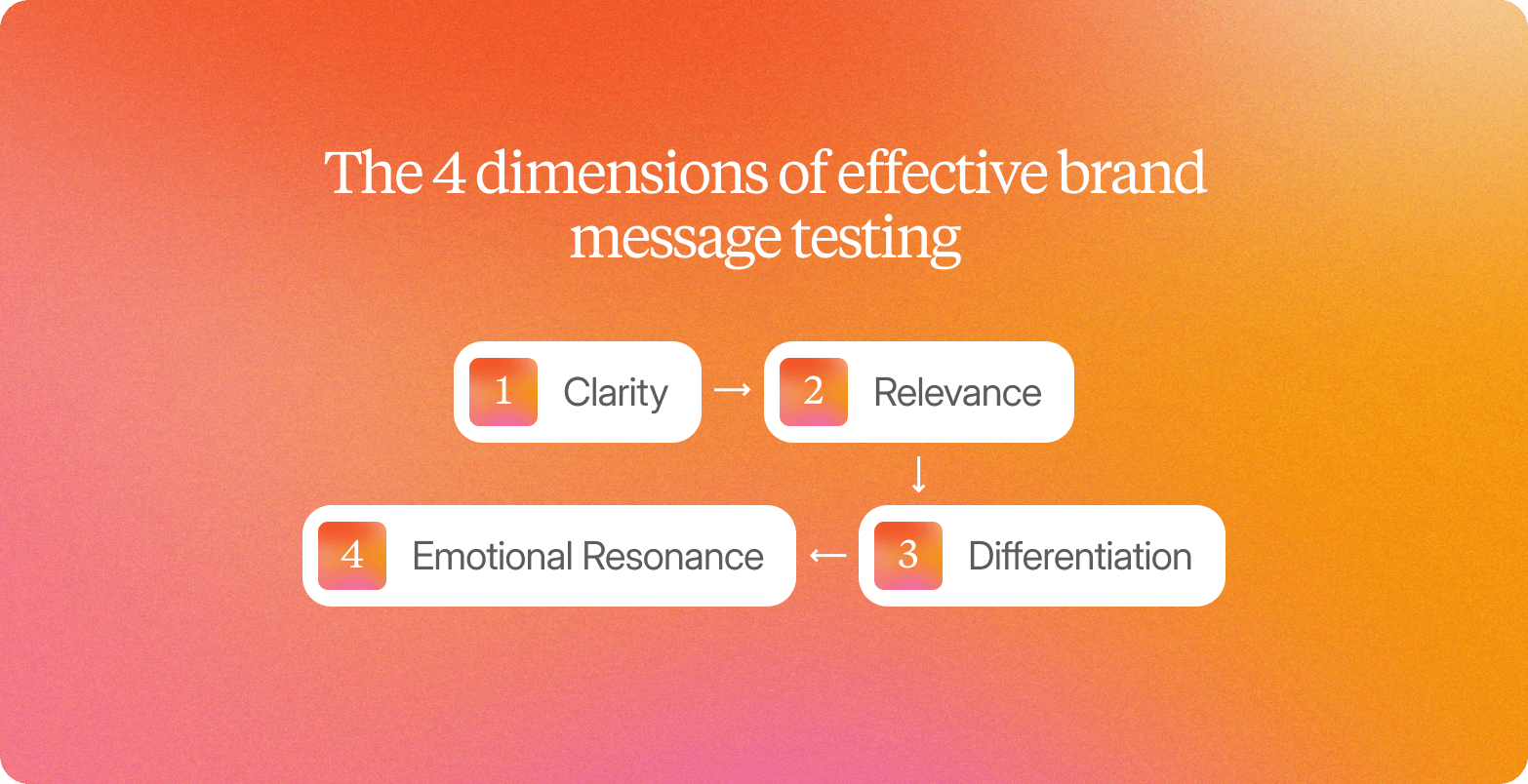

Effective brand message testing evaluates four dimensions: clarity (does the message communicate what was intended), relevance (does it connect to a real pain point the consumer experiences), differentiation (does it feel distinct from the competition), and emotional resonance (does it create the intended feeling).

The most efficient way to close the gap between internal confidence and external reality is through real consumer conversations using open-ended questions, not rating scales, simulated persona responses, or internal review panels.

Teams that know how to test brand messaging before committing to creative or media spend reduce the risk of launching language that performs well in the conference room but fails with the real people it was written for.

The campaign brief looked strong internally. The tagline had survived three rounds of stakeholder feedback, the messaging hierarchy felt tight, and the creative team was confident. Then it went live, and the value proposition that had resonated in every internal review landed flat with the actual target audience, not because the idea was wrong, but because the team had been refining language against their own understanding of the product, not against the customer's experience of the problem it solves.

That gap is where most brand messaging breaks down. Many marketers discover this only after the homepage headline is live, the landing page is indexed, and campaigns are already running spend against language that was never tested with the intended audience. In internal reviews, people aren't encountering the message as a potential customer would: without context, without product knowledge, and without any reason to give the team the benefit of the doubt.

The operational barriers that once made pre-launch consumer validation impractical are no longer fixed constraints. Agency timelines measured in weeks, sample sizes too small to be definitive, synthesis that took as long as the research itself: those limits belong to a previous era of qualitative research. This article is a practical guide to testing brand messaging before the gap costs you. It covers why certain methods surface the failure modes that matter, why others only measure surface preference, and what a credible testing process actually produces.

Why Surveys Alone Cannot Validate Your Value Proposition

Surveys have a legitimate role in message testing. They scale to large samples quickly, quantify preferences across audience segments, and can tell you with statistical confidence that Message A outperformed Message B. That quantitative data is genuinely useful for some decisions.

The problem is what surveys cannot tell you: why.

When a respondent rates a message "clear" or "compelling," that score reflects their reaction to the framing of the question as much as it reflects their understanding of the message itself. Survey scales do not reveal how a participant interpreted the message before answering, what associations they brought to specific words, or whether the value proposition mapped onto a pain point they actually experience in their work.

This is one reason many marketers see low conversion rates on otherwise well-trafficked pages: the message is being read, but not as it was written. What message testing actually requires is two things surveys cannot provide:

Open-ended probing that follows what the participant actually says

The ability to capture hesitation, reframing, and emotional reaction in real time

A participant who pauses, then quietly reframes your positioning in their own words, is telling you something critical. A written response box does not preserve that signal.

The 4 Dimensions of Effective Brand Message Testing

Clarity

The test for clarity is direct: can someone who has never heard of your company read your homepage headline and immediately understand what you offer and who it is for? Not after reading the subhead, but on the first line.

Clarity failures are almost always invisible to the people who wrote the message. PandaDoc discovered this through consumer conversations: their phrase "on-brand docs" consistently led prospects to ask whether the company served medical professionals. The internal team heard "document templates with consistent branding." Real customers heard something else entirely. Words that carry precise meaning inside the building carry no meaning at all to a first-time visitor arriving from a search. The fix is not simpler language for its own sake; it is product messaging tested outside the building, with real people who match your target market.

Relevance

Relevance fails quietly. The message goes live, the campaign runs, and conversion rates come back soft. Not because the product was wrong, but because the language never matched how the customer was actually thinking about the problem.

Internal teams create messaging from the product outward: here is what we built, here is why it matters. Customers read from their pain points inward: Does this describe what I'm dealing with? When those two directions don't meet, the message lands as noise: technically accurate, practically invisible.

The gap usually isn't about creativity. It's about source material. Teams that rely on internal assumptions rather than in-depth interviews with ideal customers will consistently miss the register that makes a message feel as if it was written specifically for the person reading it.

Differentiation

Most B2B product messaging explains what the platform does. It skips the harder question: why is this a better answer than what the customer is already using?

That gap is where differentiation lives. Customers don't evaluate your brand in isolation. They evaluate it against the competition already in the room: the agency they've worked with for years, the survey tool their team knows, the solution that's "good enough." Loom discovered this when early messaging described the product as a "video service." The description was accurate. It gave prospects no reason to choose Loom over the screen-recording tool already running on their laptop. The message described a category, not a choice.

If your messaging doesn't address that comparison directly, you're leaving the customer to make the connection themselves. Most won't.

For brand and marketing teams, this is where most messaging breaks down. The message explains the offering. It doesn't explain why this approach produces better insights than a survey or faster validation than waiting on an agency. Without that contrast, the message lands as information, not as a reason to act.

Emotional Resonance

Surveys can capture whether a message is liked. They cannot capture what it actually does to a person. Emotional resonance is the difference between a consumer who says "yes, that sounds good" and one who leans forward because the message just described their exact situation. That shift shows up in tone, in pacing, in the moment of hesitation before they agree with a claim they're not fully convinced by.

This is where video-first market research earns its value. When a participant's voice quickens or their answer trails off, that signal is visible. It cannot be coded into a Likert scale.

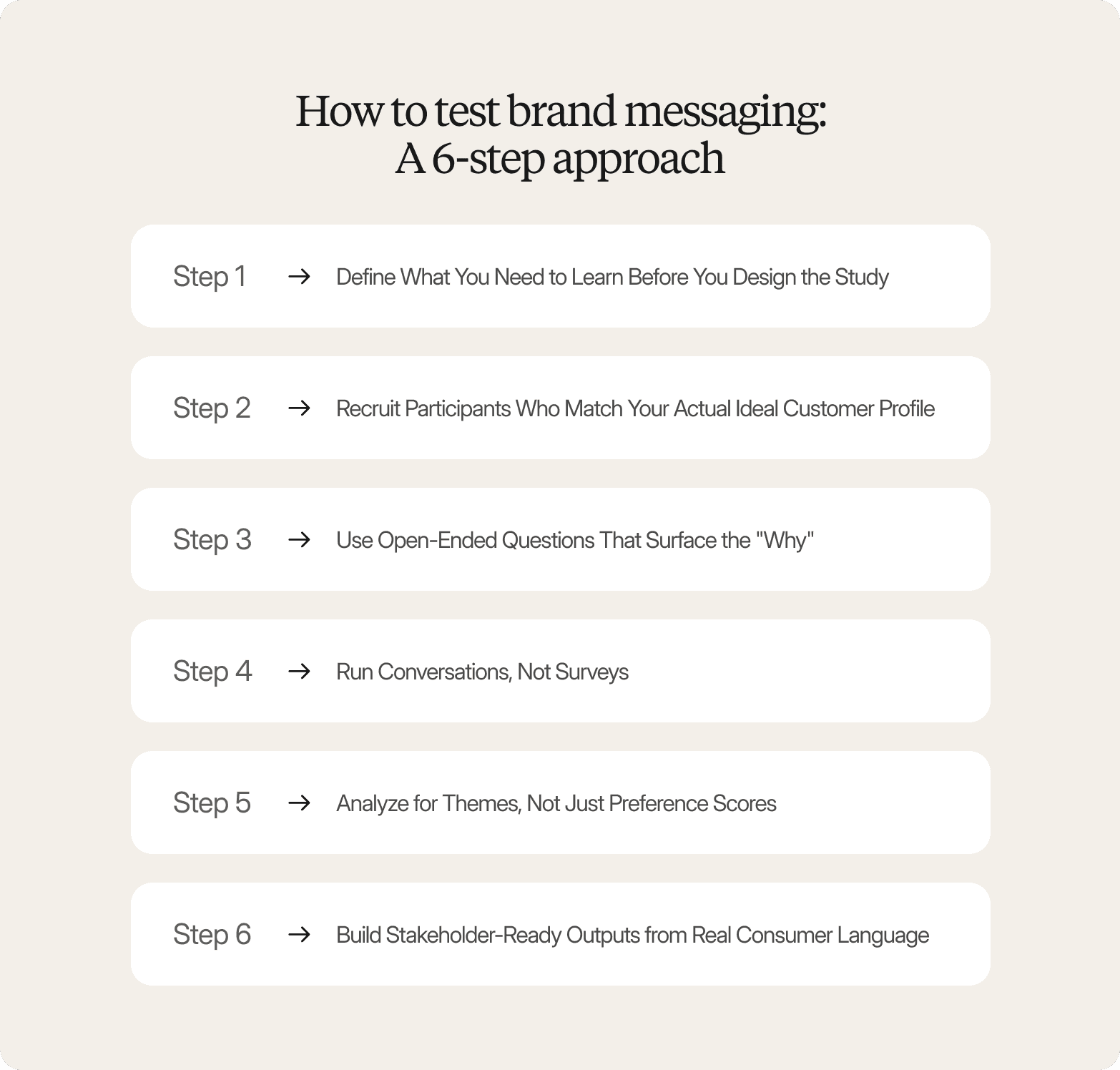

How to Test Brand Messaging: A 6-Step Approach

1. Define What You Need to Learn Before You Design the Study

Start with the decision this test needs to support, not the questions you want to ask. Is the team validating a final tagline before launch, or trying to identify why a message isn't landing with a specific segment? That learning goal determines who to recruit, what to probe, and what a useful result looks like. Without it, you generate reactions, not insights.

2. Recruit Participants Who Match Your Actual Ideal Customer Profile

Testing messaging with a general consumer panel is one of the most common ways brand research goes wrong. If your messaging is built for specific personas, such as VP-level buyers in financial services or category managers at large CPG companies, a broad panel gives you broad reactions. Those reactions may feel like a signal. They're mostly noise.

In practice, sourcing and vetting an ideal customer profile through traditional channels takes time that most marketing teams don't have before a decision window closes. Enterprise platforms with vetted global panels handle that recruitment infrastructure directly, matching participants to defined criteria and filtering for quality automatically.

3. Use Open-Ended Questions That Surface the "Why"

Five question patterns consistently produce the most diagnostic output in brand message testing:

"What do you think this message is trying to say?" Surfaces how the message is actually being decoded, not how it was intended.

"Which words or phrases feel unclear or unexpected?" Participants identify friction points that a score would never reveal.

"What resonates most, and what resonates least?" Forces participants to prioritize rather than give blanket approval.

"Does this change how you think about the brand? How?" Determines whether the message is doing real perceptual work.

"If you could change one thing about this message, what would it be?" Consistently surfaces the sharpest consumer language to answer questions about fit and clarity.

None of these can be answered with a single word or a score. Participants have to externalize their actual interpretation, which is the only way to understand whether messages resonate as designed or are being read in a way the team never anticipated.

4. Run Conversations, Not Surveys

Surveys present the same questions in the same order to every respondent, regardless of what they say. A conversation follows the participant. When someone hesitates, a skilled moderator probes. When a respondent uses an unexpected word to describe your brand, the conversation can stop and explore what they meant. That adaptive quality, which mirrors the best in-depth interviews, is where the most useful messaging intelligence lives.

AI-moderated video interviews replicate this dynamic at scale. Conveo's AI interviewer, for example, senses hesitation, mirrors the participant's language, and probes based on what they actually say rather than following a fixed script, across 50+ languages, asynchronously, so hundreds of conversations can run in parallel. Teams surface the full range of how target customers interpret a message, including the interpretations the team never anticipated, and use that diagnostic signal to fine-tune copy before it reaches a live page.

5. Analyze for Themes, Not Just Preference Scores

Look for language that recurs across participants: the words they chose unprompted, the emotional reactions that surfaced in consistent contexts, the framings they reached for when describing the pain points your message is supposed to solve. Those patterns reveal why a message landed or didn't. The consumer's own words are often the most actionable output of the entire study, and the most useful raw material to fine-tune copy before launch.
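For teams that export transcripts, the recurrence check described above can be roughed out in a few lines of Python. This is a minimal sketch, not a description of how any particular platform performs its analysis: the `responses` data, the `STOPWORDS` list, and the `phrases` and `recurring_phrases` helpers are all hypothetical and invented for illustration. It counts how many distinct participants used each two-word phrase and keeps the phrases that recur.

```python
from collections import Counter
import re

# Hypothetical open-ended responses keyed by participant ID (illustrative, not real study data).
responses = {
    "p1": "I thought on-brand docs meant something for doctors, honestly.",
    "p2": "On-brand docs sounds like it is for doctors or clinics.",
    "p3": "It seems to be about templates that match your branding.",
}

# A deliberately tiny stopword list; a real study would need a fuller one.
STOPWORDS = {"i", "it", "is", "the", "a", "for", "to", "or", "of", "that", "be", "like", "your"}


def phrases(text, n=2):
    """Return the set of n-word phrases in one response, skipping stopword-only phrases."""
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    grams = zip(*(words[i:] for i in range(n)))
    return {" ".join(g) for g in grams if not all(w in STOPWORDS for w in g)}


def recurring_phrases(responses, n=2, min_participants=2):
    """Count how many distinct participants used each phrase and keep the ones that recur."""
    counts = Counter()
    for text in responses.values():
        # phrases() returns a set, so each participant counts once per phrase.
        counts.update(phrases(text, n))
    return {p: c for p, c in counts.items() if c >= min_participants}


themes = recurring_phrases(responses)
print(themes)
```

Counting participants rather than raw mentions keeps one talkative respondent from dominating the result. A tally like this only points at candidate themes; reading the surrounding quotes is still what explains why the phrase keeps coming up.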

6. Build Stakeholder-Ready Outputs from Real Consumer Language

The formats that move senior stakeholders from "interesting" to "decided" are specific: direct quotes attributed to a real participant profile, video clips timestamped to the moment a phrase landed or fell flat, and a summary of three to five findings, each carrying a concrete recommended change. The output is not a debrief deck but a decision document that connects research directly to brand strategy.

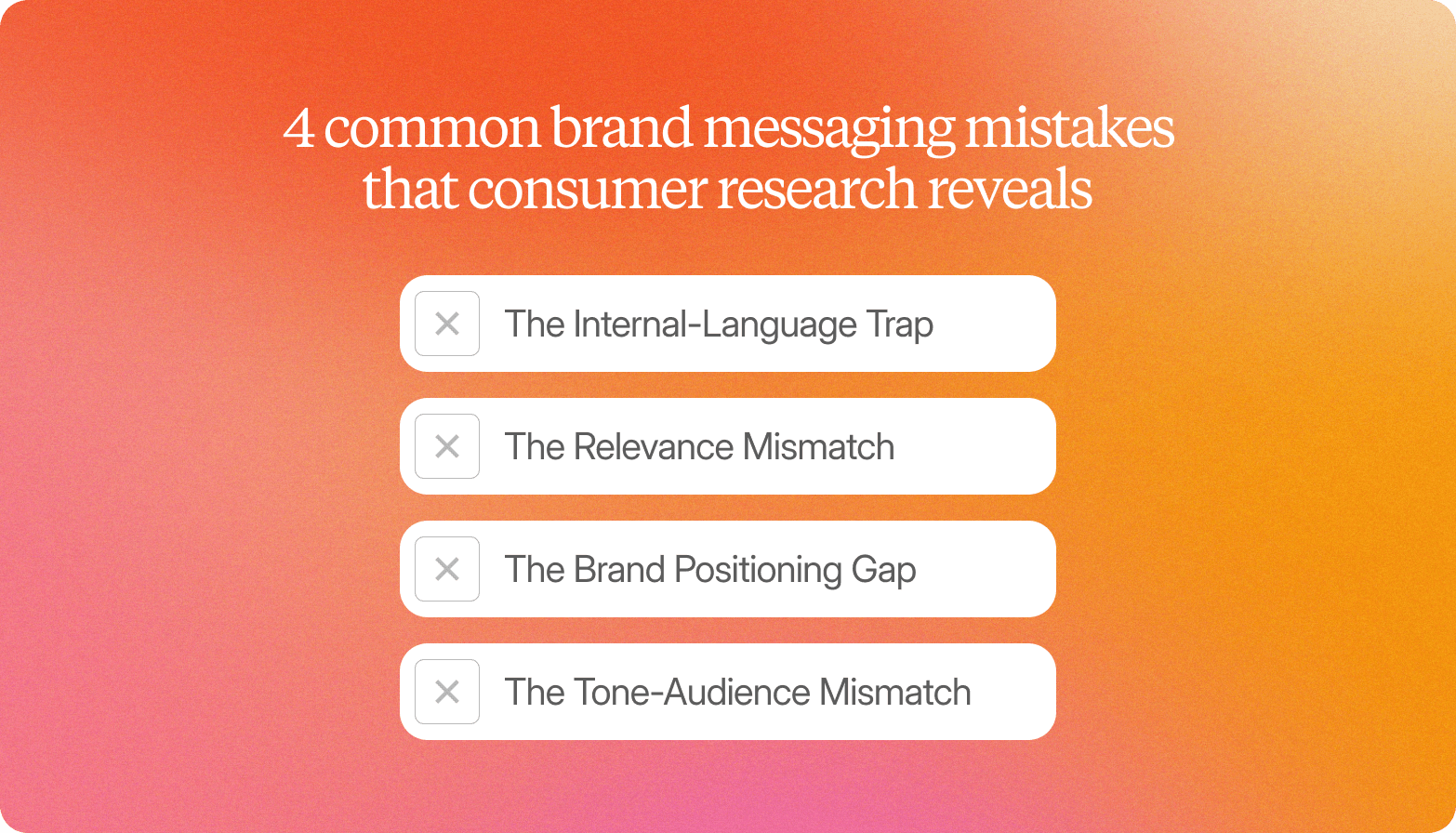

4 Common Brand Messaging Mistakes That Consumer Research Reveals

The Internal-Language Trap

Teams create messaging inside a shared vocabulary. By the time copy reaches the review stage, everyone already knows what the words mean, whether it appears on a landing page, a homepage headline, or a blog post. The problem surfaces in consumer conversations: participants read the messaging carefully and still cannot explain what the product does in their own words. This is a clarity failure that peer review cannot catch, because the people reviewing already have the context that real customers are missing.

The Relevance Mismatch

A message can be clear and still miss the buyer entirely. This happens when marketing teams optimize for a benefit their target market does not actually prioritize, often because ease of use is what the internal team finds most compelling, not what senior buyers evaluate. Real conversations reveal when product messaging never addresses the buyer's actual decision criteria. The approval signal and the sales signal are two different things.

The Brand Positioning Gap

Participants understand the message. They find it relevant. They express no urgency to act. This is the differentiation gap: brand positioning that accurately describes a category without giving any reason to choose this company over the competition that a buyer already knows. It is invisible in survey data, where comprehension and approval scores look fine even when conversion potential is close to zero. It only becomes visible when target users are asked to explain why they would investigate further, and cannot.

The Tone-Audience Mismatch

A voice calibrated for a millennial brand manager can land as dismissive with the CMI director or procurement lead who also evaluates the decision. Participants rarely flag tone directly; they describe it as a feeling: the message "wasn't for them," or didn't feel on brand for the context in which they encountered it. That signal only surfaces in a real conversation, not in a rating scale.

How Conveo Helps Brand and Insights Teams Test Messaging With Real Consumer Conversations

Conveo is a video-first AI qualitative research platform built around one principle: every finding should be traceable to a real human participant, not a simulated persona. Over 400 enterprises, including Google, Unilever, and Bosch, rely on Conveo to run qualitative research at scale. The platform is SOC 2 certified, GDPR-compliant, and supports EU regional data hosting: the compliance infrastructure enterprise procurement requires before any research platform touches sensitive positioning strategy.

Unlike platforms that generate consumer responses through trained personas or synthetic data, Conveo grounds every finding in real participant reactions: the hesitation before agreeing with a claim, the qualifier someone adds when a phrase doesn't quite land, the tone shift when a competitor is mentioned. 83% of participants report greater honesty with AI interviewers compared to human moderators, which means the signal Conveo surfaces reflects what participants actually think, not what they think they are expected to say.

"Conveo's video-first approach is a real differentiating methodological advantage. The ability to distill insights from reactions and not just hear answers adds context you simply can't get from transcript-only tools, or any other tool in the market for that matter."

Senior Marketing Research & Insights Manager, Google

Recruitment is handled automatically. Teams define their participant profile, and Conveo recruits from vetted global panels, with fraud filtering and incentive management built in. If your brand messaging is directed at VP-level buyers in financial services or category managers at large CPG companies, Conveo sources participants who match those criteria, not a general consumer panel that introduces noise.

AI moderation that adapts in real time. Participants join on their own schedule via a link and meet an AI interviewer that greets them naturally, mirrors their language, senses hesitation, and follows up based on what they actually say, in more than 20 languages. That adaptive probing is how unexpected consumer language surfaces: the phrase participants reach for instead of yours, the reframing that reveals the message landed differently than intended.

An insight library that builds across studies. Because Conveo connects findings across research projects, each round of message testing builds on the last. Consumer language patterns from a tagline test inform the next value proposition review. The insight library compounds rather than resets, which means the second study is more valuable than the first, and the tenth more valuable than the second.

Findings in days, not weeks. Because sessions run asynchronously, hundreds of conversations can run in parallel. Timelines compress from weeks to days without reducing the depth that makes findings credible to senior stakeholders.

Analysis beyond the transcript. Multimodal analysis blends speech, tone, facial cues, and on-screen objects to surface what transcripts miss: the brow furrow at a tagline, the shift in tone when a competitor is mentioned. Outputs include thematic clusters, direct quotes, and video clips that land in a leadership meeting without a 40-slide debrief.

Conveo is built for teams running ongoing qualitative research programs with real research ownership. If your team needs a one-off survey, there are faster, lighter options. If you're building a continuous consumer understanding practice, one where each study makes the next one sharper, Conveo is built for that scale.

Frequently Asked Questions

What is message testing?

What is the difference between message testing and A/B testing?

What open-ended questions should you ask during brand message testing?

How do you test SaaS brand messaging with target customers?

How often should brand messaging testing be part of your marketing strategy?