TL;DR

VoC metrics like net promoter score (NPS) and customer satisfaction score (CSAT) measure what happened, not why. A score tells you a customer was dissatisfied. It does not tell you which part of the experience drove that feeling, what they would have preferred instead, or whether the underlying cause is systemic. Teams that treat VoC metrics as endpoints make decisions without the customer insights those decisions require.

A three-tier key performance indicator (KPI) hierarchy gives VoC programs structure. Tier one covers satisfaction metrics (NPS, CSAT, and customer effort score, or CES), which signal overall health. Tier two covers behavioral signals: repeat customer rate, churn, and support contact frequency. Tier three covers the qualitative feedback layer: the motivations, language, and reasoning that explain what tiers one and two are showing. Most enterprise VoC programs are strong on tier one and weak on tier three.

Qualitative interviews belong in the VoC stack when scores shift without explanation, when the team is entering a new segment or market, or when a strategic decision requires more than directional data. Teams that run AI-moderated video interviews alongside quantitative tracking report that they can explain VoC metric movements to senior stakeholders far more clearly.

Tracking VoC metrics has never been the hard part. Most enterprise teams have a net promoter score (NPS) in a dashboard, a customer satisfaction score (CSAT) in a quarterly report, and a customer effort score (CES) somewhere in a customer service workflow. What they rarely have is a clear line between a score moving and a decision getting made.

The structural barriers that made voice of the customer measurement slow and hard to act on were never about data collection. They were about synthesis: manual transcription, scheduling bottlenecks, customer insights buried in decks that no one revisited. Most customer programs compound this by building around data collection cadences rather than decision cadences, accumulating customer data that sits disconnected from the moments where it would actually change behavior.

NPS tells you that customer sentiment has moved. CSAT tells you whether a transaction went well or badly. Neither tells you why, and without that causal link, VoC metrics function as lagging indicators rather than decision inputs. A score drops three points. The dashboard turns red. The team spends two weeks guessing at the cause, while customers expect a faster, better-informed response.
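For reference, here is a minimal sketch of how these scores are commonly computed, assuming the widespread conventions: NPS as the percentage of promoters (9 or 10 on a 0 to 10 scale) minus the percentage of detractors (0 to 6), CSAT as the share of 4 and 5 responses on a 1 to 5 scale, and CES as a mean rating. Programs that use other scales or cutoffs should substitute their own. Note that nothing in the arithmetic carries the reason behind any response.

```python
from statistics import fmean


def nps(scores: list[int]) -> float:
    """% promoters (9-10) minus % detractors (0-6) on a 0-10 scale."""
    if not scores:
        raise ValueError("no responses")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("NPS responses must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def csat(scores: list[int]) -> float:
    """Top-2-box CSAT: % of responses scoring 4 or 5 on a 1-5 scale (assumed convention)."""
    if not scores:
        raise ValueError("no responses")
    if any(s < 1 or s > 5 for s in scores):
        raise ValueError("CSAT responses must be on a 1-5 scale")
    return 100 * sum(1 for s in scores if s >= 4) / len(scores)


def ces(scores: list[int]) -> float:
    """CES as the mean rating on whatever effort scale the program uses (assumption)."""
    if not scores:
        raise ValueError("no responses")
    return fmean(scores)


print(nps([10, 9, 8, 7, 6, 3]))  # 2 promoters, 2 detractors out of 6 -> 0.0
print(csat([5, 4, 3, 2]))        # 2 of 4 in the top two boxes -> 50.0
```

Two very different response sets can produce the same NPS, which is exactly why the score alone cannot say what changed.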

Effective VoC measurement requires a KPI hierarchy that connects quantitative and qualitative data to sourced evidence: the video clips, verbatim quotes, and behavioral signals that show stakeholders what actually changed and why it matters. Without that architecture, VoC stays a satisfaction dashboard rather than a traceable decision engine.

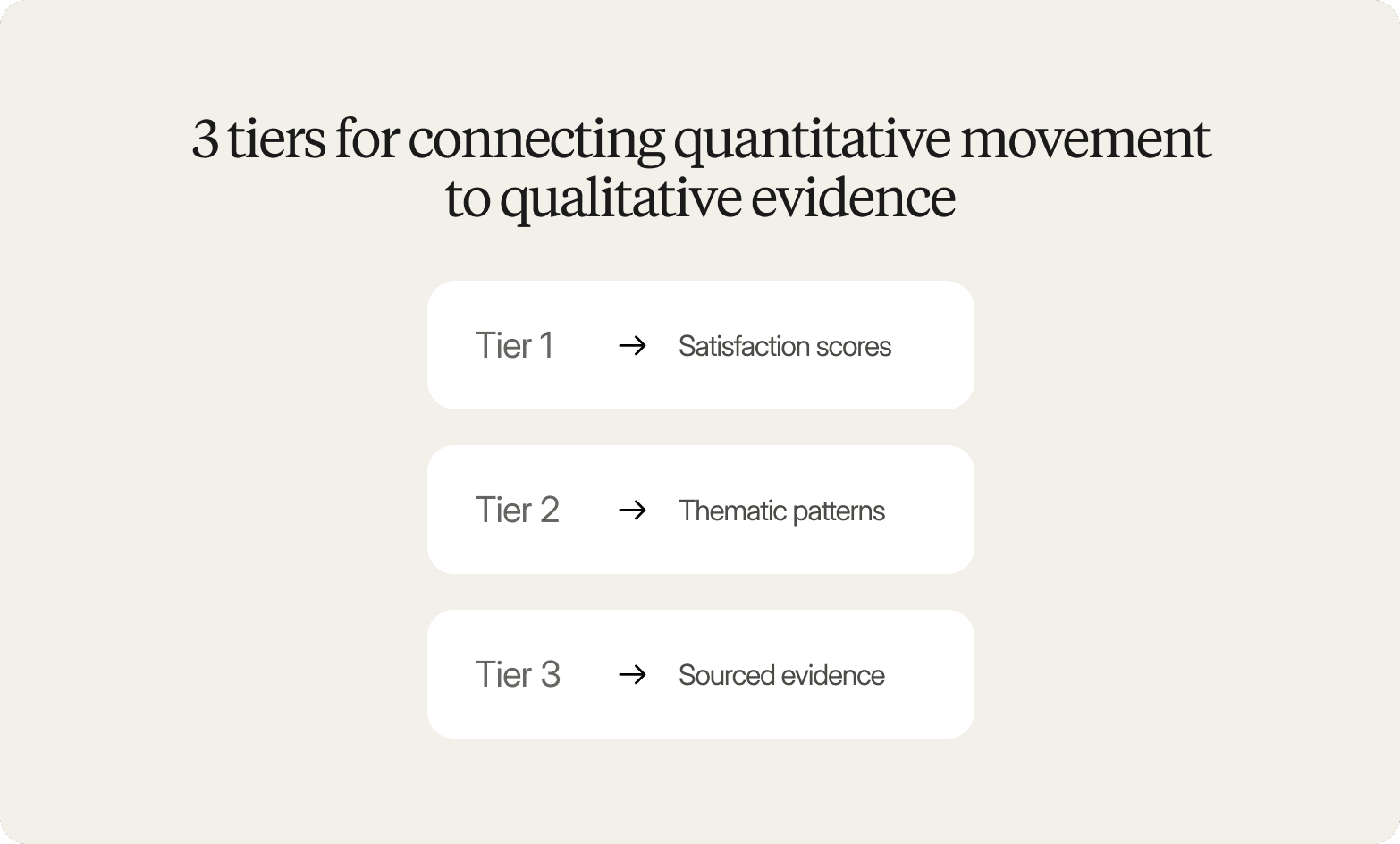

The VoC KPI Hierarchy: 3 tiers for connecting quantitative movement to qualitative evidence

Voice of the customer KPI programs rarely fail because teams pick the wrong metrics. They fail because those metrics have no evidence underneath them. A score without a cause is a number waiting to be argued over.

The structure that prevents this is a three-tier hierarchy.

Tier 1: Satisfaction scores

Net promoter score (NPS), customer satisfaction score (CSAT), and customer effort score (CES) sit at the top because they measure outcomes at scale. Some teams also track a customer loyalty index to gauge long-term relationship strength alongside transactional satisfaction scores. These metrics tell you the score moved. They do not tell you why it moved, which segment drove the shift, or what a team should actually change.

Tier 2: Thematic patterns

Recurring customer feedback themes, such as checkout friction, service quality gaps, onboarding confusion, and pricing resistance, sit in the middle tier. They explain score movement, but only when tracked consistently across feedback sources and time periods. A theme that surfaces once in a single study is an observation. A theme that recurs across six studies over two quarters is a signal.

Tier 3: Sourced evidence

Video clips, verbatim quotes, and customer behavior observations anchor the hierarchy at the base. This is the layer that makes every VoC metric auditable. When a stakeholder challenges a finding, the answer is not a methodology footnote. It is a clip of a real customer explaining the problem in their own words.
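The Tier 2 rule of thumb (one study is an observation, recurrence across studies and quarters is a signal) can be made explicit. The sketch below counts distinct studies and distinct quarters per theme, so repeated mentions inside a single study do not inflate a theme. The `ThemeMention` record and the thresholds of three studies and two quarters are illustrative assumptions, not a standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ThemeMention:
    theme: str      # coded theme label, e.g. "checkout friction"
    study_id: str   # study the mention came from
    quarter: str    # reporting period, e.g. "2024-Q3"


def classify_themes(mentions: list[ThemeMention],
                    min_studies: int = 3, min_quarters: int = 2) -> dict[str, str]:
    """Label each theme 'signal' or 'observation' by how widely it recurs.

    Thresholds are illustrative assumptions.
    """
    studies: dict[str, set[str]] = defaultdict(set)
    quarters: dict[str, set[str]] = defaultdict(set)
    for m in mentions:
        # Sets count each study/quarter once, however often the theme is mentioned.
        studies[m.theme].add(m.study_id)
        quarters[m.theme].add(m.quarter)
    return {
        theme: "signal"
        if len(studies[theme]) >= min_studies and len(quarters[theme]) >= min_quarters
        else "observation"
        for theme in studies
    }
```

Fed the six-studies-over-two-quarters case from the text, "checkout friction" comes back as a signal, while a theme seen in only one study stays an observation however many times it was mentioned there.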

The traceability requirement is straightforward: every metric movement should link to a theme, and every theme should link to specific customer interactions. Without that chain, VoC metrics are vulnerable to the same credibility problems as any other unsourced claim.
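The traceability requirement can be checked mechanically. Here is a minimal sketch, assuming a hypothetical data model where each metric movement holds its themes and each theme holds its evidence; the audit reports every place where the metric-to-theme-to-interaction chain is broken.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    interaction_id: str  # source interview or conversation (hypothetical ID scheme)
    kind: str            # "video_clip", "verbatim_quote", or "behavior_observation"
    reference: str       # e.g. a clip timestamp or the quote text


@dataclass
class Theme:
    name: str
    evidence: list[Evidence] = field(default_factory=list)


@dataclass
class MetricMovement:
    metric: str          # e.g. "NPS"
    delta: float         # change versus the prior period
    themes: list[Theme] = field(default_factory=list)


def audit_trace(movement: MetricMovement) -> list[str]:
    """Return every gap in the metric -> theme -> evidence chain (empty list = traceable)."""
    gaps = []
    if not movement.themes:
        gaps.append(f"{movement.metric} {movement.delta:+g} has no linked theme")
    for theme in movement.themes:
        if not theme.evidence:
            gaps.append(f"theme '{theme.name}' has no linked customer interaction")
    return gaps
```

An empty result means every claim in the report can be traced back to a specific customer interaction.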

Consider a concrete scenario: a brand team sees NPS drop eight points in Q3. They open the dashboard, see "packaging confusion" as the top recurring theme across 40 interviews, and click through to three video clips of customers demonstrating the problem at the point of use. That sequence, from score to theme to evidence, is what makes VoC insights actionable rather than debatable.

Decision framework: Choosing the right VoC metrics for your research purpose

Not all VoC metrics serve the same research purpose. The metric that helps a product team understand why feature adoption dropped is not the same metric a brand team needs to evaluate whether a messaging change landed. Integrating VoC metrics without a clear framework produces data that is technically accurate but strategically useless.

A practical framework separates VoC measurement into three categories based on the question being asked:

Diagnostic Metrics (why is this happening?)

Open-ended qualitative feedback to surface root causes and uncover the customer needs that existing products or processes are not meeting. Best for product teams investigating feature adoption drop-offs and CX teams diagnosing spikes in support ticket volume before the pattern compounds.

Evaluative Metrics (did this work?)

Pre/post customer satisfaction scores paired with thematic feedback to measure whether a change improved the customer experience. Best for brand teams testing new messaging against customer expectations and UX teams validating redesigns against a defined baseline.

Predictive Metrics (what will happen next?)

Sentiment analysis and recurring theme tracking to forecast churn risk, customer retention likelihood, or shifts in customer preferences over time. Best for customer success teams monitoring account health and innovation teams pressure-testing market fit before a launch commitment.
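The three categories can be captured as a simple lookup, with the evaluative case made concrete as a pre/post comparison. This is a sketch: the method lists paraphrase the framework above, and the top-2-box CSAT convention (share of 4 and 5 ratings on a 1 to 5 scale) is an assumption.

```python
from enum import Enum


class Purpose(Enum):
    DIAGNOSTIC = "why is this happening?"
    EVALUATIVE = "did this work?"
    PREDICTIVE = "what will happen next?"


# Methods per category, taken from the framework above.
METHODS = {
    Purpose.DIAGNOSTIC: ["open-ended qualitative feedback"],
    Purpose.EVALUATIVE: ["pre/post customer satisfaction scores", "thematic feedback"],
    Purpose.PREDICTIVE: ["sentiment analysis", "recurring theme tracking"],
}


def top2_share(scores: list[int]) -> float:
    """% of 1-5 responses scoring 4 or 5 (assumed CSAT convention)."""
    return 100 * sum(1 for s in scores if s >= 4) / len(scores)


def pre_post_delta(baseline: list[int], after: list[int]) -> float:
    """Evaluative check: change in top-2-box CSAT from baseline to post-change sample.

    A raw difference ignores sampling noise; a real evaluation should also
    check sample sizes and statistical significance.
    """
    return top2_share(after) - top2_share(baseline)


purpose = Purpose.EVALUATIVE
print(purpose.value, METHODS[purpose])
print(pre_post_delta([3, 4, 2, 5], [4, 5, 5, 3]))  # 75.0 - 50.0 -> 25.0
```

Starting from the question (the enum value) rather than from the available metric is the point of the framework: the category determines which methods are worth running.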

One structural tradeoff determines whether any of these VoC KPI categories actually influence decisions: the decision window. If a decision needs to be made in two weeks, a 6-to-12-week agency research cycle delivers findings after the choice has already been made. Surveys are fast and scale easily but rarely explain what drove the score. Traditional qualitative research provides the depth behind the number but rarely arrives in time.

Conveo resolves both constraints by running open-ended, AI-moderated video interviews at scale. Instead of choosing between a fast score and a slow explanation, teams get the drivers behind the score (the customer needs, preferences, and experiences that explain the movement), structured and thematically coded within days of fielding.
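The decision-window test reduces to date arithmetic: a method is only useful if its findings land on or before the decision date. In this sketch, the six-week figure is the low end of the agency range cited above; the ten-day "rapid study" turnaround is a hypothetical value for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Method:
    name: str
    turnaround_days: int  # fielding plus analysis, as estimated by the team


def fits_window(method: Method, start: date, decision: date) -> bool:
    """True if findings would land on or before the decision date."""
    return start + timedelta(days=method.turnaround_days) <= decision


def viable(methods: list[Method], start: date, decision: date) -> list[str]:
    """Names of the methods whose findings arrive in time to inform the decision."""
    return [m.name for m in methods if fits_window(m, start, decision)]


start = date(2024, 7, 1)
decision = start + timedelta(weeks=2)
methods = [
    Method("agency study (best case)", 6 * 7),  # low end of the 6-12 week range
    Method("hypothetical rapid study", 10),     # illustrative turnaround
]
print(viable(methods, start, decision))  # ['hypothetical rapid study']
```

Even the agency best case misses a two-week window by four weeks, which is the tradeoff the framework is built around.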

How to operationalize voice beyond surveys: when to add AI-moderated interviews

Surveys are the default VoC channel for a reason: they scale without adding headcount, and they produce the quantitative signal that leadership expects on a dashboard. The problem is that a score tells you something has changed. It does not tell you what customers experienced that caused the change, or what you would need to do differently to move it back.

There are three situations where adding qualitative interviews to your VoC workflow pays for itself quickly.

When customer satisfaction scores drop and the cause is unclear

A five-point net promoter score decline is a fact. The story behind it only surfaces in conversation. Interviews turn a number into a diagnosis.

When themes recur across multiple channels but lack context

Customer support interactions and online reviews can surface a pattern. They rarely tell you the emotional weight behind it, or whether customers are frustrated enough to leave. Interviews provide the texture that makes a recurring theme actionable rather than merely observable.

When stakeholders distrust the findings

Quantitative dashboards feel abstract to non-research audiences. Verbatim quotes and highlight reels make VoC metrics credible enough to drive decisions across product, marketing, and operations, and ensure customers feel heard in the process.
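The first two situations can be expressed as automatic triggers; the third (stakeholder distrust) is a judgment call a dashboard cannot detect. This sketch assumes the team already knows the score change, whether existing data explains it, and which channels each theme appears in. The three-point drop threshold and two-channel minimum are illustrative assumptions.

```python
def interview_triggers(
    score_delta: float,
    drop_explained: bool,
    theme_channels: dict[str, set[str]],
    themes_with_interview_context: set[str],
    drop_threshold: float = -3.0,
    min_channels: int = 2,
) -> list[str]:
    """Return reasons to launch qualitative interviews (thresholds are illustrative)."""
    reasons = []
    # Situation 1: the score fell noticeably and nothing on hand explains why.
    if score_delta <= drop_threshold and not drop_explained:
        reasons.append(f"score moved {score_delta:+g} with no clear cause")
    # Situation 2: a theme shows up in several channels but has no interview context yet.
    for theme, channels in sorted(theme_channels.items()):
        if len(channels) >= min_channels and theme not in themes_with_interview_context:
            reasons.append(f"'{theme}' recurs in {len(channels)} channels without context")
    return reasons
```

An empty list means no automatic trigger fired; it does not rule out the third situation.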

The traditional barrier to adding interviews to a continuous feedback loop was operational: scheduling, moderation, and synthesis took weeks, which made recurring qualitative measurement impractical for most teams. AI-moderated interviews change the workflow by running conversations asynchronously and in parallel. Instead of booking 20 sessions over three weeks, teams can interview 20 or 200 participants simultaneously, trigger targeted feedback requests at key moments in the customer journey, and receive analyzed outputs within days.
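The difference between booking sessions sequentially and running them in parallel is easy to see with Python's `asyncio`. In this sketch, `run_interview` is a hypothetical stand-in for one asynchronous session (a short sleep simulates the participant completing it); it is not a real platform API.

```python
import asyncio
import time


async def run_interview(participant_id: str) -> dict:
    """Hypothetical stand-in for one asynchronous AI-moderated session."""
    await asyncio.sleep(0.01)  # simulates the participant completing the session
    return {"participant": participant_id, "status": "complete"}


async def field_study(participant_ids: list[str]) -> list[dict]:
    # Launch every session at once instead of booking them one after another.
    return await asyncio.gather(*(run_interview(p) for p in participant_ids))


start = time.perf_counter()
results = asyncio.run(field_study([f"p{i:03d}" for i in range(200)]))
elapsed = time.perf_counter() - start
print(len(results), f"sessions in {elapsed:.2f}s")  # close to one session's time, not 200
```

Because the sessions overlap, total wall-clock time tracks the slowest single session rather than the sum of all of them.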

For multi-market programs, 20+ language support with automated translation makes parallel fieldwork across regions feasible without localization cycles that add weeks and cost.

Interviews are not a replacement for surveys in a VoC program. Surveys collect VoC data at scale; interviews explain why the numbers moved. The teams that get the most out of both treat them as complementary signals within a continuous feedback loop: surveys set the alert, interviews provide the answer.

Enterprise governance for VoC metrics: definitions, sampling, and auditability

Enterprise VoC programs that span multiple teams, markets, and time periods share a common failure mode: key metrics that look comparable but are not. When NPS is calculated with different scale anchors in the US and Germany, or when CSAT question wording shifts between product lines, trend data becomes unreliable. The governance infrastructure that prevents this is not overhead. It is what makes VoC metrics worth tracking in the first place.
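One way to keep definitions from drifting between markets, as in the US and Germany example, is to check each market's setup against a single canonical definition before any data is pooled. The sketch below assumes a hypothetical `MetricDefinition` record; the question IDs and collection cadences are placeholder values.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MetricDefinition:
    metric: str
    question_id: str            # one canonical question, translated per market
    scale_min: int
    scale_max: int
    collection_every_days: int  # how often each segment is asked (placeholder values)


CANONICAL = {
    "NPS": MetricDefinition("NPS", "q_recommend", 0, 10, 90),
    "CSAT": MetricDefinition("CSAT", "q_satisfied", 1, 5, 30),
}


def check_market_config(market: str, configs: dict[str, MetricDefinition]) -> list[str]:
    """List every way a market's setup deviates from the canonical definitions."""
    problems = []
    for name, canonical in CANONICAL.items():
        local = configs.get(name)
        if local is None:
            problems.append(f"{market}: {name} not configured")
            continue
        # Compare field by field so the report says exactly what drifted.
        diffs = [f.name for f in fields(canonical)
                 if getattr(local, f.name) != getattr(canonical, f.name)]
        if diffs:
            problems.append(f"{market}: {name} differs in {', '.join(diffs)}")
    return problems


de = {"NPS": MetricDefinition("NPS", "q_recommend", 1, 10, 90)}
print(check_market_config("DE", de))
# ['DE: NPS differs in scale_min', 'DE: CSAT not configured']
```

A market that returns an empty list can be trended alongside the others; anything else should be fixed before its numbers reach a shared dashboard.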

Four requirements separate enterprise-grade VoC from ad hoc measurement:

Metric definitions

Net promoter score (NPS), customer satisfaction score (CSAT), and customer effort score (CES) must be calculated consistently across every team and market. Governance also requires teams to specify how often to collect feedback from each segment; frequency and consistency matter as much as question wording. Conveo's study templates enforce this consistency, ensuring researchers in London and Chicago are measuring the same construct in the same way.

Sampling rules

Who gets surveyed, how often, and at which customer touchpoints must be defined and held constant. Inconsistent sampling introduces selection bias that undermines metric credibility when results reach the C-suite.

Multilingual comparability

When programs span markets, translations must preserve semantic meaning, not literal phrasing. Conveo's support for 20+ languages with automated translation means cross-market VoC metrics remain valid without manual localization cycles.

Auditability

Stakeholders need to trace reported metrics back to source conversations. Conveo links every insight to the video clips and verbatim quotes that generated it, making findings inspectable, not just reportable.
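The sampling rules above ("who gets surveyed, how often, and at which customer touchpoints") can be held constant in code. This sketch uses hash-based sampling, so the same customer always gets the same decision for a given study, plus a contact cooldown and an approved-touchpoint list. The touchpoint names, 10% rate, and 90-day cooldown are hypothetical defaults.

```python
import hashlib
from datetime import date, timedelta


def in_sample(customer_id: str, study_key: str, rate: float) -> bool:
    """Deterministic sampling: a customer always gets the same decision for a given study."""
    digest = hashlib.sha256(f"{study_key}:{customer_id}".encode()).digest()
    # Keep 53 bits so the division is exact in a float: bucket is uniform in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return bucket < rate


def eligible(customer_id: str, touchpoint: str, last_contacted: dict[str, date],
             today: date, study_key: str, rate: float = 0.1,
             allowed_touchpoints: tuple[str, ...] = ("post_purchase", "support_closed"),
             cooldown_days: int = 90) -> bool:
    """Apply fixed sampling rules: approved touchpoints, a contact cooldown, then the sample."""
    if touchpoint not in allowed_touchpoints:
        return False
    last = last_contacted.get(customer_id)
    if last is not None and today - last < timedelta(days=cooldown_days):
        return False
    return in_sample(customer_id, study_key, rate)
```

Hashing rather than random draws makes the sample reproducible, so an auditor can rerun the rule and get the same respondent list.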

Conveo's SOC 2 certification, GDPR compliance, and EU data hosting remove the procurement blockers that keep most AI research platforms from clearing enterprise review, particularly when voice and video data are involved. An end-to-end platform covering study design, recruitment, interviewing, VoC analytics, and reporting eliminates the data-quality gaps that stitched-together point tools create at every handoff.

The benefit compounds over time. Conveo's searchable insight library ties findings together across studies, so recurring themes surface across quarters rather than disappearing into individual decks. Teams can track which themes persist, which resolve, and which are driving business outcomes, building the evidence base for customer strategy that allows VoC programs to earn organizational trust.
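Tracking which themes persist, resolve, or emerge is a set comparison across quarters. This sketch assumes a hypothetical history keyed by quarter labels that sort chronologically (such as "2024-Q1"). "Resolved" here only means a theme stopped surfacing in the latest quarter, not that its cause was verified as fixed.

```python
def theme_status(history: dict[str, set[str]]) -> dict[str, str]:
    """Classify themes as persisting, new, or resolved relative to the latest quarter."""
    quarters = sorted(history)  # assumes labels like "2024-Q1" sort chronologically
    if len(quarters) < 2:
        raise ValueError("need at least two quarters")
    latest = history[quarters[-1]]
    seen_before = set().union(*(history[q] for q in quarters[:-1]))
    status = {}
    for theme in seen_before | latest:
        if theme in latest and theme in seen_before:
            status[theme] = "persisting"
        elif theme in latest:
            status[theme] = "new"
        else:
            status[theme] = "resolved"  # stopped surfacing; not proof the cause is fixed
    return status


history = {
    "2024-Q1": {"checkout friction", "pricing resistance"},
    "2024-Q2": {"checkout friction"},
    "2024-Q3": {"checkout friction", "onboarding confusion"},
}
print(sorted(theme_status(history).items()))
```

Persisting themes are the ones to connect to business outcomes first, since they have already outlived at least one quarter of attention.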

Competitive differentiation: How Conveo solves the VoC measurement credibility gap

Most VoC measurement programs are caught between two unsatisfactory options. Traditional agency research delivers the methodological rigor stakeholders trust, but 6–12 week turnarounds make continuous metric tracking operationally impossible. Transcription and analysis platforms speed up VoC analysis, but they leave interviewing and recruitment as manual steps, which caps the cadence any small insights team can realistically sustain.

Conveo resolves that tradeoff across three dimensions that matter for enterprise VoC programs.

End-to-end workflow, no metric blind spots

Study design, panel recruitment, AI-moderated interviewing, automated coding, and stakeholder-ready reporting run inside one platform. When every step lives in the same environment, the VoC metric tracked in week one is measured the same way in week twelve.


Real voice and video conversations, not synthetic proxies

Conveo tracks recurring customer feedback patterns across AI-moderated video interviews and links every shift in VoC metrics directly to sourced evidence: specific participant responses, verbatim quotes, and video clips. That traceability is what turns a customer satisfaction score movement into a decision a leadership team can act on with confidence.

Enterprise-grade compliance that clears procurement

SOC 2 certification, GDPR compliance, and EU regional data hosting address the governance requirements that quietly block most AI research platforms from enterprise adoption.

The practical result: AI-led transcription, translation, coding, and thematic synthesis compress time-to-metrics from weeks to hours, while every finding remains traceable to its source. Over 400 enterprise teams, including Google, FOX, and Bosch, rely on Conveo for this reason. Teams report up to 80% cost reduction compared to traditional research and up to 75% savings versus agency-led fieldwork. Over time, those results compound into stronger customer engagement, higher customer lifetime value, and sustainable business growth.

Frequently Asked Questions

Which VoC metrics actually show whether our program is influencing product, CX, or marketing decisions, not just collecting feedback?

What is a practical KPI framework for a VoC program when we have multiple channels (surveys, interviews, support tickets, reviews) and mixed data quality?

How do we measure VoC insight quality, not just volume?

What is the right cadence for VoC measurement: quarterly, monthly, or continuous?

How do we get stakeholders to actually act on VoC findings?

What is a realistic timeline for launching a VoC metrics program from scratch?