TL;DR

The AI qualitative research market now spans four distinct categories: generic large language models used for ad hoc analysis, standalone AI interview platforms, analysis-only point solutions, and end-to-end qualitative intelligence platforms. Choosing between them is a credibility decision, not a feature comparison. Enterprise procurement teams ask three questions before anything else: Can findings be traced back to real participant conversations? Are participants verified humans, not synthetic? Does the platform meet SOC 2, GDPR, and data residency requirements? This guide maps the landscape against those criteria and identifies the platforms built to deliver enterprise-grade qualitative rigor at scale.

Something has shifted in AI qualitative research, and the teams noticing it first are the ones who spent years defending qual to skeptical stakeholders. The qualitative market research that once required six to twelve weeks, a recruited panel, and an agency retainer can now deliver real voice and video conversations at scale, with multimodal analysis and stakeholder-ready outputs, in days rather than months. The operational constraints that made qualitative research a periodic luxury are no longer fixed.

That shift matters. But as those constraints fall away, the bottleneck moves. The question is no longer whether you can get findings fast. It is whether those findings hold up when a CMI director, a CFO, or a product lead needs them for decision-making. AI qualitative research that produces black-box summaries (findings without sources, themes without transcripts, outputs that experienced qualitative researchers cannot verify) fails at exactly that moment.

This guide covers what AI qualitative research is, how it works, where qualitative methods earn enterprise-grade credibility, and how to evaluate platforms against the criteria that matter.

What is AI qualitative research?

At its core, using AI in qualitative research means applying machine learning and large language models to conduct, analyze, or synthesize qualitative data: in-depth interviews, focus groups, open-ended survey responses, and video recordings. The category label spans four fundamentally different approaches.

Generic large language models used for ad hoc analysis

General-purpose artificial intelligence models can quickly summarize transcripts and suggest themes from textual data. But beyond basic prompting, there is a steep learning curve in adapting them to real qualitative research workflows: outputs lack participant grounding, source tracing, and the audit trails stakeholders require to act on findings.

Qualitative data analysis software with AI-assisted coding

Platforms like NVivo, Dovetail, and Notably layer thematic coding and pattern recognition onto existing research. The AI accelerates qualitative analysis; it does not conduct the study. The research itself still happens elsewhere, through human-moderated interviews or uploaded transcripts.

AI interview platforms

These platforms conduct AI interviews and return transcripts and recordings to the team for analysis. This solves collection bottlenecks but leaves analysis, synthesis, and reporting as separate manual steps requiring other tools.

End-to-end qualitative intelligence platforms

The complete category: study design, AI-led interviewing, automated multimodal analysis, and stakeholder-ready reporting on a single platform, with mandatory source linking so every finding from qualitative data analysis traces back to the original participant response. Conveo is a video-first qualitative intelligence platform built for enterprise teams that need the entire qual cycle covered, not just one step of it.

None of these categories replaces the human input that makes qualitative research credible. Study design, hypothesis framing, and contextual interpretation still require researcher expertise. The role of AI is to remove the manual steps that slow everything down, not to substitute for the thinking that makes findings worth acting on.

The credibility test: Where AI qualitative research stands or falls

The moment a senior stakeholder reads an AI-generated research summary and asks, "Where did this come from?", most AI qualitative platforms hit a wall. The output exists. The source does not. Themes are presented without quotes, quotes appear without context, and there is no path back to the original conversation. For a CMI director defending a positioning decision to the C-suite, that gap is a credibility failure.

The traceability standard that separates research-grade platforms from synthesis-only platforms is straightforward: every insight maps back to a theme, which maps back to a quote from interview transcripts, which maps back to a timestamped video clip. That chain of evidence allows a stakeholder to click through to the exact moment a participant said something, in their own voice, with the hesitation or emphasis that no transcript captures. Without it, AI qualitative research produces confident-sounding outputs that experienced qualitative researchers cannot stand behind, and the research data that should drive decisions gets ignored.
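The evidence chain described above (insight, theme, quote, timestamped clip) can be sketched as a small data model. This is an illustrative sketch only, not Conveo's actual schema: the class names, field names, and the `untraceable` helper are all assumptions introduced here to show what "every insight maps back to a clip" means in practice.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Clip:
    session_id: str   # hypothetical identifier for one interview recording
    start_s: float    # clip start, in seconds from the start of the session
    end_s: float      # clip end, in seconds


@dataclass(frozen=True)
class Quote:
    text: str               # verbatim participant words from the transcript
    clip: Optional[Clip]    # None means no video evidence sits behind the quote


@dataclass
class Theme:
    name: str
    quotes: list[Quote] = field(default_factory=list)


@dataclass
class Insight:
    statement: str
    themes: list[Theme] = field(default_factory=list)


def untraceable(insight: Insight) -> list[str]:
    """Describe every broken link between an insight and its video evidence."""
    problems = []
    if not insight.themes:
        problems.append("insight has no supporting theme")
    for theme in insight.themes:
        if not theme.quotes:
            problems.append(f"theme '{theme.name}' has no quotes")
        for quote in theme.quotes:
            if quote.clip is None:
                problems.append(f"quote '{quote.text}' has no video clip")
    return problems
```

An empty list from `untraceable` means a stakeholder could, in principle, click from the insight down to a specific moment in a recording. Any entry in the list is the kind of gap that prompts the "Where did this come from?" question.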

Participant authenticity compounds the problem. Some platforms now use synthetic respondents as a substitute for real recruitment. Real qualitative insight requires real human participants, fraud-filtered recruitment, and conversations that surface detailed insights grounded in actual behavior. The evidence supports this: participants in AI-moderated video interviews provide responses three to four times longer than those in equivalent text surveys, and 93% rate the experience 4 out of 5 or higher. That is a data quality metric, not a satisfaction score. Richer responses from real participants produce qualitative analysis that holds up under scrutiny.

See what a stakeholder-ready Conveo report looks like: themes, video clips, and sourced quotes, ready to share.

Conveo is a video-first platform built around source-linking on every insight, with every AI-generated finding traceable back to the original video context. The threshold question for any AI qualitative research platform is whether stakeholders can verify what they are being asked to act on.

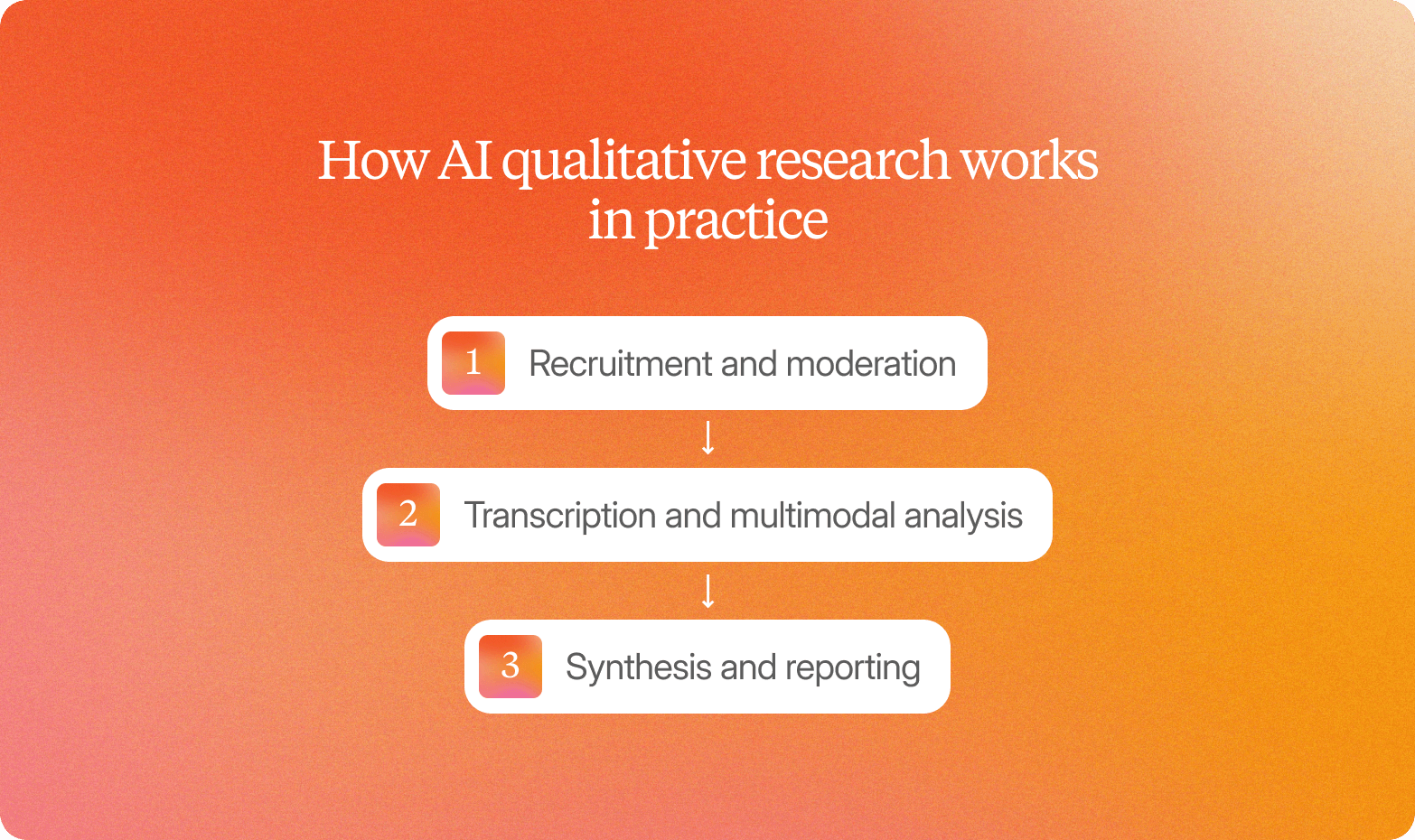

3 stages of AI qualitative research

The qualitative methods that once imposed sequential constraints (recruit, then moderate, then transcribe, then analyze) now run in parallel. That shift is where teams find the most meaningful opportunity to improve qualitative research with AI: not by cutting corners, but by removing the operational overhead that forced researchers to work in small, slow batches.

Stage 1: Recruitment and moderation

Participants are sourced from vetted global panels spanning 50+ languages, with fraud filtering and incentive management built into the platform. Each participant takes part in an AI interview that adapts in real time, probing when a participant hesitates at a price point, following up when a competitor is mentioned unprompted. Because sessions run asynchronously, genuine customer feedback from 10 or 1,000 participants arrives in parallel rather than being gated by a moderator's calendar.
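To make the scheduling point concrete, here is a minimal simulation of asynchronous sessions, assuming a hypothetical `run_interview` coroutine that stands in for one AI-moderated session. Nothing here reflects a real platform API, and the 30-minute session length is an assumed figure. The sketch only illustrates why parallel sessions are not gated by one moderator's calendar.

```python
import asyncio


async def run_interview(participant_id: str, minutes: float) -> dict:
    """Stand-in for one asynchronous AI-moderated session (simulated)."""
    # Sleep a heavily scaled-down amount so the sketch finishes instantly.
    await asyncio.sleep(minutes / 10_000)
    return {"participant": participant_id, "minutes": minutes}


async def run_study(participant_ids: list[str], minutes: float = 30) -> list[dict]:
    # gather() starts every session at once; no session waits on another.
    sessions = (run_interview(pid, minutes) for pid in participant_ids)
    return await asyncio.gather(*sessions)


def sequential_minutes(n: int, minutes: float = 30) -> float:
    # One moderator running the same sessions back to back.
    return n * minutes


if __name__ == "__main__":
    results = asyncio.run(run_study([f"p{i}" for i in range(1000)]))
    print(len(results), "sessions completed in parallel")
    print(sequential_minutes(1000), "moderator-minutes if run one at a time")
```

In the parallel case, wall-clock time is roughly the length of the longest single session. In the sequential case, it grows linearly with the number of participants.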

Stage 2: Transcription and multimodal analysis

As raw data arrives in the form of video recordings, Conveo transcribes and translates automatically across 50+ languages, generating interview transcripts that feed directly into analysis. Multimodal analysis works across speech, tone, facial cues, and on-screen objects, including sentiment analysis of vocal tone, to surface signals that transcripts alone miss. These are indicators, not conclusions; human researchers interpret them in context.

Stage 3: Synthesis and reporting

Automated thematic coding groups findings into clusters, each linked back to timestamped video, verbatim quotes, and session-level evidence. Export-ready reports ship in days rather than weeks. The traditional agency model stretches this same cycle across six to twelve weeks of scheduling, sequential moderation, and manual coding. When those steps run in parallel, analyzing qualitative data becomes faster without sacrificing the depth that makes findings credible.

Enterprise readiness: Governance, compliance, and security

For qualitative AI market research platforms, the procurement question is not "what can it do?" It is "Can it be approved?" When an enterprise research function moves from periodic agency engagements to continuous qualitative work, the platform becomes organizational infrastructure. The compliance checklist is not optional.

7 things enterprise procurement requires:

SOC 2 certification: Independently audited security controls for data handling and confidentiality

GDPR compliance: Lawful basis for EU participant data, documented processing agreements, and deletion request support

Regional data hosting: EU residency for European buyers; US residency for US-based teams

SSO and role-based access controls: Enterprise authentication that integrates with existing identity providers

Audit trails: Documented access logs, retrievable for governance and compliance reviews

Configurable retention and deletion policies: Data lifecycle control on request or on schedule

Consent workflows and data privacy protections: Jurisdiction-aware consent built into the participant experience
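The seven requirements above work as a gate rather than a score: one missing item blocks approval. A minimal sketch of that logic follows, assuming a vendor's answers are collected as a simple dictionary. The key names and the `compliance_gaps` helper are hypothetical, chosen here for illustration.

```python
# The seven procurement requirements listed above, as hypothetical keys.
REQUIRED = (
    "soc2_certified",
    "gdpr_compliant",
    "regional_hosting",
    "sso_rbac",
    "audit_trails",
    "retention_controls",
    "consent_workflows",
)


def compliance_gaps(vendor: dict[str, bool]) -> list[str]:
    """Return every requirement the vendor has not documented as met.

    A requirement that is absent from the answers counts as a gap:
    an undocumented control is treated the same as a missing one.
    """
    return [item for item in REQUIRED if not vendor.get(item, False)]


def can_be_approved(vendor: dict[str, bool]) -> bool:
    # Approval is all-or-nothing: any single gap blocks the vendor.
    return not compliance_gaps(vendor)
```

Treating unanswered items as gaps mirrors the advice to require documented certifications before a vendor reaches the shortlist.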

Enterprise teams evaluating AI qualitative research are deciding whether to bring their qualitative analysis in-house, reducing dependency on six-figure agency engagements. Procurement will not approve that decision on a platform with a compliance gap, and the data analysis infrastructure chosen will touch participant data across markets and functions. As of early 2026, few other platforms in the category have confirmed SOC 2 certification alongside EU data hosting. Conveo is SOC 2-certified, GDPR-compliant, and offers EU regional data hosting, built in from day one.

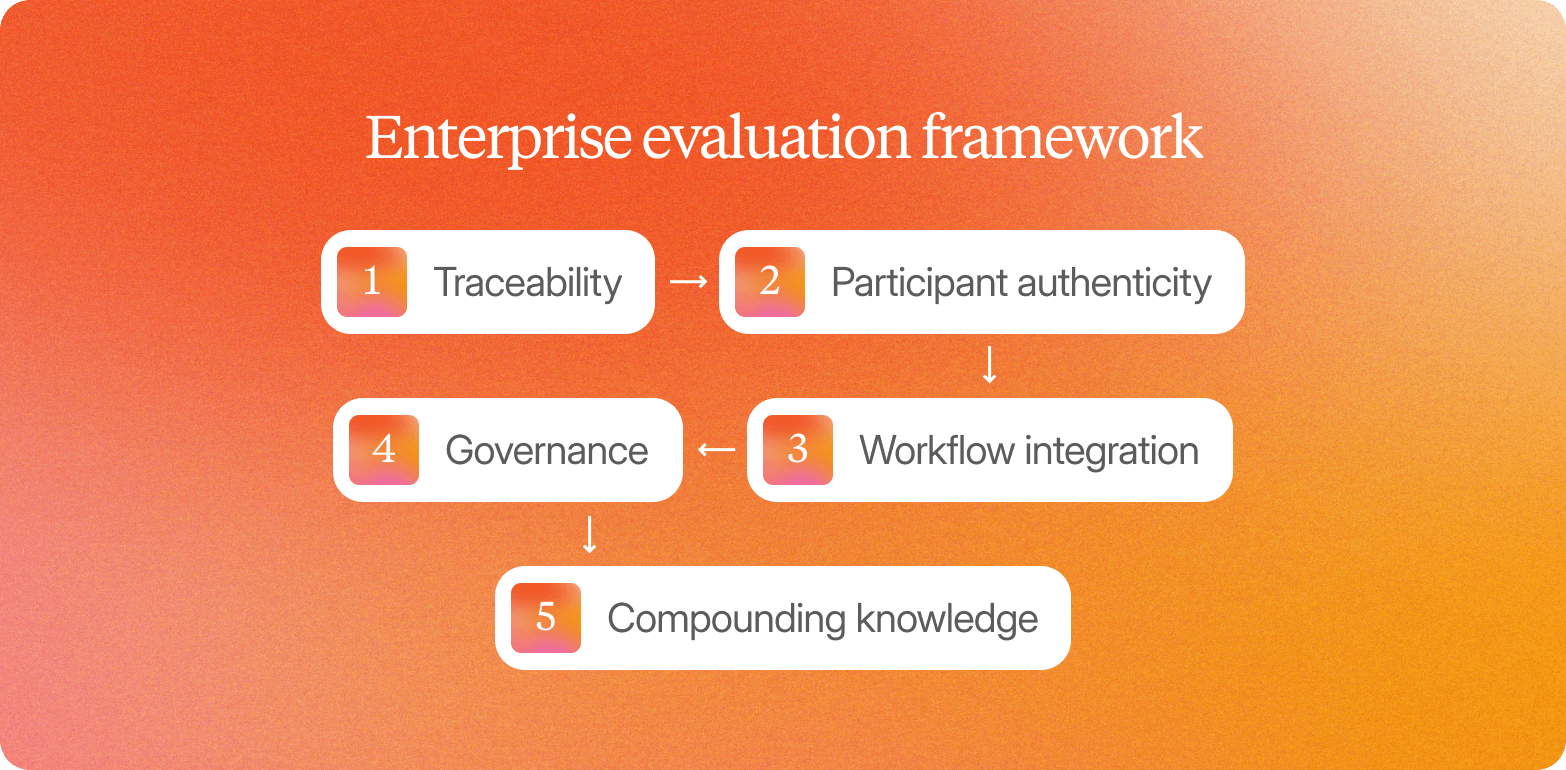

5-step evaluation framework

When teams ask who offers the best AI qualitative research capability, experienced qualitative researchers know the answer is not about impressive demos. The more useful question is which platform holds up across all five criteria that determine whether outputs are trusted and acted on.

1. Traceability

Require timestamped video clips and verbatim transcripts linked to every insight from the data analysis process. A stakeholder who cannot verify a finding within seconds will challenge or ignore it. Black-box summaries fail at scale.

2. Participant authenticity

Synthetic respondents produce outputs faster, but enterprise stakeholders rarely trust them once they find out. Real video responses from verified participants are what evidence-based procurement requires. Conveo makes authenticity auditable, not assumed.

3. Workflow integration

A stack of disconnected tools (one for recruitment, another for transcription, a third for analysis, and still others for reporting) creates friction and data loss. Require an AI platform that handles the full cycle. When everything runs on one platform, handoffs disappear, and institutional knowledge accumulates.

4. Governance

SOC 2 certification, GDPR compliance, data privacy controls, regional data hosting, SSO, and audit trails are not optional. A compliance gap has implications beyond a failed procurement: it affects every study run on the platform. Require documented certifications before adding any vendor to the shortlist.

5. Compounding knowledge

Research that lives in a single deck delivers no lasting value. Require a searchable insight library that connects qualitative and quantitative data across studies and delivers sourced strategic insights over time. When a product manager or CMI director asks a question six months after a study has run, the answer should surface automatically.
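Because a platform has to hold up across all five criteria, a weakest-link rule fits better than an average, which would let a strong demo mask a governance gap. The sketch below assumes each criterion is scored 1 to 5 by the evaluating team. The scale, the `floor` threshold, and the function name are illustrative assumptions, not part of any published rubric.

```python
CRITERIA = (
    "traceability",
    "participant_authenticity",
    "workflow_integration",
    "governance",
    "compounding_knowledge",
)


def evaluate(scores: dict[str, int], floor: int = 3) -> dict:
    """Judge a platform by its weakest criterion (assumed 1-5 scale)."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    # min() over CRITERIA returns the first criterion with the lowest score.
    weakest = min(CRITERIA, key=lambda c: scores[c])
    return {
        "weakest": weakest,
        "score": scores[weakest],
        "shortlist": scores[weakest] >= floor,
    }
```

A platform scoring 5 on four criteria and 2 on governance would be kept off the shortlist under this rule, which matches the point that a compliance gap affects every study run on the platform.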

Conveo is built around all five criteria. For a side-by-side platform comparison, see: 15 Best AI Qualitative Research Tools for 2025

4 use cases where enterprise teams apply AI qualitative research

Concept and ad testing

With AI qualitative market research on Conveo, a brand team launches AI-led video interviews and receives thematic qualitative analysis with video clips in days, not weeks. That structured customer feedback ships directly to stakeholders, grounding creative decisions in verifiable consumer reactions inside the campaign window rather than after it closes.

Continuous discovery

Product and UX research teams know they should talk to users more often; operational overhead is what keeps them from doing so. When AI interviews run asynchronously, UX researchers maintain a weekly cadence without coordination overhead. That same rhythm supports usability testing and concept validation. Every study feeds the insight library, so findings compound across sprints using established qualitative methods rather than disappearing into one-off decks.

Multi-market research

Running research across 10 markets in eight languages used to require a large agency network or a painful series of sequential studies. Conveo's AI interviewer conducts sessions in 50+ languages, with built-in AI tools for transcription and translation. Global teams get detailed insights across markets without the cost and coordination penalty multi-market qual typically demands.

CMI modernization

Teams that move their qualitative program onto Conveo shift from periodic agency projects to a continuous, in-house capability. Agency spend drops, study throughput rises, and strategic insights accumulate in a searchable library the whole organization can access. Teams report cutting research timelines from months to days, with spending up to 70% lower than on agency-delivered qualitative programs.

“Within days, we had insights that would've taken a traditional agency a month.”

Head of Customer Insights, JDE Peet’s

How Conveo delivers AI qualitative research at enterprise scale

Every criterion this article has worked through (traceability, participant authenticity, workflow integration, governance, and compounding knowledge) points to the same requirement: a platform built around all five, not just the ones that look good in a demo.

Each insight Conveo surfaces links directly back to the original video source. Stakeholders watch the moment, hear the hesitation, and see the context that a transcript strips out. That source-linking is the architecture: it is what makes quality assurance possible at scale, and what separates Conveo from large language models or point-solution AI tools that summarize without tracing.

Every step of the qualitative work, from study setup through stakeholder reporting, runs on one platform. No handoffs. No separate vendors for transcription, analysis, or storage. Conveo is SOC 2-certified, GDPR-compliant, and supports EU data hosting and SSO. Over time, every study feeds into a searchable insight library that compounds across projects, enabling enterprise teams to surface evidence from any study instantly.

Over 400 enterprise teams, including Google, Reddit, FOX, and Bosch, run qualitative research at scale on Conveo.

Frequently Asked Questions

What is AI qualitative research?

How does AI improve qualitative research?

What are the risks of using AI in qualitative research?

Can AI replace human researchers?

Why is AI the future of qualitative research?

How do I evaluate an AI qualitative research platform for enterprise use?