TL;DR

Generative user research tools use AI to conduct interviews, analyze qualitative data, and scale research workflows across teams.

The biggest distinction between platforms is whether they support interview execution, analysis workflows, or the full research lifecycle end-to-end.

This guide compares the best AI tools for user research in 2026 based on methodology support, automation depth, participant recruitment, and insight reuse.

For research teams running continuous discovery or scaled qualitative programs, Conveo provides the strongest end-to-end workflow coverage.

Stakeholder questions rarely arrive one at a time anymore. They arrive all at once, across product, CX, and strategy teams, each expecting evidence fast.

Most legacy user research tools were built before modern AI reshaped how research gets done. Today, research is faster, more continuous, and increasingly parallel across teams. Generative user research tools support this shift by automating scheduling, moderation, transcription, and early synthesis so you can focus on interpretation, stakeholder alignment, and decision support.

This guide compares nine leading AI tools for user research using consistent evaluation criteria. It helps you shortlist the right platform for your research workflows before you start vendor conversations.

Generative User Research Tools at a Glance

Tool | Primary use case | AI moderation type | Automated synthesis | Recruitment included | Best for | Pricing model |
--- | --- | --- | --- | --- | --- | --- |
Conveo | End-to-end qualitative user research | Async AI video interviewer with adaptive probing, 50+ languages | Full - themes, sentiment, clips, insight library | Yes - vetted global panel, fraud filtering, incentive management | Enterprise and mid-market research teams running scaled qualitative programs | Custom/enterprise pricing |
Outset | AI-moderated product and UX research | Async voice/video AI interviewer ("Leo") with structured probing | Automated themes and quote extraction | No | Product and UX teams running concept and usability testing | Subscription + usage |
Listen Labs | High-speed consumer and ad research | Async AI chat interviewer with audio/video support | Full report delivery within hours | Yes | B2C marketing and tech product teams needing fast turnaround | Custom/enterprise pricing |
Marvin | Qualitative analysis and repository tool | Voice-to-voice AI with custom persona | Transcript + immediate AI analysis | No | Teams wanting conversational, human-like interview depth | Early-stage / contact for pricing |
GetWhy | Video think-aloud consumer research | Agentic video interview, think-aloud format | Insights delivered in ~4 hours | Yes | CPG, retail, and consumer brand teams | Custom/contact for pricing |
Maze | Product and UX research (moderated + unmoderated) | AI moderator with live question adaptation | Themes, summaries, highlights | Yes - participant panel of 5M+ | Product teams running continuous discovery | Freemium + paid plans |
Dovetail | Qualitative data analysis and insight repository (analysis layer only) | None - no AI interviewer | AI tagging, coding, and summaries | No | Teams with existing recordings needing AI-assisted synthesis | Subscription tiers |
Ballpark | Lightweight generative research and rapid feedback studies | Async AI interview flows with structured prompts | AI summaries, themes, quick synthesis | Yes - integrated participant panel | Startups and product teams running fast-turnaround exploratory research | Free trial + subscription tiers |
Voxpopme | High-volume video consumer feedback | No autonomous AI moderator - video survey format | AI sentiment, themes, highlight reels | Panel access available | Consumer brands running large-scale video feedback programs | Custom/enterprise |

Platforms were evaluated based on publicly available product documentation, independent user reviews, and hands-on research. Pricing reflects publicly available information as of Q1 2026.

This snapshot shows how the platforms differ at a glance. Let's take a closer look at where each tool fits in real research workflows.

The 9 best generative user research tools, reviewed

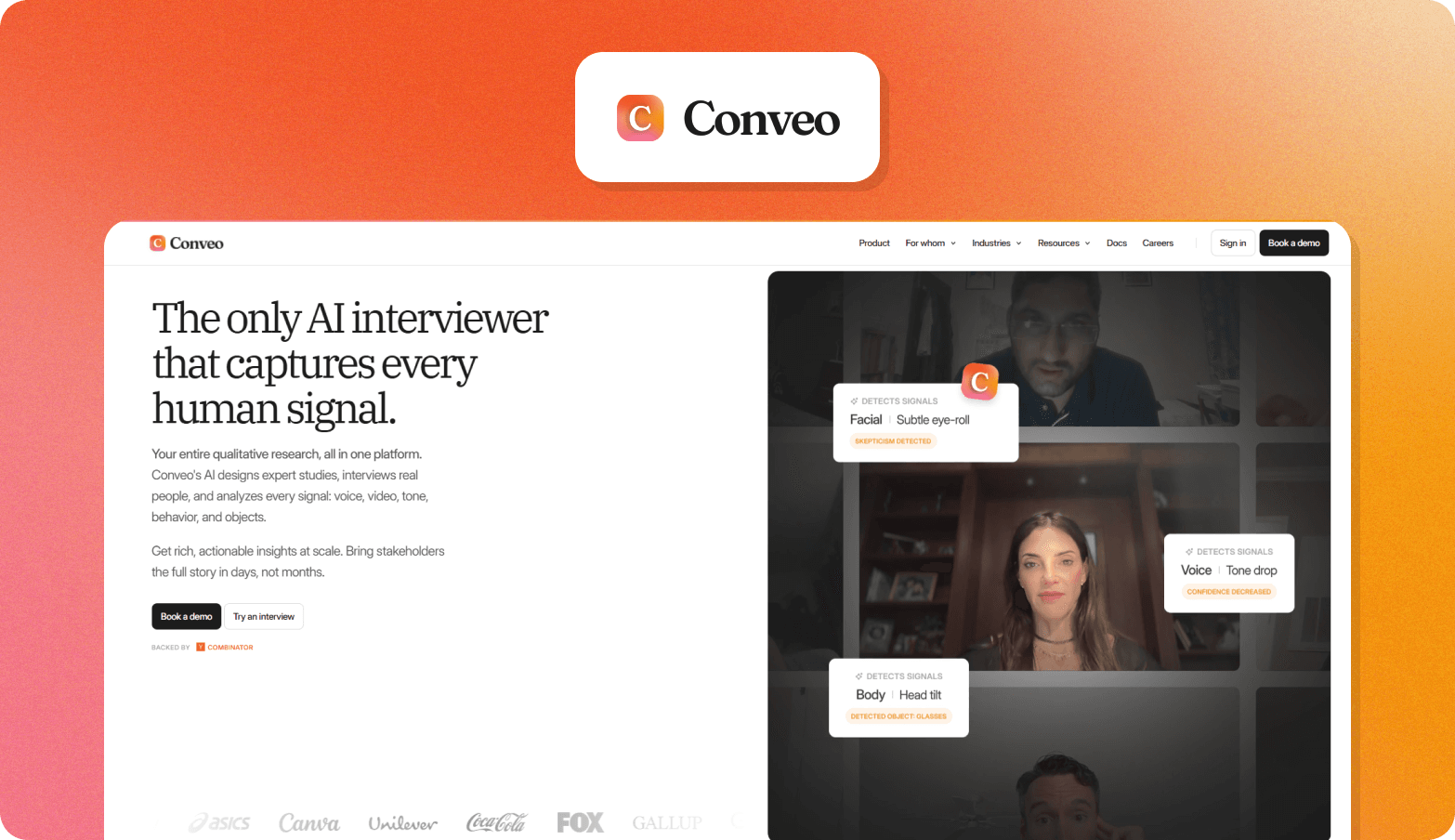

1. Conveo

Conveo is a video-first AI research platform built for enterprise and mid-market teams that need to run the entire qualitative research workflow, from study design and participant recruitment through AI-moderated interviews to automated synthesis and a compounding insight library.

What it does

Conveo supports end-to-end user research from study setup to long-term insight reuse in a single user research platform. You can launch studies from scratch or templates, define participant recruitment criteria, and run asynchronous video user interviews moderated by an AI interviewer that adapts follow-up questions across 50+ languages.

Multimodal automated analysis combines speech, tone, facial cues, and on-screen context to surface key themes, sentiment patterns, and highlight clips.

All research findings flow into a searchable research repository so research teams can reuse qualitative data and identify patterns across studies instead of starting from zero each time.

Best for

Enterprise and mid-market insights, UX research, and product teams running 10+ studies per year that need to scale research workflows without adding headcount or relying on agencies. Conveo lets these teams run parallel user interviews, move faster from research sessions to stakeholder-ready research findings, and build a reusable insight library instead of repeating the same studies.

Conveo is particularly strong for CX insights teams running continuous discovery, and for CMI insights teams building long-term customer understanding across markets, where real consumer behavior needs to be surfaced consistently across regions, segments, and time.

Key features

Asynchronous AI-moderated video user interviews with adaptive probing in 50+ languages

Built-in participant recruitment with vetted global panel, fraud filtering, and incentive management

Multimodal analysis using artificial intelligence across speech, tone, and visual context

Cross-study insight library with stakeholder access and contradiction detection

Enterprise security, including SSO, encryption at rest, and regional hosting options

Trusted by Google, Unilever, and Visa

These capabilities make Conveo one of the leading AI tools for user research moderation and among the best AI user research tools in 2026 for teams running continuous qualitative programs.

Limitations to know

Not designed for lightweight studies or teams focused only on quantitative research methods. Enterprise pricing can also be a barrier for smaller teams without defined research budgets.

Pricing

Custom enterprise pricing (pilot studies available)

See how Conveo supports end-to-end qualitative research programs. Book a walkthrough.

2. Outset

What it does

Outset is an AI-moderated research platform for asynchronous voice and video interviews, with support for structured probing and automated synthesis across discovery and evaluative studies.

Best for

Product teams and UX research teams running frequent concept testing, discovery, and usability testing without scheduling live sessions. Larger programs run by CX insights teams will usually need a stronger long-term repository layer.

Key features

Async voice and video AI interviewer with adaptive follow-up questions

Automated themes and synthesis across interviews

Supports research across 40+ languages

Limitations to know

Outset is stronger on interview execution than on compounding cross-study knowledge management. Pricing is also custom, so cost is harder to benchmark early.

Pricing

Custom pricing. Outset says plans are tailored to team, research, and support needs.

3. Listen Labs

What it does

Listen Labs is an end-to-end research platform built around AI-moderated interviews, with use cases that include brand tracking, usability testing, segmentation, concept testing, and journey research.

Best for

B2C product, growth, and marketing teams that need fast-turnaround research findings and built-in participant recruitment.

Key features

AI-moderated interviews with audio and video support

Built-in panel sourcing

Research across 100+ languages

Limitations to know

Listen Labs is optimized for speed, so teams that need a deeper research repository or broader program memory may need additional tooling alongside it.

Pricing

Custom/enterprise pricing.

4. Marvin

What it does

Marvin offers an AI-moderated interviewer for large-scale asynchronous interviews and pairs that with transcription, analysis, and a research repository.

Best for

Teams that want conversational AI interviews but also need an analysis and repository layer in the same environment.

Key features

AI-moderated interviewer with researcher-controlled guides

Instant transcription and analysis support

40+ language support

Limitations to know

Unlike panel-backed platforms, Marvin does not include native participant recruitment. Its pricing for broader deployments is also less transparent, which can make early shortlisting harder.

Pricing

Free plan available for repository use. Paid plans and fuller access vary by tier; contact sales for broader deployment.

5. GetWhy

What it does

GetWhy is an AI qualitative research platform focused on fast video-based consumer insight for high-stakes brand, campaign, and innovation decisions.

Best for

CPG, retail, and consumer brand teams running concept testing, creative testing, and rapid strategic validation.

Key features

Video-based AI research workflows

Fast synthesis, often positioned around a 48-hour turnaround

Option to recruit your own participants or source through GetWhy

Limitations to know

GetWhy is oriented more toward brand and consumer research than toward deep UX research workflows. Pricing is not fully public at the enterprise level.

Pricing

Starter, Basic, and Custom plans are listed through G2, with higher-end pricing handled through sales.

6. Maze

What it does

Maze is a product research platform for concept validation, usability testing, copy testing, and broader continuous discovery, with an AI moderator available as an Enterprise add-on.

Best for

Product teams running frequent usability testing and lightweight discovery tied closely to release cycles.

Key features

Prototype and usability testing workflows

Access to a participant panel of over 5 million

AI moderator available on Enterprise plans

Limitations to know

Maze is stronger for product testing than for end-to-end qualitative interviewing. Its AI moderator is sold as an Enterprise add-on, so it is not available on lower tiers.

Pricing

Freemium plus paid plans. AI moderator is an Enterprise add-on.

7. Dovetail

What it does

Dovetail is primarily an analysis and research repository platform, not an AI interview moderator. It helps teams centralize research data, analyze transcripts and feedback, and create searchable reports, dashboards, and summaries across existing studies.

Best for

Teams with existing interview recordings, notes, and feedback that need stronger synthesis and centralized research memory. It is often evaluated by CMI insights teams that manage large research repositories across functions or markets.

Key features

AI summaries and analysis inside projects

Searchable repository for customer feedback and research data

Dashboards and reports for stakeholder sharing

Limitations to know

Dovetail does not conduct interviews or manage participant recruitment. Teams usually need separate moderation-first research tools upstream.

Pricing

Free tier available. Professional starts at $15 per user per month, and Enterprise is custom.

8. Ballpark

What it does

Ballpark is an AI research platform for product, brand, and consumer research, enabling fast studies with large-scale participant access and short turnaround times.

Best for

Startups and product teams that want quick, lightweight research sessions without building a complex enterprise workflow.

Key features

AI-assisted research workflows

Access to millions of participants

14-day free trial with unlimited users and responses

Limitations to know

Ballpark is less clearly positioned for large-scale enterprise research governance than some heavier platforms. Detailed enterprise pricing is handled through sales.

Pricing

Free 14-day trial, then enterprise pricing via sales.

9. Voxpopme

What it does

Voxpopme is a qualitative research platform built around video surveys, live interviews, user research, and AI insights for large-scale customer feedback programs.

Best for

Consumer brands and larger research teams that want high-volume video feedback, panel access, and AI analysis in one platform.

Key features

Video surveys and live interviews

AI sentiment and theme analysis

Global panel recruitment or use of your own users

Limitations to know

Voxpopme is not positioned as a fully autonomous AI interviewer in the same way as moderation-first platforms. It is stronger for scaled video feedback than adaptive interview depth.

Pricing

Custom pricing with unlimited plans based on admin seats.

The right choice depends less on features and more on where you need support in your research process.

Here’s how to evaluate that fit.

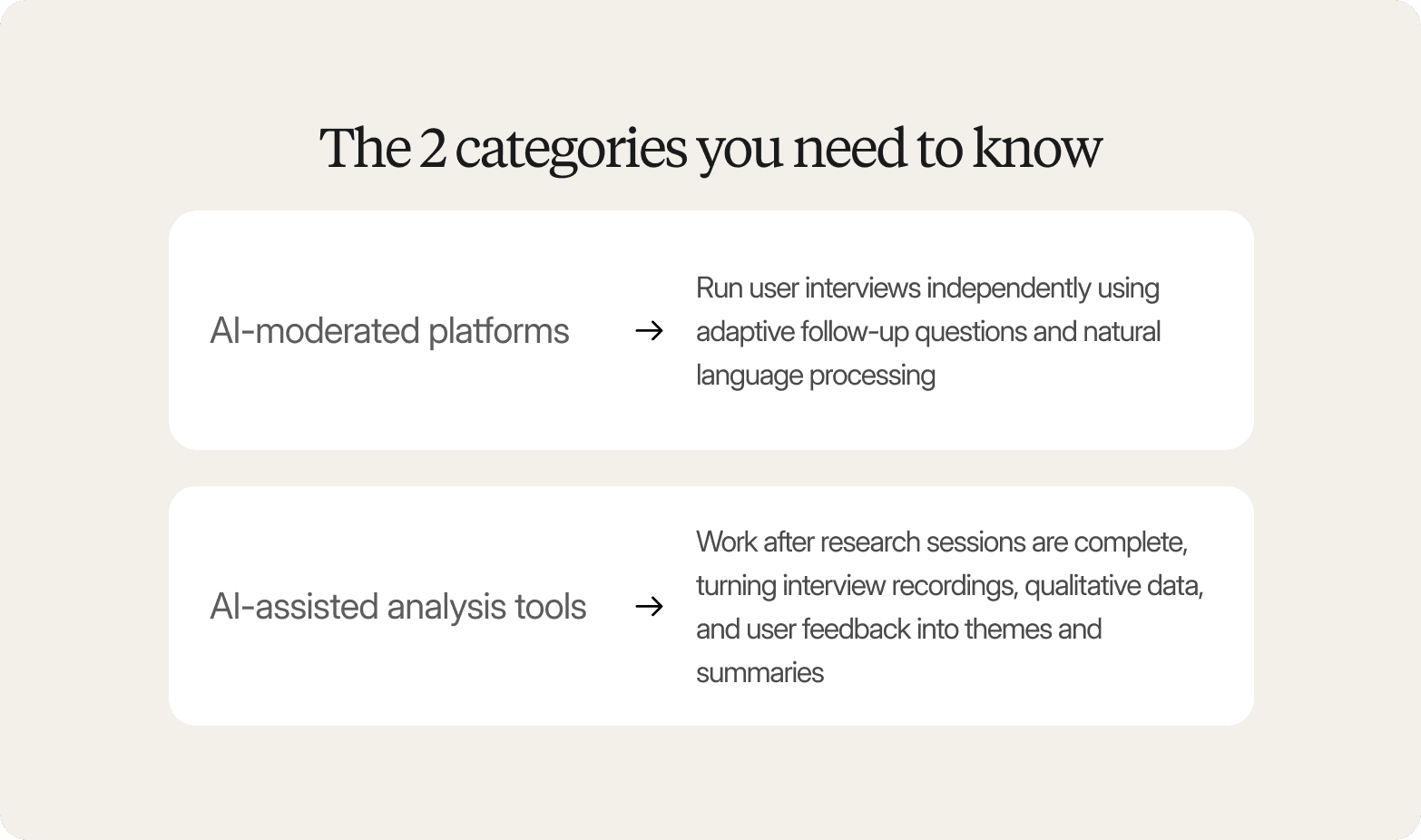

What makes a generative user research tool different: The 2 categories you need to know

Most teams evaluating AI tools for user research assume every platform automates the same parts of the research process.

Generative user research platforms fall into two distinct categories: tools that conduct interviews and tools that analyze them.

Understanding this difference helps research teams choose the right system for their research workflows and avoid mismatched expectations.

AI-moderated platforms run user interviews independently using adaptive follow-up questions and natural language processing.

AI-assisted analysis tools work after research sessions are complete, turning interview recordings, qualitative data, and user feedback into themes and summaries.

Many modern user research AI tools combine both layers, but one usually defines the core workflow they support.

AI-moderated interviews vs. AI-assisted analysis - why the distinction matters

Dimension | AI-moderated interview tools | AI-assisted analysis tools |
--- | --- | --- |
What the AI does | Interview execution, probing, follow-up | Transcription, coding, thematic synthesis |
Input | Research guide + participant profile | Existing recordings or transcripts |
Output | Transcripts, themes, clips from AI sessions | Themes, summaries from human sessions |
Main benefit | Scale interviews without moderators | Accelerate analysis of recordings |
Example tools | Conveo, Outset, Listen Labs, GetWhy | Dovetail, Marvin (analysis), Otter.ai |

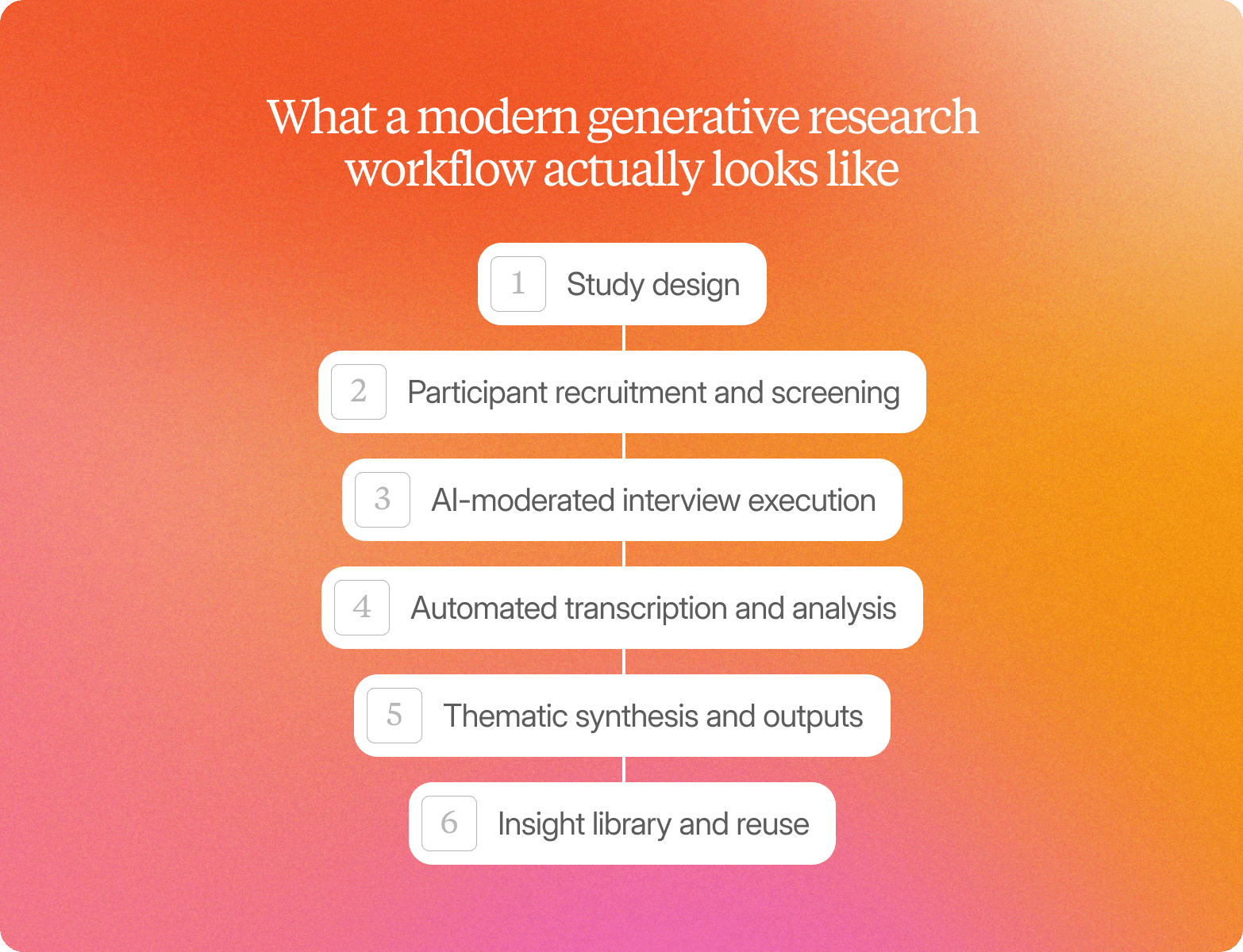

What a modern generative research workflow actually looks like

A modern workflow using AI user research tools typically follows a structured sequence from study setup to reusable insights. Conveo supports this workflow end-to-end rather than covering only one stage of the research process.

Study design

Define research goals, research plans, and interview guides aligned to key questions and pain points.

Participant recruitment and screening

Select qualified research participants using filters based on role, user behavior, or product experience, with panel access and incentive management handled inside the platform.

AI-moderated interview execution

Run parallel user interviews with an AI interviewer that asks adaptive follow-up questions, scaling interview volume without adding human moderators.

Automated transcription and analysis

Apply natural language processing, sentiment analysis, and AI-powered transcription to interview recordings and qualitative feedback as sessions complete.

Thematic synthesis and outputs

Identify patterns, surface key themes, and generate actionable insights from research sessions with stakeholder-ready summaries and clips.

Insight library and reuse

Store research findings in a searchable research repository so insights accumulate across studies instead of resetting between projects.

This is where Conveo differs most from point solutions: it supports the full workflow, from participant recruitment through to a compounding insight library.

How to choose the right generative research tool for your team

The right generative research tool directly affects how fast your team can move from user interviews to decisions, how much manual work stays in your research workflows, and whether research findings actually reach stakeholders when they need them. Choosing well means fewer repeated studies, less time spent organizing qualitative data, and faster access to actionable insights your team can use immediately.

For teams running continuous discovery or frequent qualitative programs, platforms that support the full workflow from study setup through insight reuse make the biggest difference in your team’s daily research speed, consistency, and impact. That’s where end-to-end systems like Conveo stand out.

Start with your primary use case - interview execution, analysis, or end-to-end

The right choice depends on where AI should first support your research process.

Some teams need help conducting user interviews at scale. Others need faster analysis tools for existing interview recordings.

Teams running continuous UX research programs often need a user research platform that supports the full lifecycle from study setup through insight reuse.

Matching your primary workflow gap to the right category of AI tools makes it easier to compare vendors and avoid paying for features your research teams will not use.

If your primary need is… | Look for… | Tools that fit |
--- | --- | --- |
Running AI-moderated interviews at scale | Adaptive probing, async video, panel access, language breadth | Conveo, Outset, Listen Labs, GetWhy |
Analyzing existing recordings faster | AI-powered transcription, auto-coding, collaboration tools, research repository | Dovetail, Marvin (analysis layer) |
Full workflow from study setup to insight library | End-to-end coverage, stakeholder access, insight compounding, compliance | Conveo |
Continuous UX and product discovery | Lightweight setup, fast research sessions, product integrations | Maze, Ballpark, Outset |
High-volume video feedback at consumer scale | Large panels, sentiment analysis, scalable video capture | Voxpopme, Listen Labs |

Match tool depth to your research cadence

Research cadence should shape how you evaluate user research AI tools.

Teams running a few structured studies each year often benefit from lightweight AI-powered tools that reduce repetitive tasks such as transcription, tagging, and synthesis.

Teams running continuous discovery programs need infrastructure that supports insight compounding across research workflows and preserves research findings beyond individual studies.

If your team runs frequent usability testing, prototype testing, or concept testing across product cycles, look for platforms that connect research sessions over time rather than treating each study as a standalone project.

Over twelve months, this difference affects insight quality, research efficiency, and total cost of ownership more than feature lists alone.

Evaluate AI moderation quality, not just AI branding

Many vendors describe themselves as AI interviewer platforms, but the moderation quality varies widely across AI systems.

The strongest AI UX research tools conduct interviews using adaptive follow-up questions, detect hesitation, and surface unexpected key themes without losing alignment to research goals.

Use the criteria below during vendor demos to evaluate how well a platform supports real-world research methods.

Evaluation criterion | What to ask/look for |
--- | --- |
Adaptive probing | Does the AI follow unexpected answers or only the guide? |
Language and cultural fluency | Is language support native, or only a translation layer? |
Participant experience | Request a live test session from the participant's side |
Moderation consistency | Does quality hold at 500 interviews as well as at 10? |
Handling of sensitive topics | Are there guardrails for distressing responses or disclosures? |

Think past the study: What happens to your insights afterward?

Most research tools support study execution well. Few support what happens to your research findings afterward.

When evaluating AI tools for user research, look for a research repository that enables cross-study search, stakeholder self-serve access, and long-term storage of qualitative data across projects.

This matters most for teams running continuous UX research workflows. Structured insight libraries help prevent knowledge decay and make it easier to revisit key moments, customer insights, and user behavior without having to repeat earlier research sessions.

Practical questions to ask vendors before signing

Question | Why it matters |
--- | --- |
Is the platform SOC 2 certified? What are your regional hosting options? | Non-negotiable for enterprise procurement and compliance |
How is participant recruitment handled, and how is fraud prevented? | Panel quality affects qualitative data validity |
What languages are natively supported (not translated)? | Critical for multi-market research workflows |
What is the typical turnaround from study launch to deliverable? | Sets expectations for research teams and stakeholders |
How do stakeholders outside research access findings? | Determines whether research findings travel across teams |
Is a pilot or trial study available before commitment? | Reduces procurement risk and improves evaluation confidence |

Choosing the right generative research tool doesn’t just improve individual studies. It shapes how quickly your team can learn from customers, how reliably insights reach stakeholders, and whether research becomes a continuous capability instead of a one-off activity. That’s why it’s worth looking closely at how Conveo approaches the full research lifecycle in practice.

Why Conveo is the best generative user research tool in 2026

Most generative research tools improve one stage of the research process. Conveo improves the entire lifecycle, from interview setup to insight reuse across teams.

Instead of treating AI interviews as a standalone capability, Conveo helps your team run continuous qualitative research that compounds over time. Studies connect. Evidence stays traceable. Findings remain accessible long after a single project ends.

That changes what your research function can deliver day to day:

Run AI-moderated interviews without scheduling bottlenecks

Generate structured themes automatically from real participant conversations

Preserve insights in a searchable library instead of losing them after reporting

Give stakeholders direct access to clips, evidence, and patterns they can act on

For insights teams responsible for ongoing discovery, journey understanding, or product direction, this kind of end-to-end workflow makes research faster to run and easier to reuse across the organization.

Want to see how that can work for your team? Book a walkthrough today.

Frequently Asked Questions

Will participants open up to an AI moderator the way they would with a human?

How do AI-moderated platforms handle hallucination in analysis and synthesis?

Can AI moderation capture non-verbal cues the way a trained human moderator can?

How do generative research platforms protect sensitive participant data?

Which type of generative research tool is right for teams that already have recordings?

How does AI moderation quality scale? Does it hold up across hundreds of interviews?