TL;DR

Survey-only VOC programs tell you what customers rated, not why they rated it. Without an interview guide, a coding sheet, and a stakeholder-ready report structure, a voice-of-the-customer template produces data that is hard to act on and even harder to defend in a business review.

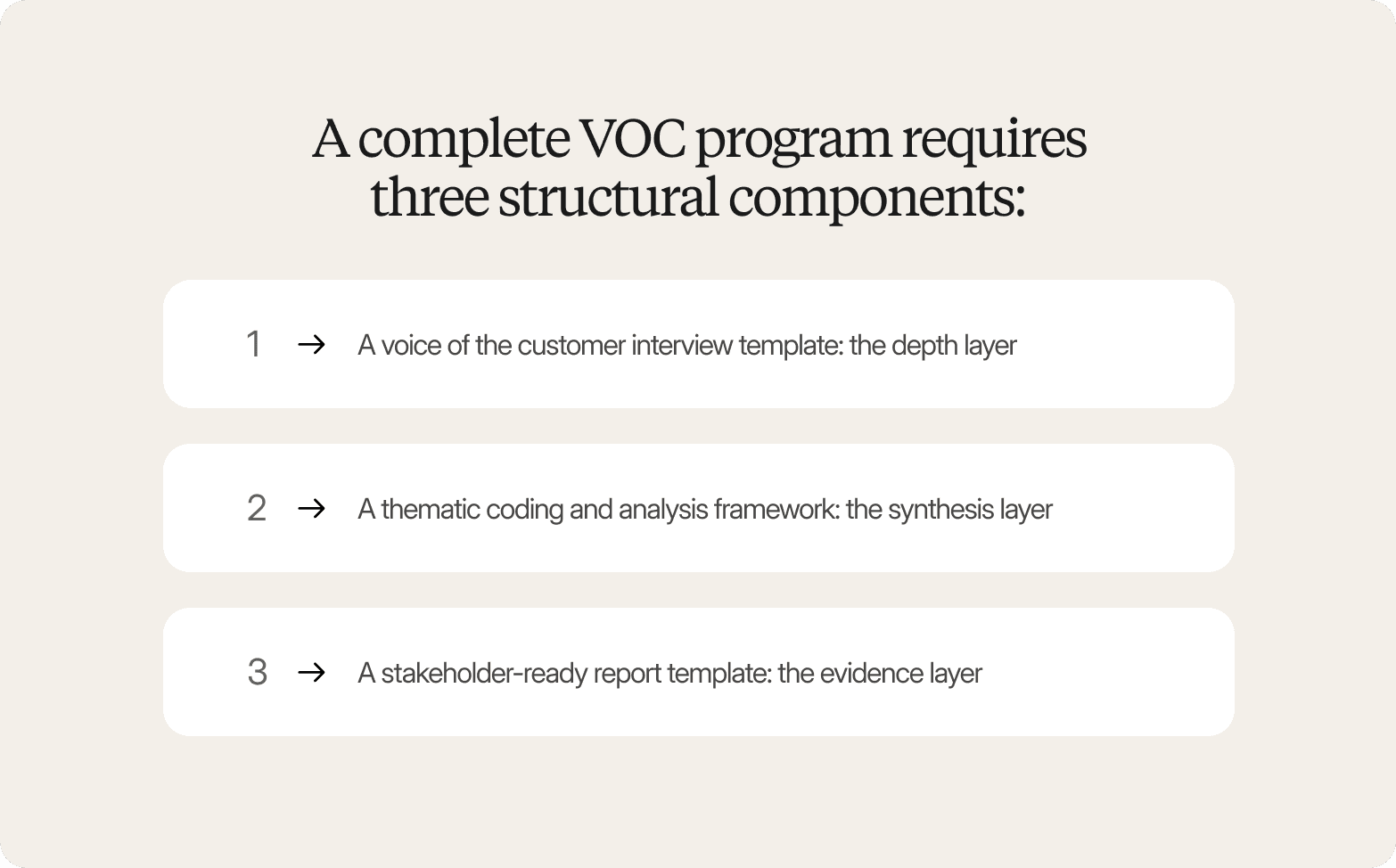

A complete VOC template covers three layers: the discussion guide that shapes what you hear, the coding framework that organizes what participants say into themes, and the reporting structure that connects findings to decisions stakeholders need to make.

Traceability is what separates credible VOC findings from those that get questioned in the room. When every theme links back to a specific participant quote or video clip, stakeholders can inspect the evidence rather than accept a summary on faith.

Qualitative interviews are what make continuous VOC feasible. When interviews are AI-moderated and analysis is structured from the start, teams can run VOC monthly or in response to specific business triggers. Platforms like Conveo are built specifically to make that cadence practical at enterprise scale, without agency timelines or a separate moderation vendor.

3 Things a Voice of the Customer Template Actually Needs to Include

Most voice-of-the-customer templates in circulation are, at their core, feedback-tracking logs. They give teams columns for customer satisfaction scores, feedback categories, and action item owners. They don't provide teams with a structure for understanding why customers feel the way they do, which is the only part that actually changes decisions.

A voice-of-the-customer survey template captures what customers said through direct feedback and open-text responses. It does not capture what they meant, what they hesitated over, or what they contradicted between sentence one and sentence three. Satisfaction fields and sentiment categories organize data. They do not synthesize it into understanding.

A complete VOC program requires three structural components, each doing a different job:

1. A voice of the customer interview template: the depth layer

A structured discussion guide built around open-ended questions, probing logic, and topic sequencing. It is a framework that guides a real conversation, one that can follow a thread when a participant says something unexpected, surfacing the deeper insights that rating scales alone cannot reach. Without this component, a VOC program collects stated opinions. With it, it uncovers motivations.

2. A thematic coding and analysis framework: the synthesis layer

Raw feedback from interview outputs needs a structured process for identifying recurring needs, tensions, and patterns across participants. The goal is to identify common themes across customer segments so findings are actionable rather than anecdotal.

3. A stakeholder-ready report template: the evidence layer

Findings need placeholders for direct quotes, video clips, and source references, not just summary bullets. A report structure that forces evidence linking builds credibility into the output rather than leaving the presenter to defend findings on the spot.

The reason most VOC programs underdeliver is not data volume. It is that organizational silos between support, marketing, and product mean customer data lives in separate systems, formatted in incompatible ways. A VOC template that does not force cross-functional inputs into a shared structure will always produce a partial picture.

These three components are not a checklist. They are the architecture of a program that can actually answer the question a VOC effort is supposed to answer: not what customers said, but what they need and why.

VOC Approaches Teams Default To (And Their Tradeoffs)

Most VOC programs fail not because teams stop caring about the customer's voice, but because the methods they default to create structural constraints that make continuous listening impractical.

Voice of customer templates and static surveys are fast to deploy, produce customer satisfaction scores that fit neatly into a dashboard, and require no moderation overhead. But knowing that customers are dissatisfied with a feature tells you nothing about why, what they would prefer, or whether the frustration is a deal-breaker. Voice of customer templates produce data that describes a problem without explaining it. Teams end up with a score they cannot act on, and a stakeholder asking a question the VOC surveys weren't designed to answer.

Point platforms for transcription and analysis are genuinely useful for synthesis, but they sit in the middle of a workflow that still requires separate platforms for recruitment, interview execution, and stakeholder reporting. Handoffs across multiple channels, from recruitment vendor to moderation platform to transcription service to reporting deck, introduce a delay that compounds until the program reverts to a periodic exercise.

Agency-commissioned qualitative research delivers the depth that online surveys and point platforms cannot. The tradeoff is time. A well-run agency study takes six to twelve weeks from brief to delivered findings. Teams run studies quarterly, not because quarterly feedback is sufficient, but because the timeline makes anything more frequent operationally impossible.

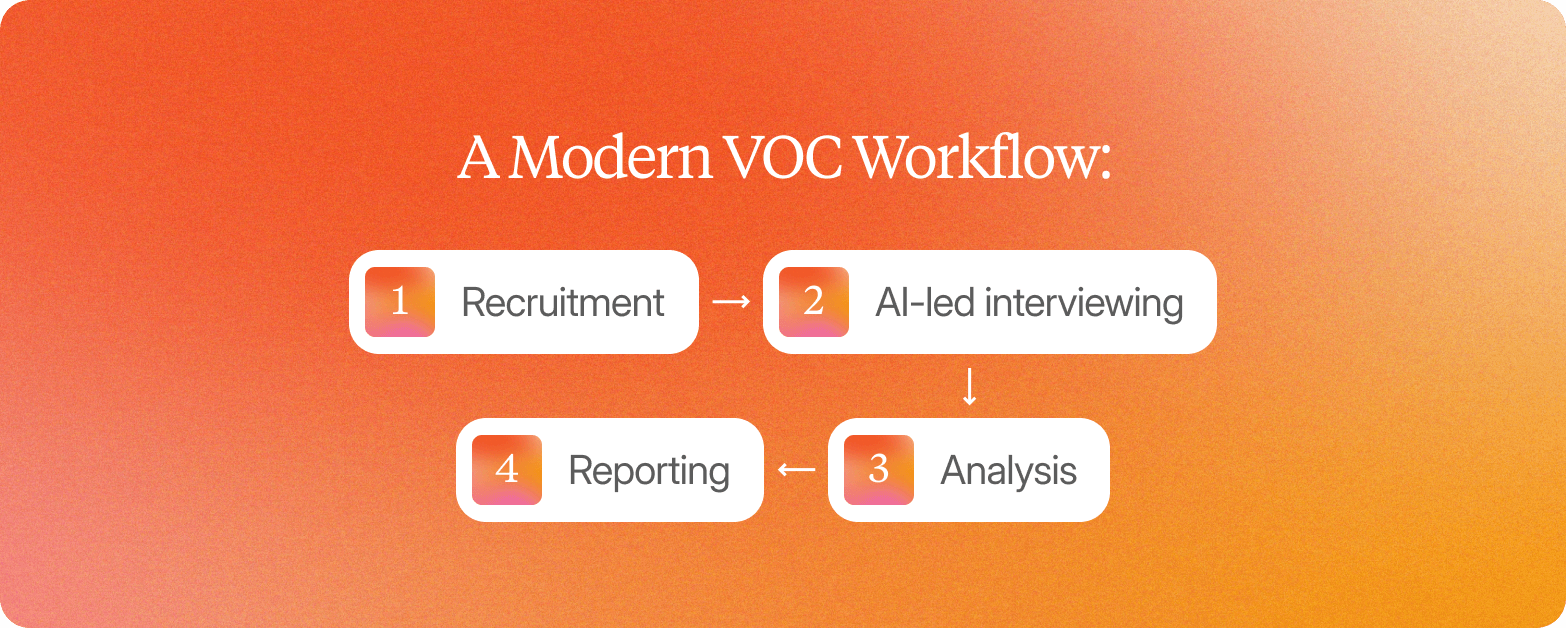

Conveo is built to close all three gaps in a single workflow. Recruitment, AI-led video interviewing, multimodal analysis, and stakeholder-ready reporting run inside one platform, eliminating the handoffs that make fragmented approaches unsustainable at continuous cadence.

Modern VOC Workflow: Speed at Scale Without Sacrificing Depth

Traditional VOC programs run on quarterly cycles, not because that cadence serves the business, but because the operational mechanics of qualitative research made anything faster impractical. Those constraints are dissolving.

The modern VOC workflow replaces the quarterly brief-to-report sequence with a continuous cycle. The key benefits extend beyond faster delivery: customer understanding compounds with every cycle rather than resetting with each new brief.

Recruitment

Access to vetted global panels across 50+ languages eliminates the two-to-four week sourcing delay. Screeners run automatically. Fraud filters apply before interviews begin. What used to require weeks of agency coordination to gather feedback from the right customer segments now takes hours.

AI-led interviewing

The voice of the customer interview template in Conveo is not a rigid script. The AI interviewer adapts its probing based on what participants say in their own words, following threads that a scripted survey closes off. This adaptive follow-up approach surfaces behavioral data and emotional tone that structured VOC surveys cannot capture. Sessions run asynchronously, so hundreds of conversations are completed in parallel with no moderator scheduling required.

Analysis

As recordings land, Conveo transcribes, translates, and codes every session. Multimodal signals (tone shifts, facial reactions, and visible objects) are layered into the analytics data alongside speech. Weeks of manual synthesis to identify common themes are compressed into days.

Reporting

Outputs include video clips, verbatim quotes, and structured themes with traceable evidence trails. There are no black-box summaries, no unsourced assertions.

The compounding library is what separates continuous VOC from running the same study repeatedly. Every insight flows into a searchable repository. Themes connect across cycles. Contradictions are flagged. Conveo functions as institutional memory; the organization's customer understanding deepens with each cycle rather than resetting. And because Conveo uses real participants in real video conversations rather than avatars or synthetic respondents, every finding can be traced back to a real human voice.

How to Make VOC Findings Traceable (Not Black-Box Summaries)

The most common reason VOC programs lose stakeholder credibility is not bad fieldwork. It is the inability to show your work. When a C-suite leader asks, "Where does this finding come from?" and the answer is "the AI summarized it," the conversation stops. That is a traceability problem.

Generic AI synthesis platforms generate summaries that are disconnected from the conversations that produced them. For enterprise stakeholders who have been burned by AI-generated outputs before, an unsourced summary and a fabricated one are indistinguishable. Both fail the credibility test.

Traceable VOC findings have a specific structure: every theme links to specific quotes, and every quote links to the source conversation, timestamp, participant ID, and video clip. This is what a voice of the customer template built for enterprise stakeholders should contain: evidence placeholders that map feedback directly to the source conversation, showing the chain from raw feedback to customer insight.
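As a rough illustration, the evidence chain described above (theme to quote to source conversation) can be modeled as a small data structure with a check that flags any theme lacking complete evidence. This is a minimal sketch, not Conveo's data model: the class names, field names, IDs, and clip path are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Quote:
    """One verbatim quote plus the pointers needed to find it again."""
    text: str
    participant_id: str
    conversation_id: str
    timestamp_s: float  # offset into the recording, in seconds
    clip_ref: str       # hypothetical pointer to the video clip


@dataclass
class Theme:
    name: str
    quotes: list[Quote] = field(default_factory=list)


def untraceable(themes: list[Theme]) -> list[str]:
    """Return names of themes with no quotes, or with any incomplete quote."""
    flagged = []
    for theme in themes:
        complete = [
            q for q in theme.quotes
            if q.text and q.participant_id and q.conversation_id and q.clip_ref
        ]
        if not complete or len(complete) != len(theme.quotes):
            flagged.append(theme.name)
    return flagged


# Illustrative data only.
themes = [
    Theme("Onboarding confusion", [
        Quote("I didn't know which step came next.",
              "P-014", "conv-0031", 412.5, "clips/conv-0031-412.mp4"),
    ]),
    Theme("Pricing anxiety"),  # a summary bullet with no evidence attached
]

print(untraceable(themes))  # ['Pricing anxiety']
```

Running a check like this before a report ships makes the "where does this finding come from?" question answerable for every theme, not only the ones the presenter remembered to source.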

Conveo is built around this chain. Every AI-generated finding links back to the original video context. Stakeholders can click through from a thematic cluster to the specific participant moments that produced it: the real conversation, in the participant's own words, with tone and facial expression intact. Research consistently finds that participants report greater candor with AI interviewers than with human moderators, particularly on sensitive topics, meaning the traceable evidence may reflect more accurate customer sentiment than a traditionally moderated study would have captured.

For enterprise teams, traceability also has a procurement dimension. Conveo holds SOC 2 certification, is GDPR compliant, and offers EU regional data hosting, the conditions under which enterprise legal and procurement teams will allow sensitive customer data to flow through a research platform. Many competitors in the AI interview space have not confirmed equivalent compliance infrastructure, which creates a structural barrier that no feature list resolves.

Role-Based VOC Templates: What Different Teams Actually Need

Most VOC templates assume one set of questions, one output format, and one definition of actionable. The result is a VOC template that fits no role particularly well. A strong VOC program requires matching the data collection approach to the team's specific decision context.

Insights and CMI teams need discussion guides with adaptive follow-up questions, coding frameworks that produce themes traceable back to specific participant moments, and report structures that hold up in a board-level review. The VOC template for this team prioritizes evidence trails and cross-study continuity: findings from this wave should connect to patterns from previous studies, surfacing meaningful improvements rather than isolated data points. Customers feel heard when their feedback demonstrably shapes decisions, and that loop only closes when findings are traceable.

Product and UX teams need lightweight, repeatable research workflows that fit sprint cycles, often combining usability testing with qualitative interviews to understand both user behavior and the reasoning behind it. The output is a structured set of themes covering feature requests and pain points that the team can act on before the next planning cycle closes. In-app surveys can serve as a trigger layer, with Conveo interviews deployed as a follow-up when a customer segment shows unexpected behavior.

Brand and Innovation teams need consumer input on specific decisions: concepts, messaging, packaging, and creative direction. The first VOC template for this team should be built around a clear stimulus-response structure, helping potential customers react to ideas before significant resources are committed. Outputs are shareable highlight reels and theme summaries, not methodology reports.

Segment feedback by role and decision context, and the research starts producing findings that get used rather than filed.

4 Common VOC Template Failure Modes (And How to Avoid Them)

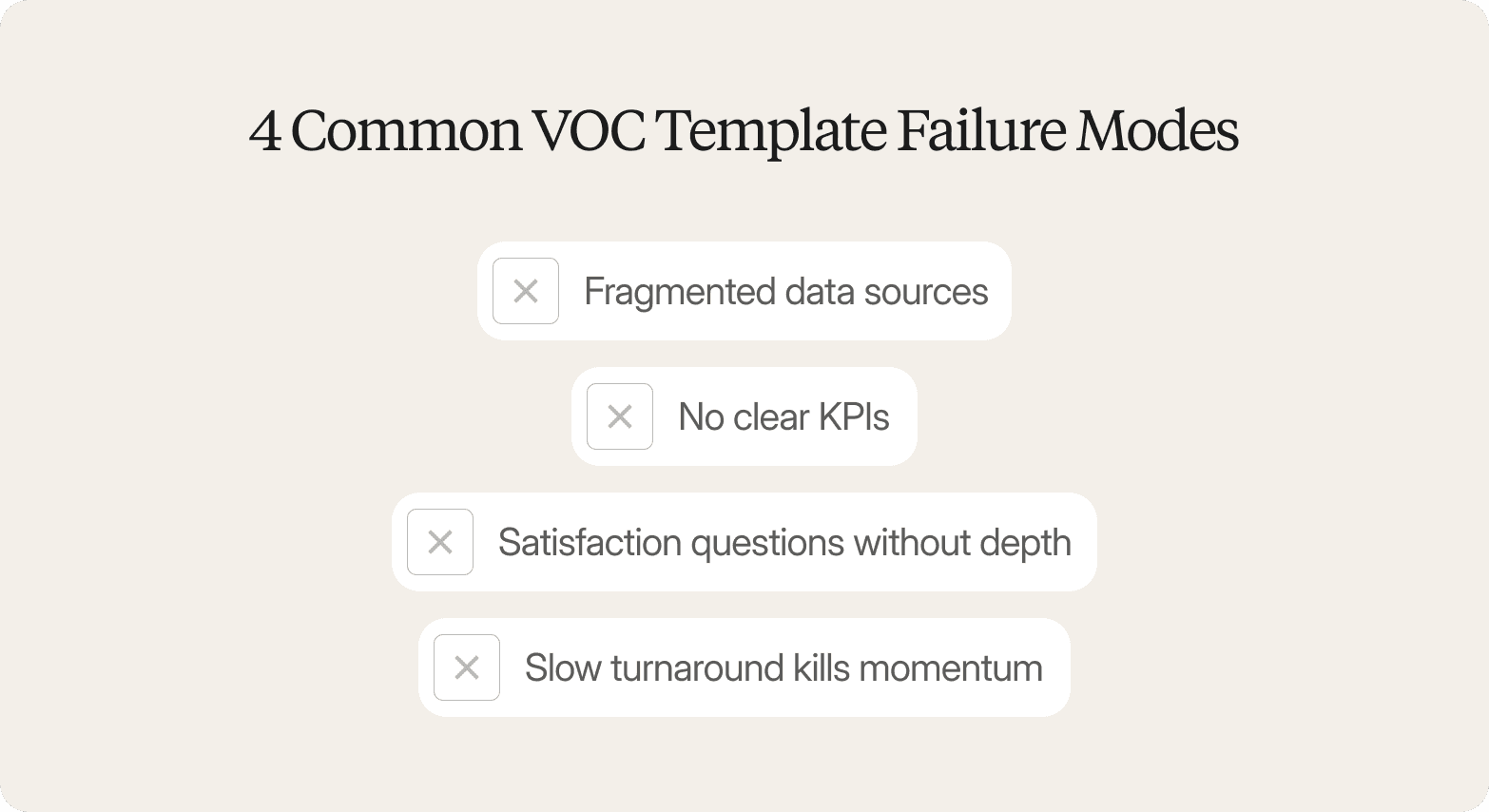

Most VOC programs fail because the program design guarantees the same problems recur every cycle. Four structural failure modes account for the majority of voice of the customer templates that stall or lose stakeholder confidence.

Fragmented data sources

When surveys, support tickets, and review platforms live in separate systems, no one can map feedback or see consistent themes across them. The fix is integrating qualitative interviews into the VOC program as a primary source of depth that connects and contextualizes what other channels surface.

No clear KPIs

A customer satisfaction score or net promoter score alone does not prove a VOC program is working. Tracking theme frequency and intensity alongside quantitative metrics, including the customer effort score where relevant, gives teams the data-driven framework they need to show impact.
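One way to make theme frequency and intensity concrete is sketched below. The definitions are assumptions, since the article does not specify them: frequency is taken as the share of interviewed participants who raised a theme at least once, and intensity as the mean of a 1 to 5 rating a coder assigns to each excerpt. The data and the function name are illustrative.

```python
from collections import defaultdict

# Each coded excerpt: (participant_id, theme, intensity on an assumed 1-5 scale).
coded = [
    ("P-01", "slow support", 4),
    ("P-01", "slow support", 5),
    ("P-02", "slow support", 3),
    ("P-02", "confusing pricing", 2),
    ("P-03", "confusing pricing", 4),
    ("P-04", "slow support", 5),
]


def theme_metrics(rows, n_participants):
    """Compute per-theme frequency and mean intensity from coded excerpts."""
    participants = defaultdict(set)  # theme -> participants who raised it
    scores = defaultdict(list)       # theme -> intensity of every excerpt
    for pid, theme, intensity in rows:
        participants[theme].add(pid)
        scores[theme].append(intensity)
    return {
        theme: {
            "frequency": len(participants[theme]) / n_participants,
            "mean_intensity": sum(scores[theme]) / len(scores[theme]),
        }
        for theme in participants
    }


metrics = theme_metrics(coded, n_participants=4)
for theme, m in metrics.items():
    print(f"{theme}: frequency={m['frequency']:.2f}, "
          f"mean intensity={m['mean_intensity']:.2f}")
# slow support: frequency=0.75, mean intensity=4.25
# confusing pricing: frequency=0.50, mean intensity=3.00
```

Counting participants rather than excerpts keeps one very vocal interviewee from inflating a theme's frequency. Tracked wave over wave, these two numbers can sit next to CSAT or NPS in the same review.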

Satisfaction questions without depth

The classic voice of the customer survey template asks "how satisfied are you?" on a five-point scale. That confirms whether a problem exists; it does not explain what drives it. Open-ended conversational research produces actionable insights that rating scales cannot. Negative feedback in particular is where qualitative depth pays off: knowing a customer is dissatisfied is far less useful than knowing exactly what they would change and why.

Slow turnaround kills momentum

When synthesis takes four to six weeks, the finding arrives after the decision it was meant to inform. Platforms that cover recruitment, interviewing, and analysis in a single workflow remove the handoffs that create those delays.

The design has to change. Running a structurally flawed voice of the customer template more diligently will still produce the same gaps.

How Conveo Supports VOC Programs at Enterprise Scale

Over 400 enterprises, including Google, Reddit, and Bosch, use Conveo to move from periodic satisfaction tracking to continuous customer intelligence.

Study design and recruitment

Teams design qual, quant, or mixed-method studies from a single workspace, define participant criteria, add a custom screener, and launch to vetted global panels across 50+ languages. Fraud filtering and incentive handling are built in. Teams looking to pilot test a new research approach or run their first VOC template can be in the field in hours.

AI-led interviewing

Participants meet an AI interviewer that listens, probes, and uses follow-up questions based on what they actually say. Sessions run asynchronously, so ten or a thousand conversations can complete in parallel, gathering structured feedback from customer segments across markets. Research shows 83% of respondents report greater honesty with AI interviewers compared to human moderators, meaning VOC findings reflect more candid responses, including direct feedback on sensitive topics and negative feedback that traditional moderated studies tend to smooth over.

Multimodal analysis

Conveo automatically transcribes, translates, and codes every session. Multimodal analysis captures tone shifts, facial expressions, hesitation, and on-screen context that transcripts miss, surfacing thematic clusters and highlight reels tied directly to research objectives. This is where behavioral data and emotional tone combine into deeper insights than any feedback form or online survey can produce.

Traceable, stakeholder-ready outputs

Every theme links to specific quotes. Every quote links back to the source video with timestamp and clip intact. Customers feel heard because their actual words reach decision-makers directly, not through a synthesized summary.

Everything flows into a secure insight library protected by SOC 2 certification, GDPR compliance, and EU regional data hosting. Each VOC cycle builds on the last: themes connect across studies, contradictions surface automatically, and customer relationships deepen as organizational understanding compounds with every wave of research.

Conveo is built for research teams that run continuous VOC programs and expect traceability. It is not the right fit for one-time studies or teams without a dedicated research function.

Frequently Asked Questions

What Is a Voice of Customer Template Six Sigma?

What Should a Voice of Customer Template Excel Include?

What Is a Voice of Customer PowerPoint Template Used For?

How Do You Create a Voice of the Customer Survey Template?

What Is the Difference Between a Voice of Customer Template and a Customer Feedback Form?

Can I Use a Voice of Customer Template for B2B Research?