TL;DR

The 6–12-week agency research cycle is no longer the constraint it once was. Enterprises using AI-moderated qualitative research are already operating outside it, running continuous consumer intelligence programs that reveal consumer behavior and inform brand strategy in days, not quarters. Understanding how enterprises use consumer intelligence for brand strategy starts with recognizing that the old timeline was a structural limitation rather than a methodological requirement.

The operational problem most brand teams face is not a shortage of market research. It is research that arrives too late and lives in the wrong place. Brand positioning decisions are made before agency findings are returned. Consumer insights that do get delivered sit in slide decks, disconnected from the next brief and invisible to anyone who wasn't in the readout.

Enterprises that solve this build searchable repositories: consumer intelligence that compounds across studies, connects to real conversations, and can be queried by brand teams to surface actionable insights without having to file a new research request.

This article explains the enterprise operating model for consumer intelligence in brand strategy: who owns it, how it connects to brand decisions, and what it takes to move from periodic reports to continuous learning.

Consumer Intelligence Pain Points: Why Insights Stay Trapped in Research Teams

Brand strategy decisions rarely wait for research to catch up. A positioning review, a campaign brief, a packaging refresh: each one carries a decision window that closes whether or not consumer understanding is ready. Agency-led qualitative market research typically takes six to twelve weeks from brief to deliverable, moving through recruitment, screener design, moderation, transcription, analysis, and report writing before a single finding reaches a stakeholder. By the time the deck lands, the brief has already been answered by assumption.

An insights team commissions a study, waits on agency delivery, receives a polished presentation, presents it internally, and files it in a shared drive. That drive becomes a graveyard. Customer research findings that took weeks and a significant budget to produce are effectively unsearchable six months later. No tagging, no cross-study synthesis, no way to surface what consumers said about a related topic in a prior project.

The consequence is a recurring failure point in using consumer intelligence for brand strategy. When a brand team needs to validate a positioning angle, test a messaging direction, or understand how a new concept lands against existing brand equity, they face a binary choice: commission a new study and miss the decision window, or proceed without evidence. Most teams, under pressure, choose the latter. The research function becomes reactive rather than generative, and real customer pain points go unaddressed.

Consider a brand manager validating a sustainability messaging angle before a campaign brief is finalized. Three previous studies touched on sustainability attitudes. None of those findings is accessible in a form that answers the current question. They exist in separate decks, coded differently, owned by different project leads, with no mechanism to connect them. The historical data exists. The organization just cannot use it. This is not a technology failure. It is an infrastructure failure: consumer intelligence that was never designed to compound.

The result is that insights teams, despite running substantial research programs, cannot operationalize consumer understanding across marketing, innovation, and strategy. Each function starts from zero. Each study is its own island. The organization keeps paying for knowledge it already owns but cannot use.

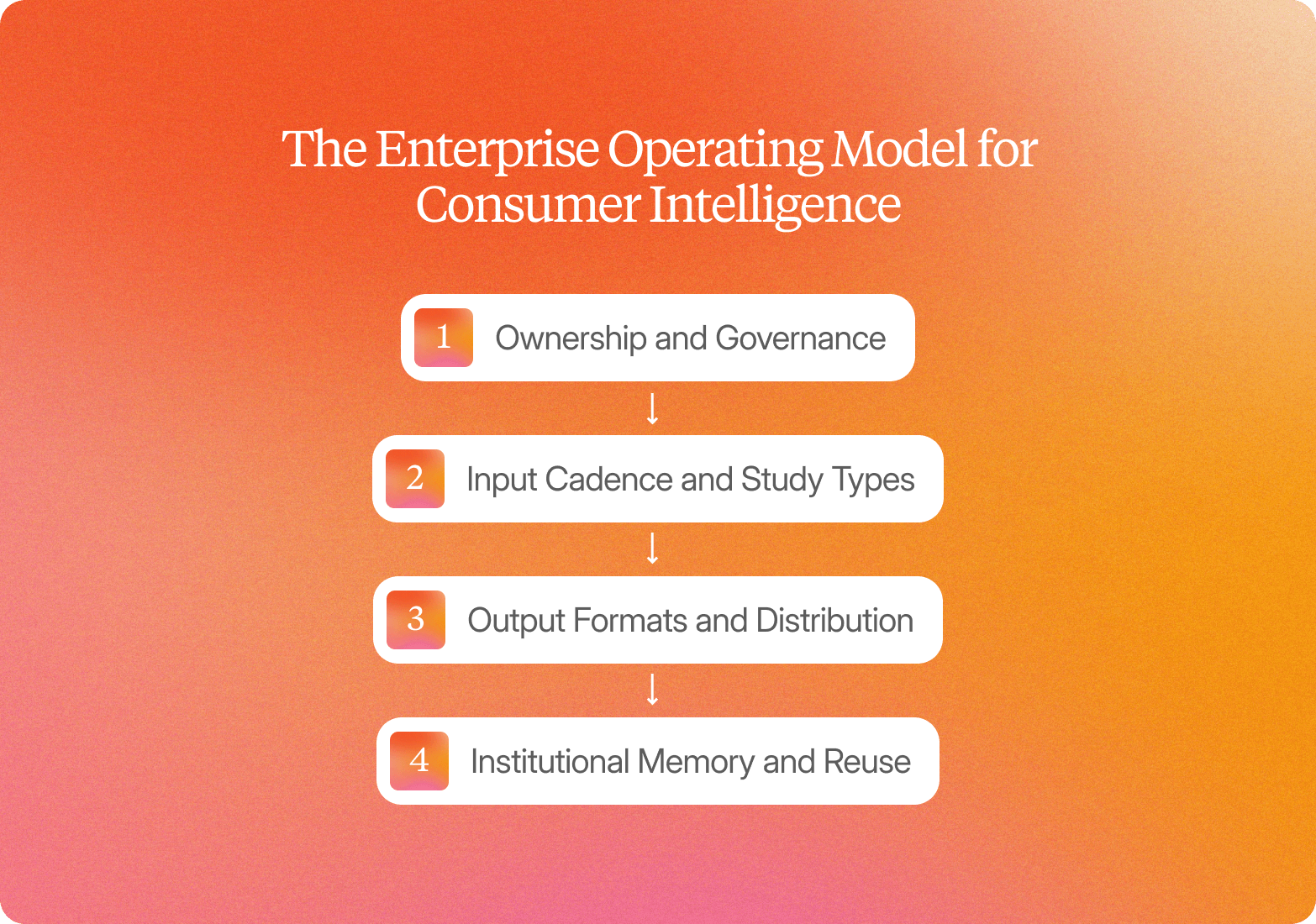

The Enterprise Operating Model for Consumer Intelligence

Enterprises that treat consumer intelligence for brand strategy as infrastructure rather than as a project look structurally different from those that don't. Research has a clear owner, recurring workflows are built into the planning calendar, and findings are accessible across functions rather than locked inside a single team's shared drive. The 6–12-week agency model was never an inherent limitation of qualitative research. It was the product of sequential, manual steps: briefing an agency, waiting on recruitment, scheduling moderation, then waiting again for analysis and reporting. Remove those steps, and the constraint disappears.

Ownership and Governance

Most enterprise consumer intelligence functions are owned by consumer and market insights (CMI), insights, or brand strategy teams. In practice, two structural models exist. Centralized teams own the research calendar and run every study on behalf of marketing, product, and innovation stakeholders. Federated models give individual brand or category teams the ability to commission their own research using shared infrastructure, with the insights team setting methodology standards and owning the insight repository. Neither model is inherently better. The question is whether the infrastructure supports both without creating parallel silos.

Defining a customer intelligence strategy at the outset matters: who runs studies, who owns the repository, and how findings reach marketing and product teams. That decision determines whether consumer intelligence functions as organizational infrastructure or a departmental service.

Input Cadence and Study Types

The shift from quarterly agency studies to continuous research means brand decisions no longer wait for a research cycle to close. Concept testing, message testing, brand perception tracking, competitive positioning research, and packaging validation run in parallel with the decisions they're meant to inform. A campaign team testing three messaging directions and exploring customer preferences across audience segments doesn't need to wait for the next agency engagement. The study launches the same week the brief is written.

Output Formats and Distribution

Static decks have a short shelf life. They capture findings at a point in time, but they don't travel well across teams, they can't be searched, and they don't connect to anything that came before. Structured repositories that store video clips, verbatim quotes, and tagged themes alongside their source conversations change how findings are used. A brand strategist can search for "price sensitivity in the 35–50 segment" and return sourced responses from three studies across two markets, rather than requesting a new study or waiting for the insights team to surface it manually. For business leaders who need evidence to act on market and industry trends, that kind of direct access is what moves consumer insights into decisions.

Institutional Memory and Reuse

This is where operating model design separates platforms from point solutions. Conveo's insight library is built to compound. Every study that runs flows into a searchable repository where findings are tagged, linked to the source video, and connected to prior studies. When new evidence contradicts an earlier assumption, the platform surfaces that conflict rather than burying it. Over 400 enterprise teams, including Reddit, Google, and Bosch, rely on Conveo's insight library to query findings in plain language and get sourced answers without commissioning new research or waiting on the insights team.

How Consumer Intelligence Connects to Brand Strategy Artifacts and Marketing Campaigns

Most research processes stop at "here's what consumers said." The gap between that output and a finished brand strategy artifact (a positioning territory, a message hierarchy, or a creative brief) is where consumer intelligence for brand strategy either compounds into decisions or dies in a deck.

That gap is a translation problem, not a data problem. Research outputs describe what consumers said. Brand strategy artifacts require interpretation: which phrases become positioning claims, which emotional patterns become creative territories, and which unmet consumer needs become the lead message in a campaign brief. Bridging that gap requires a deliberate workflow, not just better analysis of consumer data.

The workflow moves in four steps: identify the brand artifact and the decision it needs to serve; query the insight repository for relevant evidence; extract specific quotes, themes, or clips that support or challenge the proposed direction; and embed that evidence directly into the artifact with traceability back to the source so any stakeholder can inspect the reasoning.

Three illustrative examples show what this looks like in practice:

Positioning territory development

Consumer intelligence reveals that sustainability-conscious buyers describe a brand as "trying but not credible." That phrase surfaced across multiple interviews and was tagged to specific video moments. The evidence shifted the positioning direction from "sustainability leader" to "transparency and progress." Understanding consumer sentiment at this level of specificity requires real conversations, not survey data.

Message hierarchy

Consumers consistently prioritize "works fast" over "gentle formula" in pain relief interviews. The evidence reorders the message hierarchy in campaign briefs, moving efficacy above tolerance as the lead claim. The brief now cites specific participant quotes and the frequency with which that priority appeared across segments.

Creative brief development

Video clips show consumers describing a product as "reliable but boring." The emotional tone in those clips, not just the words, informs the creative brief's direction: "dependable confidence" rather than "exciting innovation." The brief links directly to the source clips so the creative team can watch the moments that shaped the direction.

Every brand strategy claim built this way carries its evidence with it. Stakeholders can challenge brand positioning, question the message hierarchy, or push back on the creative direction. They do so with consumer evidence in front of them, not competing opinions.

Continuous vs. Periodic Consumer Intelligence

Most enterprise teams run qualitative research on a periodic cycle: a quarterly brand tracker, an annual segmentation study, and a concept test commissioned when the innovation calendar demands it. That model made sense when fieldwork required agency coordination, moderator scheduling, and weeks of manual processing. It no longer fits how brand decisions actually get made.

Campaign launches, concept pivots, and competitive responses happen monthly or weekly. Periodic research operates on quarterly or annual cycles. The gap between those two rhythms is where decisions get made without current consumer input: not because teams don't care, but because the research infrastructure cannot keep pace with the business. Customer loyalty, customer retention, and customer satisfaction depend on brands that understand what consumers want now, not what they wanted last quarter.

The shift to continuous consumer intelligence closes that gap by changing the underlying architecture of how research runs. Instead of sequential, agency-dependent studies that start and stop, parallel async interviewing runs 10 to 1,000 conversations simultaneously, across markets and languages, without moderator scheduling constraints. Study volume increases without adding headcount. This is a specific capability, not a generic industry claim. Most research workflows are still built around sequential fieldwork. Parallel async interviewing at enterprise scale is not yet standard practice, and it is the core of how Conveo is built to operate.

The second structural change is what happens between studies. Most research programs treat each project as a standalone: the deck gets presented, filed, and largely forgotten. Conveo's insight library inverts that model. Every interview, theme, clip, and data point flows into a searchable repository that compounds across projects. Past evidence is not archived. It is actively reused.

Brand teams query the repository before drafting positioning statements, compare current findings to previous studies to track perception shifts, and trace claims back to specific video moments across multiple waves of research. When new evidence contradicts earlier findings, both sets of clips sit side-by-side so teams can determine whether consumer sentiment shifted, the sample changed, or the question framing differed.

Continuous intelligence is not a faster version of periodic research. It requires end-to-end workflow integration: study design, recruitment, interviewing, analysis, and reporting in a single platform that removes the manual processes and agency dependencies that make periodic research the default. Without that integration, speed gains in one stage are absorbed by bottlenecks in the next.

5 Ways to Make Customer Feedback and Consumer Intelligence Stakeholder-Trustworthy

Stakeholders shelve consumer intelligence findings for one reason: they cannot verify the claim in front of them. When a deck says "consumers associate the brand with trust," but the only support is a synthesized paragraph with no source material attached, the finding gets questioned in the room and quietly deprioritized. Customer feedback gathered through qualitative interviews carries inherent credibility that synthesized summaries cannot match, but only when that feedback is traceable and the methodology is transparent.

Trustworthy consumer intelligence is built on five mechanics, and skipping any one creates the credibility gap that sends findings to a folder rather than a decision.

Traceability to source

Every finding needs to link back to specific video clips, verbatim quotes, and participant metadata. Stakeholders should be able to inspect the evidence themselves, not trust a summary. Static decks break this chain. Platforms that surface thematic clusters alongside the original interview moments preserve it, enabling deeper insights than any summary document can deliver.

Evidence standards

Enterprises set thresholds for what counts as a validated finding: minimum participant counts, thematic saturation across independent respondents, and cross-study corroboration before a pattern becomes a strategic input. These standards prevent cherry-picked data points from carrying more weight than the data supports.
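To make the idea of an evidence threshold concrete, here is a minimal Python sketch of how a team might encode one. Everything in it is a hypothetical assumption for illustration: the `Mention` record shape, the function name, and the default values of `min_participants` and `min_studies` are not taken from Conveo or from any industry standard. Distinct participant counts stand in, loosely, for saturation across independent respondents, and distinct study counts stand in for cross-study corroboration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    """One tagged mention of a theme (hypothetical record shape)."""
    theme: str
    participant_id: str  # assumed unique across all studies
    study_id: str


def validated_themes(mentions, min_participants=5, min_studies=2):
    """Return themes that meet both hypothetical evidence thresholds.

    A theme is validated only if it was raised by at least
    `min_participants` distinct participants (repeat mentions by the same
    person count once) AND appears in at least `min_studies` distinct studies.
    """
    participants = {}  # theme -> set of participant ids
    studies = {}       # theme -> set of study ids
    for m in mentions:
        participants.setdefault(m.theme, set()).add(m.participant_id)
        studies.setdefault(m.theme, set()).add(m.study_id)
    return sorted(
        theme
        for theme in participants
        if len(participants[theme]) >= min_participants
        and len(studies[theme]) >= min_studies
    )


if __name__ == "__main__":
    mentions = [
        Mention("price sensitivity", "p1", "s1"),
        Mention("price sensitivity", "p1", "s1"),  # repeat mention, counted once
        Mention("price sensitivity", "p2", "s1"),
        Mention("price sensitivity", "p3", "s2"),
        Mention("trying but not credible", "p4", "s3"),
    ]
    print(validated_themes(mentions, min_participants=3, min_studies=2))
    # ['price sensitivity']
```

Requiring both conditions is the design point: a theme voiced loudly by many people in one study, or by one person across several, does not clear the bar on its own.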

Bias and fraud handling

For brand strategy, a single fabricated or low-quality response can mislead a positioning decision rather than averaging out statistically. Participant authentication steps, fraud filtering, attention checks, video verification, and demographic validation are the baseline for credible qualitative data at scale, not optional extras.

The synthetic respondent fault line

The research market is dividing into platforms that generate synthetic or avatar-based responses for speed and platforms that insist on real human participants for credibility. This distinction matters for enterprise procurement. Conveo's own research shows that 83% of respondents report greater honesty with AI interviewers than with human moderators, which suggests that video-first AI research does not just preserve authenticity but can actively reduce the social desirability bias that distorts findings in traditional moderated sessions.

"Conveo's video-first approach is a real differentiating methodological advantage. The ability to distill insights from reactions and not just hear answers adds context you simply can't get from transcript-only tools, or any other tool in the market for that matter."

Senior Marketing Research & Insights Manager, Google

Methodological transparency

Qualitative interviews answer why brand perceptions form. Social listening tracks volume and sentiment at scale. Sentiment analysis helps teams identify shifts in consumer attitude across digital channels and social media. Surveys measure the prevalence of attitudes you already understand. Using the wrong method produces findings that are technically accurate and strategically useless. Transparent findings state which method produced them, so stakeholders can judge whether that method fits the question being asked.

Evaluation Framework: What to Look for in Customer Intelligence Platforms

Enterprises evaluating customer intelligence platforms rarely start from scratch. Most teams have already cycled through at least two models: an agency relationship that delivered quality but took too long, a survey platform that scaled but left the "why" unanswered, or a generic AI synthesis approach that moved fast but produced outputs no senior stakeholder would stand behind. The question is not which platform performs best in isolation. It is which platform resolves the specific tradeoffs your team has already hit.

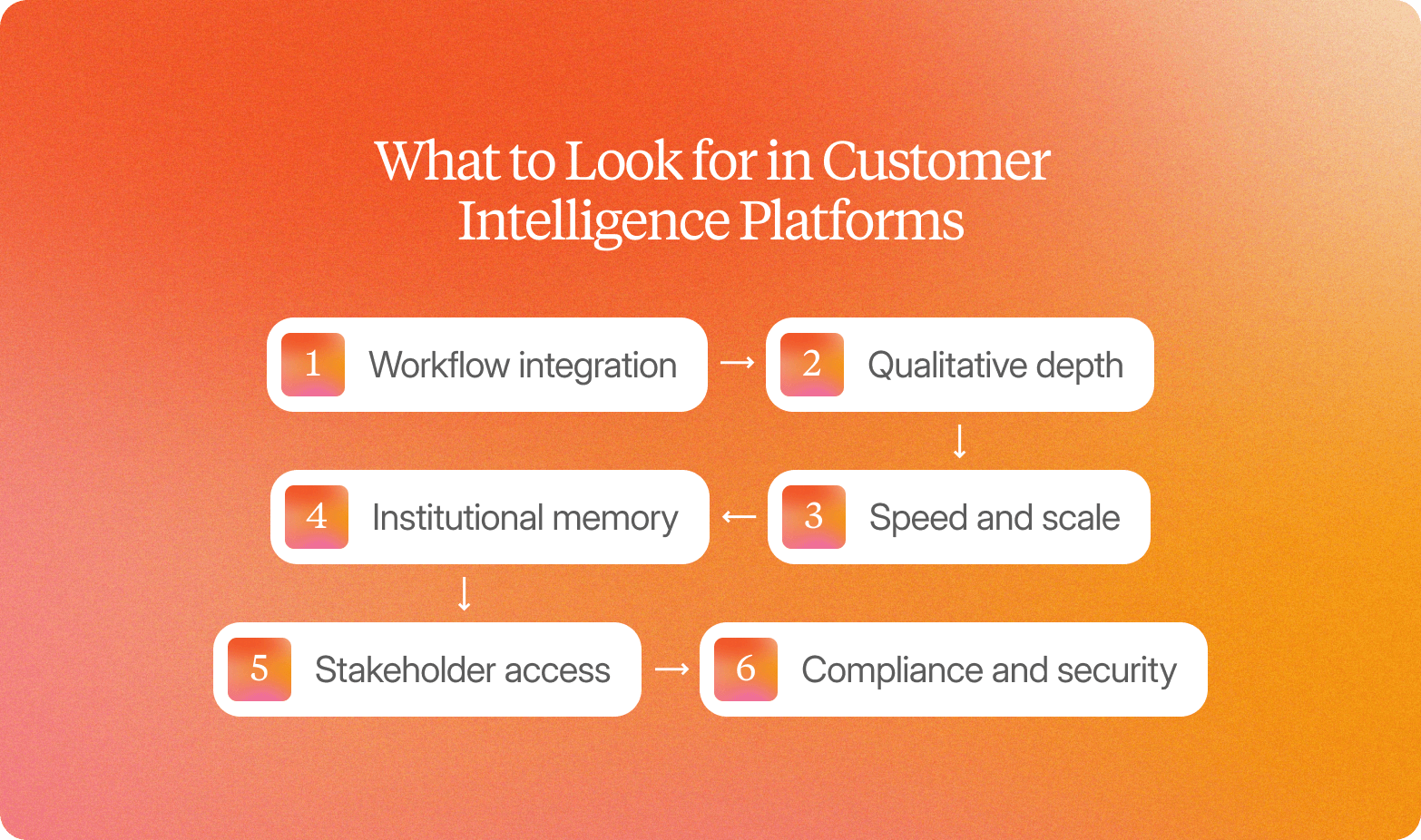

Six criteria separate platforms that serve enterprise consumer intelligence programs from those that serve individual studies.

Workflow integration

End-to-end platforms cover recruitment, interviewing, analysis, and reporting in one place. Point solutions require manual handoffs at each stage, introducing delay, version risk, and coordination overhead. A unified platform keeps customer intelligence data flowing from study design through stakeholder delivery without interruption.

Qualitative depth

Video-first interviews with adaptive probing produce the kind of depth that brand positioning and messaging decisions require: tone shifts, hesitation, and the moment a participant's language changes when a concept lands wrong. Text-chat platforms and surveys capture responses. They do not capture meaning. Video-first qualitative methodology is the benchmark for consumer intelligence, not one option among equals.

Speed and scale

Parallel asynchronous interviewing enables teams to run 10 to 1,000 conversations simultaneously, compressing timelines from weeks to days without reducing the depth of each session. This is what makes continuous research operationally viable rather than a quarterly event.

Institutional memory

Customer analytics compounds in value when findings connect across studies. Platforms that connect findings allow brand teams to compare what consumers said six months ago against what they are saying now, across markets and segments. Platforms that treat each study as a standalone create insight silos.

Stakeholder access

Research that lives in a deck reaches one meeting. Research that lives in a searchable library reaches every team that needs it. Natural-language search and plain-language access let non-research stakeholders find and reuse evidence without waiting on the insights team, so customer evidence can inform decisions at every stage of the customer journey.

Compliance and security

SOC 2 certification, EU data hosting, and GDPR-compliant infrastructure remove procurement blockers that quietly kill enterprise deals, particularly in regulated industries and European markets. Privacy protections matter especially when enterprises are collecting video data from research participants at scale. Among AI-first qualitative research platforms, Conveo's combination of all three is not yet matched by competitors. For enterprise procurement teams, this is not a footnote. It is a gate.

No platform optimizes equally across all six. Agencies lead on qualitative depth but fall short on speed, scale, and institutional memory. Survey platforms scale but cannot explore the "why" that drives brand decisions. End-to-end qualitative platforms balance depth, speed, and compounding memory, but they require internal ownership rather than outsourced delivery. Conveo comes closest to the full set. Teams who want to evaluate against these criteria can review the platform in detail at conveo.ai/product.

Conveo is built for enterprises running ongoing research programs with internal ownership. Teams with one-off research needs or no dedicated research function will get more value from a traditional agency engagement.

Common Market Research Failure Modes and How to Avoid Them

The most common reason market research programs fail to influence brand strategy has nothing to do with research quality. It has to do with how the function is structured. Enterprises invest in research infrastructure, then operate it as a closed system that serves the insights team rather than the organization.

Four failure modes account for most of this gap.

Insights team gatekeeping

When brand, marketing, and innovation teams must submit requests and wait for custom reports, the insights function recreates the agency bottleneck internally. Stakeholders stop asking. Customer expectations go unmet because the evidence that would surface them never reaches the people making decisions. The fix is direct access: a searchable insight repository where brand teams can query evidence in plain language without routing every question through a research manager.

No institutional memory

Each study lives in its own deck. Findings from a packaging study conducted eight months ago are invisible to the team running a concept test today. The consequence is redundant research spending and missed pattern recognition across programs. Conveo's compounding insight library addresses this directly: themes, quotes, and video clips from every study are indexed and searchable, so past evidence is reusable rather than buried. Teams identify opportunities that a siloed research approach would have missed entirely.

Speed without credibility

Prioritizing turnaround over rigor produces outputs that stakeholders will not act on. Synthetic personas and generic LLM synthesis introduce the synthetic respondent fault line: findings that cannot be traced to real human behavior, which procurement teams and senior stakeholders now reject, because traceability to real participants has become a baseline requirement. Business growth depends on understanding what potential customers actually think. The fix is real video participants with traceable, source-linked evidence.

Platform sprawl

Stitching together separate platforms for recruitment, interviewing, transcription, and analysis creates manual handoffs at every stage. Each handoff slows the workflow and introduces errors that compound by the time findings reach stakeholders. An end-to-end platform that integrates the full qualitative workflow, from study design through stakeholder-ready reporting, removes those failure points structurally.

Frequently Asked Questions

How Do Enterprises Operationalize Consumer Intelligence So Brand, Marketing, and Product Teams Work from the Same Customer Insights?

What Does a Practical Workflow Look Like for Turning Ongoing Consumer Interviews into Brand Strategy Inputs?

What Makes Consumer Intelligence Outputs Credible Enough for Senior Stakeholders to Act On?

How Does Continuous Consumer Intelligence Differ from a Traditional Quarterly Brand Tracker?

What Should Enterprises Look for in a Consumer Intelligence Platform Beyond Speed and Cost?